The ICN BP family intends to address the deployment of workloads in a large number of edges and also in public clouds, using K8S as the resource orchestrator in each site and ONAP-K8S as the service level orchestrator (across sites). ICN also intends to integrate infrastructure orchestration, which is needed to bring up a site using bare-metal servers. Infrastructure orchestration, which is the focus of this page, needs to ensure that the infrastructure software required on edge servers is installed on a per-site basis, but controlled from a central dashboard. Infrastructure orchestration is expected to do the following:

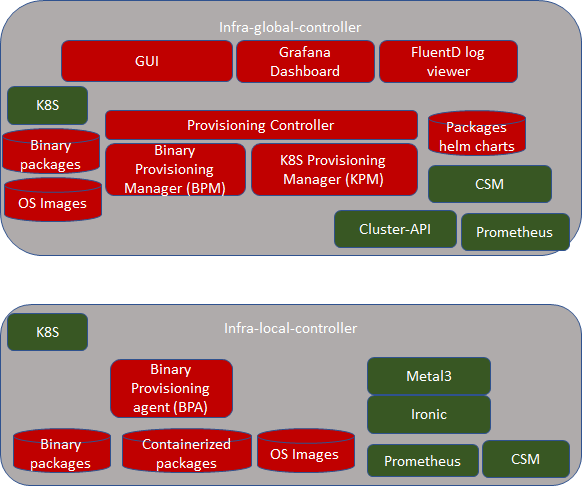

infra-global-controller: If infrastructure provisioning needs to be controlled from a central location, this component is expected to be brought up in one location. This controller communicates with infra-local-controller (which acts as an agent), which performs the actual software installation/update/patching and provisions the software, BIOS, etc.

infra-local-controller: Typically runs on a bootstrap machine at each site and is typically provided as a bootable USB disk. It works in conjunction with infra-global-controller. Note that if there is no requirement to manage software provisioning from a central location, infra-global-controller is brought up along with infra-local-controller.

The user experience needs to be as simple as possible, so that even a novice user is able to set up a site.

Akraino's "Integrated Cloud Native NFV & App Stack" (ICN) Blueprint is a Cloud Native Compute and Network Framework (CN-CNF) that integrates NFV applications with the de-facto cloud native standards and sets up a framework to address 5G, IoT and various Linux Foundation Edge use cases in a cloud native way.

ICN uses ONAP as the Service Orchestration Engine (SOE), together with Cloud Native (CN) projects: Kubernetes as the Resource Orchestration Engine (ROE), Prometheus for monitoring and alerting, OVN as the SDN controller, Container Network Interface (CNI) for orchestration networking, providing networking between the clusters, Envoy as the service proxy, Helm and Operators for package management, and Rook for storage. The framework stack specifies the best configuration methodology and enables development projects, installation scripts and software packages that bind CNCF and LF Edge use cases together.

This document breaks down the hardware requirements, software ingredients, and testing and benchmarking for the R2 and R3 releases, and provides an overall picture of the blueprint's role in edge use cases.

All the green items are existing open source projects. Any enhancements they require are best done in the upstream community.

All the red items are expected to be part of the Akraino BP. In some cases, code from various upstream projects can be leveraged. However, they are marked in red because it is not yet known to what extent the upstream code can be used as-is. Some guidance:

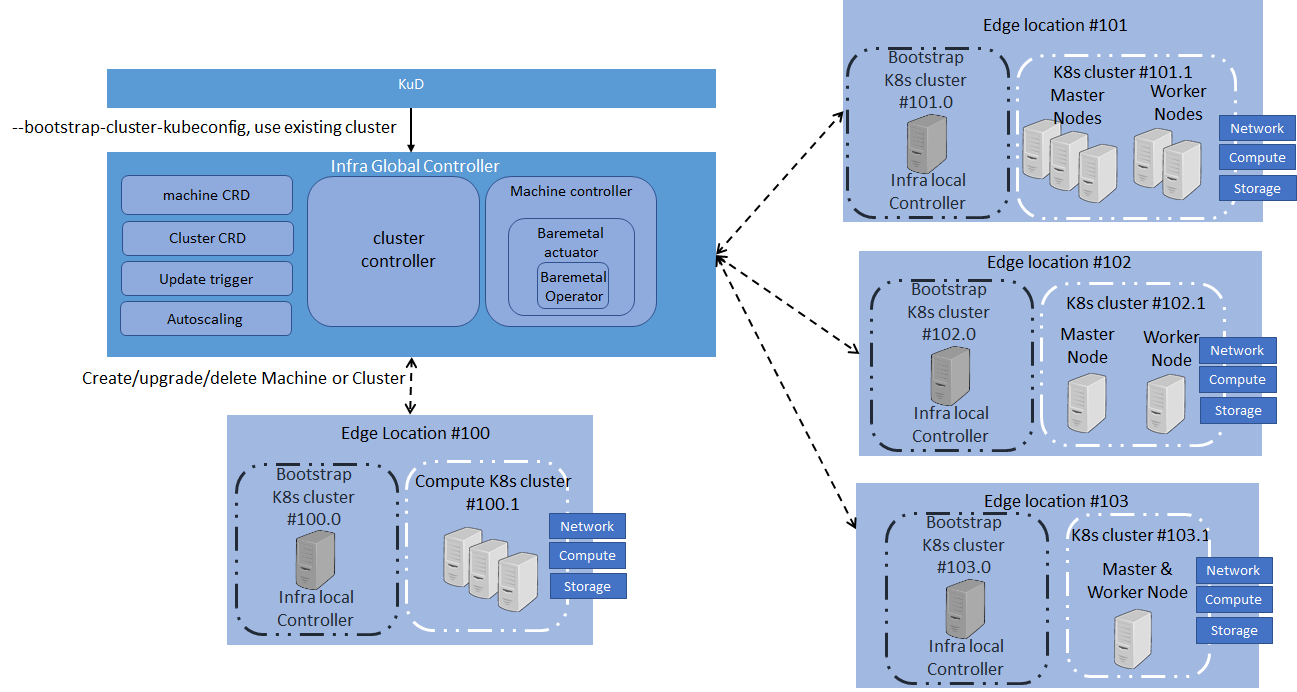

Since there are a few K8S clusters, let us define them:

"infra-local-controller" is expected to run on the bootstrap machine of each location. The bootstrap machine installs the required software on the compute nodes used for future workloads. For example, if a location has 10 servers, 1 server can be used as the bootstrap machine and the other 9 servers as compute nodes for running workloads. The bootstrap machine not only installs all the required software on the compute nodes, but is also expected to patch and update them with newer patched versions of the software.

As the picture above shows, the bootstrap machine itself is based on K8S. Note that this K8S is different from the K8S that gets installed on the compute nodes; that is, these are two different K8S clusters. The bootstrap machine is itself a complete single-node K8S cluster, with the master and minion software combined on that node. All the components of infra-local-controller (such as BPA, Metal3 and Ironic) are themselves containers.

Since infra-local-controller is expected to be reachable from outside, it is expected to be secured using:

Infra-local-controller is expected to be brought up in two ways:

Note that infra-local-controller can be run without infra-global-controller. In the interim release, only infra-local-controller is expected to be supported; infra-global-controller is targeted for the final Akraino R2 release. The goal is that any operation performed manually on infra-local-controller in the interim release is automated by infra-global-controller. Hence, the interface provided by infra-local-controller is flexible enough to support both manual and automated actions.

As indicated above, infra-local-controller is expected to bring up a K8S cluster on the compute nodes used for workloads. Bringing up the workload K8S cluster normally requires the following steps:

Steps 1 and 2 are expected to be handled by Metal3 and Ironic, step 3 by BPA, and step 4 by talking to application-K8S.

User experience for infrastructure administrators:

When using a bootable USB disk:

As a developer:

BPA's job is to install on application-K8S all packages that cannot be installed using kubectl. Hence, BPA is normally used right after the compute nodes are installed with the Linux operating system, before Kubernetes-based packages are installed. BPA is also the CRD controller implementation of infra-local-controller-k8s. We expect to have the following CRs:

BPA also provides RESTful APIs for the following:

Since compute nodes may not have Internet connectivity

BPA also takes care of the following (after the interim release):

BPA is expected to store any private key and secret information in CSM.
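BPA's role as the CRD controller of infra-local-controller-k8s can be pictured as a reconcile loop: compare what a CR asks for with what its status reports, act on the difference, and record progress so that repeated passes are no-ops. The sketch below is a minimal illustration under stated assumptions: `PackageInstallCR`, its fields and the `install` callback are hypothetical and are not the actual BPA CR definitions, which are not specified here.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class PackageInstallCR:
    """Hypothetical BPA custom resource: packages one compute node needs."""
    name: str
    node: str
    packages: List[str]                                  # spec: desired packages
    installed: List[str] = field(default_factory=list)   # status: filled by the controller


def reconcile(cr: PackageInstallCR, install: Callable[[str, str], bool]) -> List[str]:
    """Install every package in the spec that the status does not yet report.

    `install(node, pkg)` is a hypothetical callback that performs the actual
    installation (for example over SSH) and returns True on success.
    Returns the packages installed during this pass.
    """
    done: List[str] = []
    for pkg in cr.packages:
        if pkg in cr.installed:
            continue  # already converged; reconcile must be idempotent
        if install(cr.node, pkg):
            cr.installed.append(pkg)  # record progress in the CR status
            done.append(pkg)
    return done
```

Because `reconcile` skips anything already recorded in `installed`, a controller can safely re-run it on every watch event or periodic resync, which is the usual pattern for Kubernetes controllers. Failed installs are simply retried on the next pass.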

BPA and Ironic related integration:

Ironic is expected to bring up Linux on the compute nodes. It is also expected to create SSH keys and an SSH user automatically for each compute node. For security reasons, usernames and passwords are expected to be stored in SMS in infra-local-controller. BPA is expected to leverage these authentication credentials when it installs the software packages.

CSM is expected to be used not only for storing secrets, but also for securely storing keys and performing crypto operations.
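The Ironic/BPA hand-off described above can be sketched as follows: BPA looks up the per-node SSH credentials from the secret store and uses them to build the remote install command. This is a minimal sketch; `SecretStore` is a hypothetical in-memory stand-in for SMS, `NodeCredentials` and `install_command` are illustrative names, and the use of `apt-get` assumes the Ubuntu 18.04 host OS listed in the software table.

```python
import shlex
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class NodeCredentials:
    username: str   # SSH user created for the node
    key_path: str   # path to the private key generated for the node


class SecretStore:
    """Hypothetical in-memory stand-in for SMS: node name -> credentials."""

    def __init__(self) -> None:
        self._creds: Dict[str, NodeCredentials] = {}

    def put(self, node: str, creds: NodeCredentials) -> None:
        self._creds[node] = creds

    def get(self, node: str) -> NodeCredentials:
        if node not in self._creds:
            raise KeyError(f"no credentials stored for {node}")
        return self._creds[node]


def install_command(store: SecretStore, node: str, address: str,
                    packages: List[str]) -> List[str]:
    """Build an ssh argv that installs `packages` on the node at `address`."""
    creds = store.get(node)
    # Quote each package name so it is passed to the remote shell verbatim.
    remote = "sudo apt-get install -y " + " ".join(shlex.quote(p) for p in packages)
    return ["ssh", "-i", creds.key_path, f"{creds.username}@{address}", remote]
```

Returning an argv list rather than a shell string keeps the local side free of quoting issues; the result can be passed directly to `subprocess.run`.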

Implementation suggestions:

KuD needs to be broken into pieces

There could be multiple edges that need to be brought up. Having an administrator go to each location and use infra-local-controller to bring up the application-K8S cluster on that location's compute nodes does not scale. "infra-global-controller" is expected to provide a centralized software provisioning and configuration system. It provides a single pane of glass for administering the edge locations with respect to infrastructure. Administration involves:

It is expected that infra-local-controller is brought up in each location. The infra-local-controller kubeconfig is expected to be made known to infra-global-controller; beyond that, everything else is taken care of by infra-global-controller, which communicates with the various infra-local-controllers to perform software installation and provisioning.

Infra-global-controller runs in its own K8S cluster, and all of its components are containers. The following components are part of infra-global-controller:

Since infra-global-controller is expected to be reachable from the Internet, it is expected to be secured using:

Assuming that infra-global-controller is brought up with all its micro-services, the following steps are expected to be taken to provision sites/edges:

Infra-global-controller uses Cluster-API to provision OS-related installations at the locations via infra-local-controller.

The following sections describe the components of infra-global-controller.

It has the following functions:

Site information :

Inventory information:

Application-K8S reachability information:

Software and configuration of site:

Implementation notes:

It has the following functions:

Binary package installation and configuration management: This functionality is exposed via a RESTful API that PC calls to trigger the installation. It then internally calls the BPA of infra-local-controller to initiate the installation and configuration process, triggering BPA via the BPA CRs in infra-local-K8S.

Binary package distribution: This functionality is exposed via a RESTful API called by PC. It determines the differences between the binary and container packages it holds locally for this location and the packages already present in the BPA. Any differences are uploaded to BPA via the BPA-provided RESTful API.

Collection of KubeConfig of application-K8S: This functionality gets the KubeConfig of application-K8S from BPA and stores it in the site-specific database table.
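The two data-handling functions above, package distribution and KubeConfig collection, can be sketched as follows. This assumes packages are identified by name and version (a content digest would be a more robust key), and it stores every site's KubeConfig in a single SQLite table keyed by site ID rather than in a separate table per site; all function and table names are hypothetical.

```python
import sqlite3
from typing import Dict, List, Optional, Tuple


def packages_to_upload(local: Dict[str, str],
                       remote: Dict[str, str]) -> List[Tuple[str, str]]:
    """Return (name, version) pairs that BPA lacks or holds at a different version.

    `local` maps package name -> version held for this location; `remote` is
    what BPA reports it already has. The result is sorted by package name.
    """
    return sorted((name, ver) for name, ver in local.items() if remote.get(name) != ver)


def init_db(conn: sqlite3.Connection) -> None:
    conn.execute(
        "CREATE TABLE IF NOT EXISTS site_kubeconfig ("
        " site_id TEXT PRIMARY KEY,"
        " kubeconfig TEXT NOT NULL)"
    )


def store_kubeconfig(conn: sqlite3.Connection, site_id: str, kubeconfig: str) -> None:
    # A re-collected KubeConfig replaces the previous one for the same site.
    conn.execute(
        "INSERT OR REPLACE INTO site_kubeconfig (site_id, kubeconfig) VALUES (?, ?)",
        (site_id, kubeconfig),
    )
    conn.commit()


def load_kubeconfig(conn: sqlite3.Connection, site_id: str) -> Optional[str]:
    row = conn.execute(
        "SELECT kubeconfig FROM site_kubeconfig WHERE site_id = ?", (site_id,)
    ).fetchone()
    return row[0] if row else None
```

Computing the difference before uploading keeps transfers to edge sites small, which matters when compute nodes may lack Internet connectivity and BPA acts as the local package source.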

KPM is used to install containerized packages on application-K8S. KPM looks at all the relevant Helm charts and instantiates them by talking to application-K8S.

Implementation details:

Code can be borrowed from the ONAP Multi-Cloud K8S plugin service which does similar functionality.
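KPM's job of instantiating Helm charts against application-K8S can be sketched as building one Helm invocation per chart and pointing Helm at the site's KubeConfig through the standard `KUBECONFIG` environment variable. The release names, chart paths and namespaces are hypothetical, and this is not the ONAP Multi-Cloud K8S plugin's actual code; `helm upgrade --install` is used so that re-running the same plan is idempotent.

```python
import os
from typing import Dict, List, NamedTuple


class ChartSpec(NamedTuple):
    release: str    # Helm release name (hypothetical)
    chart: str      # chart reference or local path (hypothetical)
    namespace: str  # target namespace on application-K8S


def helm_commands(charts: List[ChartSpec]) -> List[List[str]]:
    """One `helm upgrade --install` argv per chart; safe to re-run."""
    return [
        ["helm", "upgrade", "--install", c.release, c.chart, "--namespace", c.namespace]
        for c in charts
    ]


def helm_env(kubeconfig_path: str) -> Dict[str, str]:
    """Copy of the current environment with KUBECONFIG pointing at the site."""
    env = dict(os.environ)
    env["KUBECONFIG"] = kubeconfig_path
    return env
```

Each argv can then be executed with `subprocess.run(cmd, env=helm_env(path), check=True)`, where `path` is the KubeConfig collected for that site.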

Note: The term ZTP (Zero Touch Provisioning) is used in the BP presentation. It covers both infra-local-controller and infra-global-controller.

As shown in the figure above, infra-local-controller is itself a bootstrap K8s cluster that brings up the compute K8s cluster in the edge location. Infra-local-controller consists of BPA, Metal3 and the Baremetal Operator (Ironic). This section explains them in detail.

This subsection is based on https://github.com/metal3-io/metal3-docs/blob/master/design/nodes-machines-and-hosts.md

The Baremetal Operator provides hardware provisioning of compute nodes through the Kubernetes API. It defines a CRD, BareMetalHost, where each object represents a physical server and its hardware inventory. Ironic is responsible for provisioning the physical servers, and the Baremetal Operator is responsible for wrapping Ironic and representing the servers as CRD objects.
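To make the BareMetalHost object concrete, the sketch below builds a minimal manifest as a Python dict and serializes it to JSON, which `kubectl apply -f` accepts. The field names follow the Metal3 `metal3.io/v1alpha1` API, but should be checked against the Baremetal Operator version actually deployed; the host name, MAC, BMC address and Secret name are made-up example values.

```python
import json
from typing import Any, Dict


def baremetal_host(name: str, namespace: str, boot_mac: str,
                   bmc_address: str, credentials_secret: str) -> Dict[str, Any]:
    """Minimal BareMetalHost manifest for one physical compute node."""
    return {
        "apiVersion": "metal3.io/v1alpha1",
        "kind": "BareMetalHost",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "online": True,               # ask the operator to keep the host powered on
            "bootMACAddress": boot_mac,   # MAC of the NIC used for provisioning
            "bmc": {
                "address": bmc_address,                 # e.g. an IPMI endpoint
                "credentialsName": credentials_secret,  # Secret with BMC username/password
            },
        },
    }


if __name__ == "__main__":
    host = baremetal_host("compute-01", "metal3", "00:1e:67:aa:bb:cc",
                          "ipmi://192.168.111.1:623", "compute-01-bmc-secret")
    print(json.dumps(host, indent=2))
```

Once such an object is created, the Baremetal Operator drives Ironic to inspect and provision the host, and reports progress in the object's status.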

Sequence diagrams involving all of the above, plus CSM, logging and monitoring:

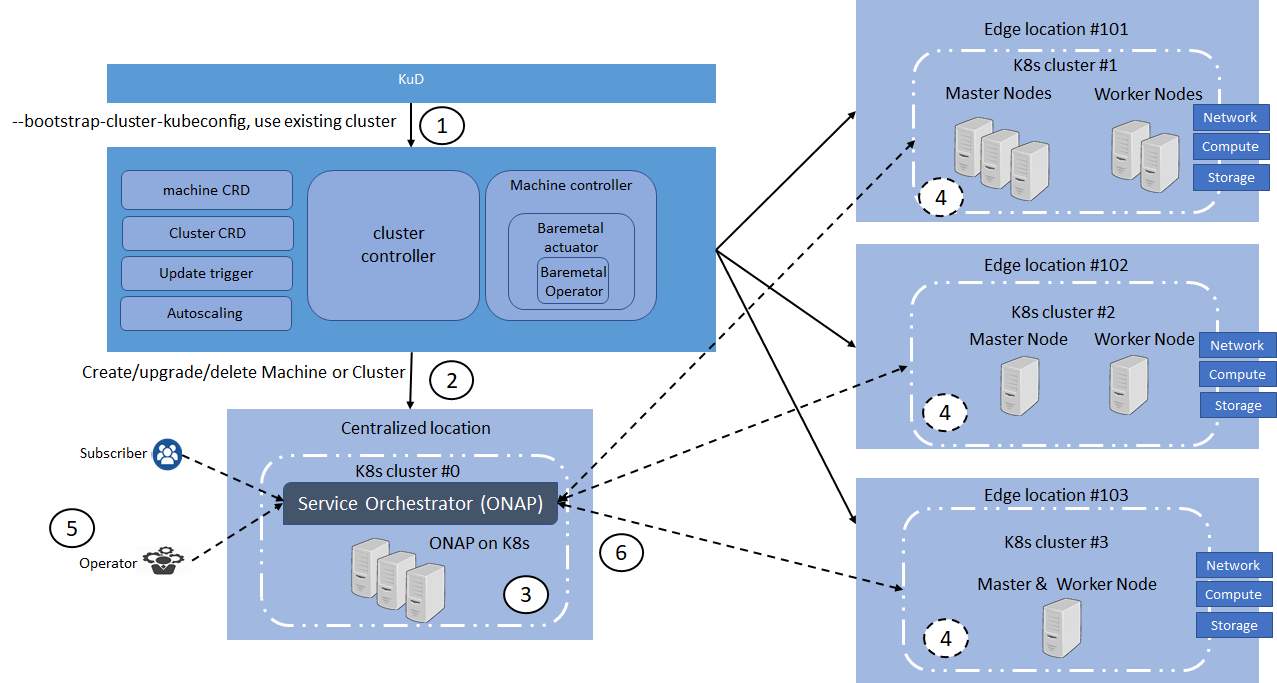

The global ZTP system is used for infrastructure provisioning and configuration in the ICN family. It is subdivided into three deployments: Cluster-API, KuD and ONAP on K8s.

One of the major challenges for a cloud admin managing multiple clusters in different edge locations is coordinating the control plane configuration of each cluster remotely and managing patches and updates/upgrades across multiple machines. Cluster-API provides declarative APIs to represent clusters and the machines inside a cluster. It abstracts the common logic found across cluster providers such as GKE, AWS and vSphere, and consolidates it into abstractions for functions such as grouping machines for upgrades and autoscaling.

In the ICN family stack, the Cluster-API bare metal provider is the Metal3 Baremetal Operator. It is used as a machine actuator that uses Ironic to provide a K8s API for managing the physical servers, which in turn run Kubernetes clusters on the bare metal hosts. Cluster-API manages the Kubernetes control plane through the Cluster CRD, and Kubernetes nodes (host machines) through the Machine, MachineSet and MachineDeployment CRDs. It also has an autoscaler mechanism that checks the MachineSet CRD, which is analogous to a K8s ReplicaSet; the MachineDeployment CRD is analogous to a K8s Deployment. MachineDeployment CRDs are used to update/upgrade software drivers in

The Cluster-API provider with the Baremetal Operator is used to provision physical servers and to initiate the Kubernetes cluster with the user configuration.
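The ReplicaSet analogy for MachineSets can be made concrete with a simplified scaling step: given a desired replica count and the machines that currently exist, decide which machines to create or delete. This is an illustrative sketch, not Cluster-API's controller code; the machine naming scheme and the rule of deleting from the end of the sorted list are assumptions made for the example (Cluster-API applies its own deletion policy).

```python
from typing import List, Tuple


def scale_actions(desired: int, machines: List[str],
                  name_prefix: str) -> Tuple[List[str], List[str]]:
    """Return (to_create, to_delete) so the machine count converges to `desired`."""
    if desired < 0:
        raise ValueError("desired replicas must be >= 0")
    current = sorted(machines)
    if len(current) >= desired:
        # Scale down (or no-op): drop machines from the end of the sorted list.
        return [], current[desired:]
    # Scale up: generate names that do not collide with existing machines.
    existing = set(current)
    to_create: List[str] = []
    index = 0
    while len(current) + len(to_create) < desired:
        candidate = f"{name_prefix}-{index}"
        if candidate not in existing:
            to_create.append(candidate)
        index += 1
    return to_create, []
```

In Cluster-API with the Metal3 provider, each created Machine is then matched to an available BareMetalHost, which is what turns a replica count into provisioned physical servers.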

The Kubernetes Deployer (KuD) in ONAP can be reused to deploy the K8s App components (as shown in Figure II), the NFV-specific components and the NFVi SDN controller in the edge cluster. In the R2 release, KuD will be used to deploy K8s addons such as Prometheus, Rook, Virtlet, OVN, NFD and Intel device plugins in the edge location (as shown in Figure I). In the R3 release, KuD will evolve into an "ICN Operator" that installs all K8s addons.

A highly available Kubernetes cluster, provisioned and configured by Cluster-API, will be used to deploy ONAP on K8s. The ICN family uses ONAP Operations Manager (OOM) for the ONAP installation. OOM provides a set of Helm charts for installing ONAP on a K8s cluster. The ICN family will create the OOM installation and automate the ONAP installation once a Kubernetes cluster is configured by Cluster-API.

ONAP will be the Service Orchestration Engine in the ICN family. It is responsible for VNF life cycle management, tenant management, tenant resource quota allocation, and managing the Resource Orchestration Engine (ROE) to schedule VNF workloads with multi-site scheduler awareness and Hardware Platform Awareness (HPA). An Akraino dashboard that sits on top of ONAP is required to deploy the VNFs.

Kubernetes will be the Resource Orchestration Engine in the ICN family, managing network, storage and compute resources for the VNF applications. The ICN family will use multiple container runtimes, such as Virtlet, Kata Containers, KubeVirt and gVisor. Each release supports different container runtimes, depending on the use cases it focuses on.
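One common Kubernetes mechanism for running different workloads on different container runtimes is RuntimeClass: a cluster-scoped object maps a name to a runtime handler configured on the nodes, and a pod opts in through `spec.runtimeClassName`. The sketch below builds both objects. The handler name `kata` is an assumption that must match the node's CRI configuration, and `node.k8s.io/v1beta1` matches the Kubernetes releases of this era (newer clusters use `node.k8s.io/v1`). Not every runtime listed above is selected this way; Virtlet and KubeVirt, for instance, rely on their own annotations or CRDs.

```python
from typing import Any, Dict


def runtime_class(name: str, handler: str) -> Dict[str, Any]:
    """Cluster-scoped RuntimeClass mapping `name` to a CRI runtime handler."""
    return {
        "apiVersion": "node.k8s.io/v1beta1",
        "kind": "RuntimeClass",
        "metadata": {"name": name},
        "handler": handler,  # must match a handler configured in the node's CRI runtime
    }


def pod_with_runtime(pod_name: str, image: str, runtime_class_name: str) -> Dict[str, Any]:
    """Pod that asks the kubelet to run it with the given RuntimeClass."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": {
            "runtimeClassName": runtime_class_name,
            "containers": [{"name": pod_name, "image": image}],
        },
    }
```

Keeping the runtime choice in a named RuntimeClass lets each release change which handler backs a name without touching workload manifests.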

The Kubernetes module is divided into three groups: K8s App components, NFV-specific components and NFVi SDN controller components. All of these components will be installed using KuD addons.

K8s App components: This block contains the K8s storage plugins, container runtimes, OVN for networking, the service proxy and Prometheus for monitoring, and is responsible for application management.

NFV Specific components: This block is responsible for K8s compute management to support both software and hardware acceleration (including network acceleration), with CPU pinning and device plugins such as QAT, FPGA, SRIOV and GPU.

SDN Controller components: This block is responsible for managing the SDN controller and providing additional features such as Service Function Chaining (SFC) and a network route manager.
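For the NFV-specific block, the sketch below shows how a workload might request pinned CPUs together with a device-plugin resource. With the kubelet's `static` CPU manager policy, a container gets exclusive cores when its pod is in the Guaranteed QoS class (requests equal limits for CPU and memory) and asks for an integer number of CPUs. The resource name `qat.intel.com/generic` is the one the Intel QAT device plugin commonly advertises, but it is an assumption to verify against the deployed plugin; the pod name and image are hypothetical.

```python
from typing import Any, Dict


def nfv_pod(name: str, image: str, cpus: int, memory: str,
            devices: Dict[str, str]) -> Dict[str, Any]:
    """Pod spec requesting `cpus` whole cores plus device-plugin resources.

    Requests and limits are identical, which puts the pod in the Guaranteed
    QoS class; extended resources must have requests == limits anyway.
    """
    if isinstance(cpus, bool) or not isinstance(cpus, int) or cpus < 1:
        raise ValueError("cpus must be a positive integer for exclusive pinning")
    resources = {"cpu": str(cpus), "memory": memory, **devices}
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [{
                "name": name,
                "image": image,
                "resources": {"requests": dict(resources), "limits": dict(resources)},
            }],
        },
    }
```

For example, `nfv_pod("vfw", "example/vfw:1.0", 2, "2Gi", {"qat.intel.com/generic": "1"})` asks for two pinned cores and one QAT device on a node where the static CPU manager policy and the QAT device plugin are enabled.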

| Components | Link | Akraino Release target |
|---|---|---|
| Cluster-API | | R2 |
| Cluster-API-Provider-bare metal | | R2 |
| Provision stack - Metal3 | | R2 |
| Host Operating system | Ubuntu 18.04 | R2 |
| QuickAssist Technology (QAT) drivers | Intel® C627 Chipset - https://ark.intel.com/content/www/us/en/ark/products/97343/intel-c627-chipset.html | R2 |
| NIC drivers | | R2 |
| ONAP | Latest release 3.0.1-ONAP - https://github.com/onap/integration/ | R2 |
| Workloads | | R3 |
| KUD | | R2 |
| Kubespray | | R2 |
| K8s | | R2 |
| Docker | https://github.com/docker - 18.09 | R2 |
| Virtlet | | R2 |
| SDN - OVN | | R2 |
| OpenvSwitch | https://github.com/openvswitch/ovs - 2.10.1 | R2 |
| Ansible | https://github.com/ansible/ansible - 2.7.10 | R2 |
| Helm | https://github.com/helm/helm - 2.9.1 | R2 |
| Istio | https://github.com/istio/istio - 1.0.3 | R2 |
| Kata container | | R3 |
| Kubevirt | https://github.com/kubevirt/kubevirt/ - v0.18.0 | R3 |
| Collectd | | R2 |
| Rook/Ceph | | R2 |
| MetalLB | | R3 |
| Kube - Prometheus | | R2 |
| OpenNESS | Will be updated soon | R3 |
| Multi-tenancy | | R2 |
| Knative | | R3 |
| Device Plugins | https://github.com/intel/intel-device-plugins-for-kubernetes - QAT, SRIOV | R2 |
| Device Plugins | https://github.com/intel/intel-device-plugins-for-kubernetes - FPGA, GPU | R3 |
| Node Feature Discovery | | R2 |
| CNI | https://github.com/coreos/flannel/ - release tag v0.11.0; https://github.com/containernetworking/cni - release tag v0.7.0; https://github.com/containernetworking/plugins - release tag v0.8.1; https://github.com/containernetworking/cni#3rd-party-plugins - Multus v3.3tp, SRIOV CNI v2.0 (with SRIOV Network Device plugin) | R2 |
| Conformance Test for K8s | | R2 |

| Release | Block | Components | Identified Gaps | Initial thought |
|---|---|---|---|---|
| R2 | ZTP | Cluster-API | Cluster upgrade is yet to be supported | The definition of "cluster upgrade" and the expected behaviour should be documented here. For example, a cluster upgrade could be a kubelet version upgrade. |
| | | | No node repair mechanism | Node logs such as kubelet logs should be enabled in the automation script |
| | | | No multi-master support | To be confirmed with engineers |
| | KuD | Virtlet, Multus, NFD & Istio | Installation scripts are static Ansible scripts; they need to be DaemonSets | |
| | | Virtlet & Intel Device plugin | Need to check Virtlet support for the device plugin framework | |
| | ONAP | OOM automation | Portal chart is deployed with a load balancer using a floating IP address | |
| | Dashboard | | Monitoring tool to check the deployment across multiple sites and show the metrics/statistics details to the operator | |
| R3 | APP use cases | SDWAN | OpenWRT is a potential candidate for configuring the SDWAN use case. More information is required. | |

| Timeline | Release | Required state of implementation | Expected Result |
|---|---|---|---|
| Aug 2nd | ICN-v0.1.0 | | |
| Aug 9th | ICN-v0.1.1 | | |
| Aug 16th | ICN-v0.2.0 | | |

Akraino R2 release

| Components | Required state of implementation | Expected Result |
|---|---|---|
| ZTP | All-in-one ZTP script with Cluster-API and Baremetal Operator | |
| ONAP | Should be integrated with the above script | |
| KuD addons | DaemonSet YAML should be integrated with the above script | |
| Tenant Manager | Should be deployed as part of KuD addons | |
| Dashboard | Dashboard runs as a Deployment in the ONAP cluster | |
| App | Instantiate 3 workloads from ONAP to show the SFC functionality in the Dashboard | |
| CI | End-to-end testing script | |

| Components | Required state of implementation | Expected Result |
|---|---|---|
| ZTP | All-in-one ZTP script with Cluster-API and Baremetal Operator | |
| ONAP | Should be integrated with the above script | |
| KuD addons | DaemonSet YAML should be integrated with the above script | |
| Dashboard | Dashboard runs as a Deployment in the ONAP cluster | |
| App | Instantiate 3 workloads from ONAP to show the SFC functionality in the Dashboard | |
| CI | End-to-end testing script | |

Yet to discuss