The ICN blueprint (BP) family addresses the deployment of workloads across a large number of edge sites and in public clouds, using Kubernetes (K8s) as the resource orchestrator in each site and ONAP-K8S as the service-level orchestrator across sites. ICN also intends to integrate infrastructure orchestration, which is needed to bring up a site on bare-metal servers. Infrastructure orchestration, the focus of this page, must ensure that the infrastructure software required on edge servers is installed on a per-site basis but controlled from a central dashboard. Infrastructure orchestration is expected to provide the following:

infra-global-controller: If infrastructure provisioning needs to be controlled from a central location, this component is brought up in one location. It communicates with the infra-local-controller, which acts as an agent that performs the actual software installation, update, and patching, and provisions the software, BIOS, etc.

infra-local-controller: Typically sits in each site on a bootstrap machine and is usually provided as a bootable USB disk. It works in conjunction with the infra-global-controller. Note that if there is no requirement to manage software provisioning from a central location, the infra-global-controller is brought up along with the infra-local-controller.

The user experience needs to be as simple as possible, so that even a novice user is able to set up a site.

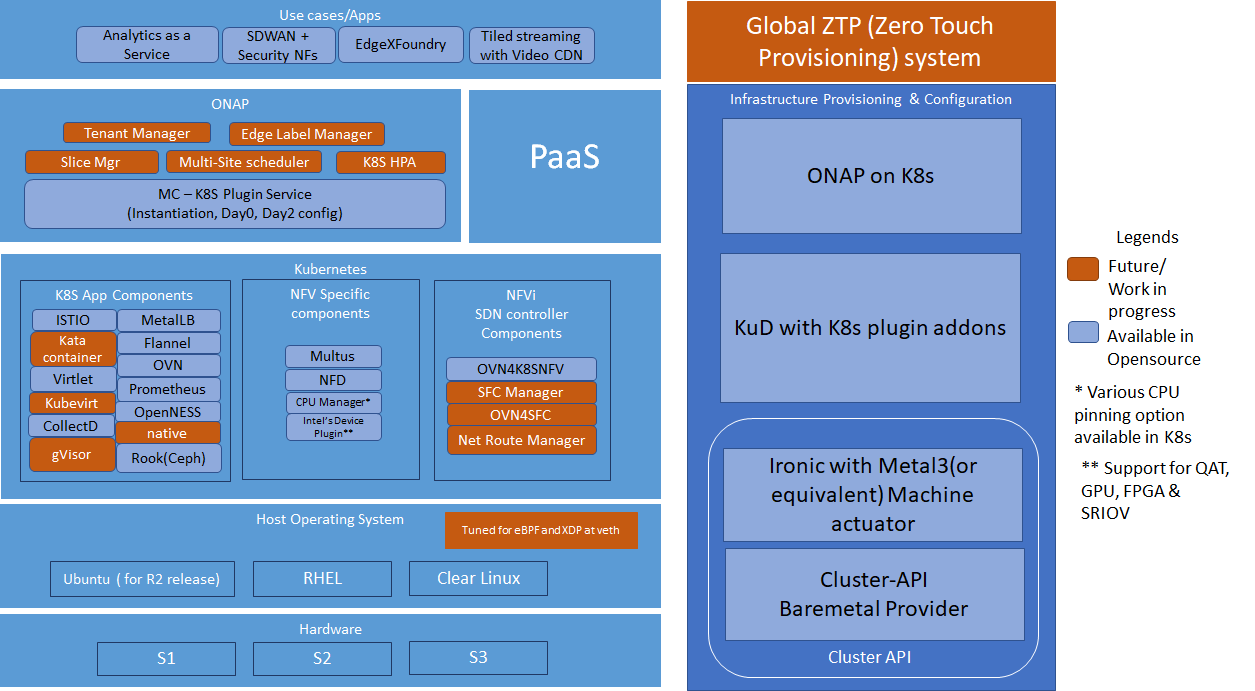
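The relationship between the two controllers can be sketched as a small Python model. This is only an illustration under stated assumptions: the class names, the `provision` and `rollout` methods, and the site and package names are hypothetical and do not come from the ICN code base. The sketch only shows the idea of one central component fanning a provisioning request out to per-site agents.

```python
from dataclasses import dataclass, field


@dataclass
class InfraLocalController:
    """Hypothetical per-site agent running on the bootstrap machine."""
    site: str
    installed: dict = field(default_factory=dict)  # package name -> version

    def provision(self, package: str, version: str) -> str:
        # Stand-in for the real install/update/patch step on this site.
        self.installed[package] = version
        return f"{self.site}: {package}=={version}"


@dataclass
class InfraGlobalController:
    """Hypothetical central controller that fans requests out to sites."""
    sites: dict = field(default_factory=dict)  # site name -> local controller

    def register(self, local: InfraLocalController) -> None:
        self.sites[local.site] = local

    def rollout(self, package: str, version: str) -> list:
        # Ask every registered site to bring the package to the same version.
        return [lc.provision(package, version) for lc in self.sites.values()]


def build_demo() -> InfraGlobalController:
    # Two illustrative sites; the names are made up for this sketch.
    ctrl = InfraGlobalController()
    for name in ("edge-a", "edge-b"):
        ctrl.register(InfraLocalController(site=name))
    return ctrl
```

Calling `build_demo().rollout("kubelet", "1.15.0")` returns one status string per registered site. When central management is not required, the same model applies with a single site where both controllers run together.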

Akraino's "Integrated Cloud Native NFV & App Stack" (ICN) Blueprint is a Cloud Native Compute and Network Framework (CN-CNF) that integrates NFV applications with de facto standards and sets a framework to address 5G, IoT, and various Linux Foundation edge use cases in a cloud-native way.

ICN uses ONAP as the Service Orchestration Engine (SOE) and Cloud Native (CN) projects for the rest of the stack: Kubernetes as the Resource Orchestration Engine (ROE), Prometheus for monitoring and alerting, OVN as the SDN controller, Container Network Interface (CNI) for orchestration networking, which provides networking between the clusters, Envoy as the service proxy, Helm and Operators for package management, and Rook for storage. The framework stack specifies the best configuration methodology and enables the development projects, installation scripts, and software packages that bind CNCF and LF Edge use cases together.

This document breaks down the hardware requirements, software ingredients, testing, and benchmarking for the R2 and R3 releases, and provides an overall picture of the blueprint's effect in edge use cases.

One of the major challenges for a cloud admin managing multiple clusters in different edge locations is coordinating the control-plane configuration of each cluster remotely while managing patches and updates/upgrades across multiple machines. Cluster-API provides declarative APIs to represent clusters and the machines inside a cluster. It abstracts the common logic found across cluster providers such as GKE, AWS, and vSphere, consolidating it into shared functions such as grouping machines for upgrades and autoscaling.

In the ICN family stack, the Cluster-API bare-metal provider is used as the machine actuator; it uses Ironic to provide a Kubernetes API for managing the physical servers that also run bare-metal Kubernetes. Cluster-API manages the Kubernetes control plane through the Cluster CRD, and Kubernetes nodes (host machines) through the Machine, MachineSet, and MachineDeployment CRDs. It also has an autoscaler mechanism that checks the MachineSet CRD, which is analogous to a K8s ReplicaSet, and the MachineDeployment CRD, which is analogous to a K8s Deployment.
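The ReplicaSet analogy can be made concrete with a toy reconcile loop. The sketch below is not Cluster-API code: the `MachineSet` dataclass, the `reconcile` function, the machine naming scheme, and the choice to delete the newest machines first are all assumptions made for illustration. It only shows the declarative pattern in which a controller compares the desired replica count with the observed machines and acts on the difference.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class MachineSet:
    """Toy stand-in for a MachineSet: a desired count of identical machines."""
    name: str
    replicas: int                                       # desired state
    machines: List[str] = field(default_factory=list)   # observed state


def reconcile(ms: MachineSet) -> List[str]:
    """Move the observed machines toward ms.replicas; return actions taken."""
    actions = []
    next_index = 0
    # Scale up: create machines until the observed count matches the spec.
    while len(ms.machines) < ms.replicas:
        candidate = f"{ms.name}-{next_index}"
        next_index += 1
        if candidate in ms.machines:
            continue  # name already in use, try the next index
        ms.machines.append(candidate)
        actions.append(f"create {candidate}")
    # Scale down: remove the most recently added machines first
    # (an arbitrary policy chosen for this sketch).
    while len(ms.machines) > ms.replicas:
        actions.append(f"delete {ms.machines.pop()}")
    return actions
```

Running `reconcile` twice without changing `replicas` produces no actions the second time, which is the property that lets an autoscaler or an operator change only the desired count and leave the rest to the controller.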

The Kubernetes deployer in ONAP can be reused to deploy the K8s App components.

ONAP Block and Modules:

Kubernetes Block and Modules:

Apps/ Use cases:

| Components | Link / Version | Akraino release target |
|---|---|---|
| Cluster-API | | R2 |
| Cluster-API-Provider-bare metal | | R2 |
| Provision stack - Metal3 | | R2 |
| Host Operating system | Ubuntu 18.04 | R2 |
| QuickAssist Technology (QAT) drivers | Intel® C627 Chipset - https://ark.intel.com/content/www/us/en/ark/products/97343/intel-c627-chipset.html | R3 |
| NIC drivers | | R3 |
| ONAP | Latest release 3.0.1-ONAP - https://github.com/onap/integration/ | R2 |
| Workloads | OpenWRT SDWAN - https://openwrt.org/ | R3 |
| KUD | | R2 |
| Kubespray | | R2 |
| K8s | | R2 |
| Docker | https://github.com/docker - 18.09 | R2 |
| Virtlet | | R2 |
| SDN - OVN | 0.3.0 | R2 |
| OpenvSwitch | | R2 |
| Ansible | https://github.com/ansible/ansible - 2.7.10 | R2 |
| Helm | https://github.com/helm/helm - 2.9.1 | R2 |
| Istio | https://github.com/istio/istio - 1.0.3 | R2 |
| Kata container | | R3 |
| Kubevirt | https://github.com/kubevirt/kubevirt/ - v0.18.0 | R3 |
| Collectd | | R2 |
| Rook/Ceph | | R3 |
| MetalLB | | R3 |
| Kube - Prometheus | | R3 |
| OpenNESS | Will be updated soon | R3 |
| Multi-tenancy | | R2 |
| Knative | | R3 |
| Device Plugins | https://github.com/intel/intel-device-plugins-for-kubernetes - | R2 |
| Node Feature Discovery | | R2 |
| CNI | https://github.com/coreos/flannel/ - release tag v0.11.0; https://github.com/containernetworking/cni - release tag v0.7.0; https://github.com/containernetworking/plugins - release tag v0.8.1; https://github.com/containernetworking/cni#3rd-party-plugins - Multus v3.3tp, SRIOV CNI v2.0 (with SRIOV Network Device plugin) | R2 |
| Conformance Test for K8s | | R2 |

| Release | Block | Components | Identified Gaps | Initial thought |
|---|---|---|---|---|
| R2 | | | | |
| R3 | | | | |