This wiki describes the specifications for designing the Binary Provisioning Agent (BPA) required for the Integrated Cloud Native (ICN) Akraino project.

The BPA is part of the infra local controller, which runs as a bootstrap k8s cluster in the ICN project. As described in the Integrated Cloud Native Akraino project, the purpose of the BPA is to install packages that cannot be installed using kubectl. It is called once the baremetal operator has installed the operating system (Linux) on the compute nodes. For more information on the functions the BPA carries out, see the ICN Akraino project page.

We do not intend to make any changes to the existing Kubernetes API in order to implement the specifications described in this document. We will simply extend the Kubernetes API using a Custom Resource Definition (CRD) and then create a custom controller that handles the requirements of our provisioning agent custom resource.

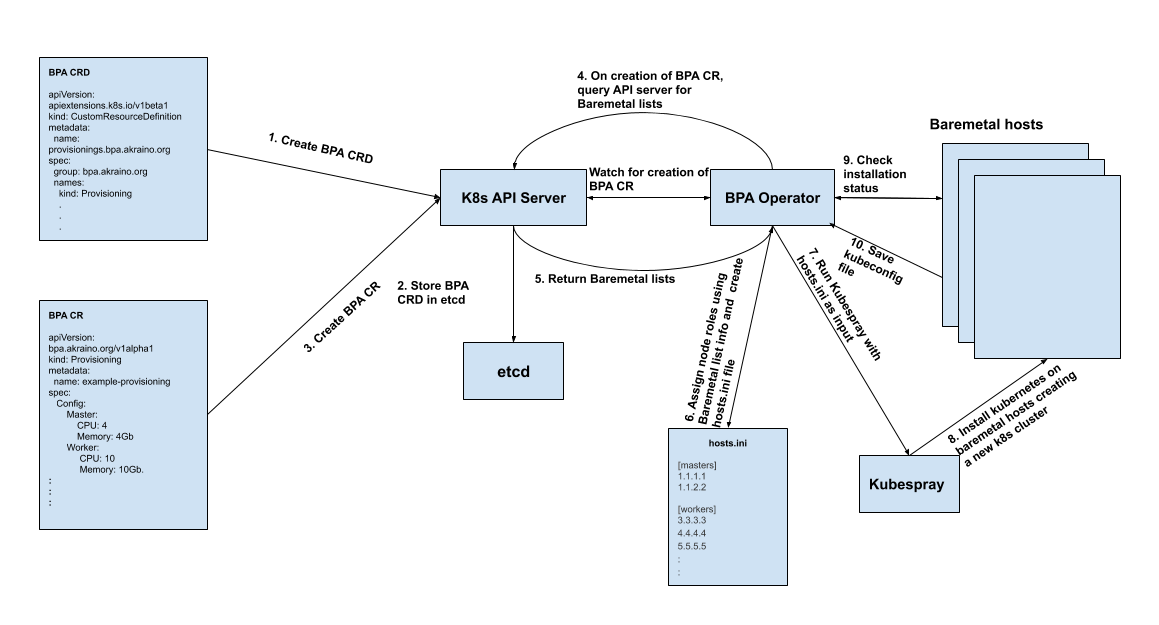

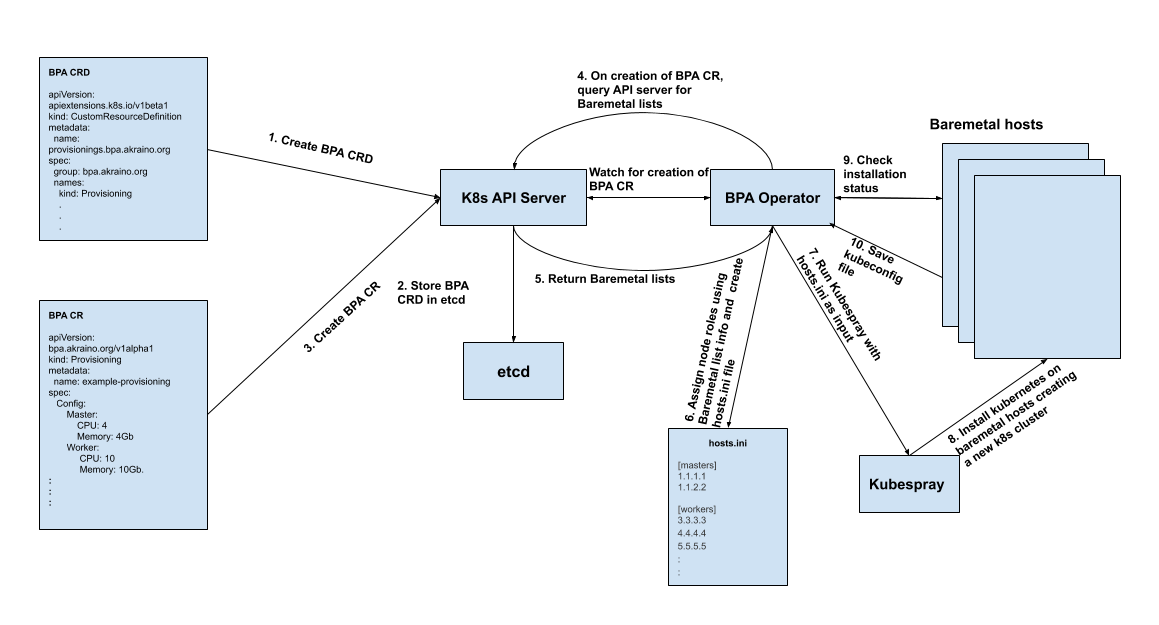

Prerequisites: This workflow assumes that the baremetal CR and baremetal operator have been created and that the operator has successfully installed Linux on the compute nodes. It also assumes that the BPA controller is running.

Fig 1: Illustration of the proposed workflow

Create the BPA Custom Resource

The BPA operator continuously watches the k8s API server. Once it sees that a new BPA CR object has been created, it queries the k8s API server for the baremetal hosts list. The baremetal hosts list contains information about the provisioned compute nodes, including the IP address, CPU, and memory of each host.

The BPA operator inspects the baremetal hosts list and determines which hosts should be masters and which should be workers. Because the master and worker fields accept several parameters (CPU, memory, MAC address, and so on), it can do this in various ways.
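One of these ways, matching on MAC address, might look like the following minimal Go sketch. The `Host` type and the `roleByMAC` and `containsMAC` helpers are hypothetical names introduced here for illustration; they are not part of the BPA code or the baremetal operator API.

```go
package main

import "strings"

// Host is a hypothetical, trimmed-down view of one entry in the
// baremetal hosts list: a name plus the MAC addresses of its NICs.
type Host struct {
	Name string
	MACs []string
}

// roleByMAC returns "master" or "worker" when one of the host's NICs
// carries a MAC address requested for that role, and "" otherwise.
func roleByMAC(h Host, masterMACs, workerMACs []string) string {
	for _, mac := range h.MACs {
		if containsMAC(masterMACs, mac) {
			return "master"
		}
		if containsMAC(workerMACs, mac) {
			return "worker"
		}
	}
	return ""
}

// containsMAC reports whether mac is in list, ignoring letter case.
func containsMAC(list []string, mac string) bool {
	for _, m := range list {
		if strings.EqualFold(m, mac) {
			return true
		}
	}
	return false
}
```

A host that matches neither list gets an empty role, which leaves the caller free to skip it or report it.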

The BPA operator then creates the hosts.ini file using the assigned roles and their corresponding IP addresses.

The BPA operator then installs Kubernetes on the compute nodes using Kubespray, thus creating an active Kubernetes cluster. During installation, it continues to check the installation status.

On successful completion of the k8s cluster installation, the BPA operator saves the application-k8s kubeconfig file so that it can later access the k8s cluster and make changes such as applying software updates or adding a worker node.

The BPA CRD tells the Kubernetes API how to expose the provisioning custom resource object. The CRD YAML file is applied using `kubectl create -f bpa_v1alpha1_provisioning_crd.yaml`. See below for the CRD definition.

```yaml
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: provisionings.bpa.akraino.org
spec:
  group: bpa.akraino.org
  names:
    kind: Provisioning
    listKind: ProvisioningList
    plural: provisionings
    singular: provisioning
    shortNames:
    - bpa
  scope: Namespaced
  subresources:
    status: {}
  validation:
    openAPIV3Schema:
      properties:
        apiVersion:
          description:
          type: string
        kind:
          description:
          type: string
        metadata:
          type: object
        spec:
          type: object
        status:
          type: object
  version: v1alpha1
  versions:
  - name: v1alpha1
    served: true
    storage: true
```

The provisioning_types.go file is the API for the provisioning agent custom resource.

```go
import metav1 "k8s.io/apimachinery/pkg/apis/meta/v1"

// ProvisioningSpec defines the desired state of Provisioning
type ProvisioningSpec struct {
	Masters []map[string]Master `json:"masters,omitempty"`
	Workers []map[string]Worker `json:"workers,omitempty"`
}

// ProvisioningStatus defines the observed state of Provisioning
type ProvisioningStatus struct {
}

// Provisioning is the Schema for the provisionings API
type Provisioning struct {
	metav1.TypeMeta   `json:",inline"`
	metav1.ObjectMeta `json:"metadata,omitempty"`

	Spec   ProvisioningSpec   `json:"spec,omitempty"`
	Status ProvisioningStatus `json:"status,omitempty"`
}

// ProvisioningList contains a list of Provisioning
type ProvisioningList struct {
	metav1.TypeMeta `json:",inline"`
	metav1.ListMeta `json:"metadata,omitempty"`
	Items           []Provisioning `json:"items"`
}

// Master contains resource requirements for a master node
type Master struct {
	CPU        int32  `json:"cpu,omitempty"`
	Memory     string `json:"memory,omitempty"`
	MACaddress string `json:"mac-address,omitempty"`
}

// Worker contains resource requirements for a worker node
type Worker struct {
	CPU        int32  `json:"cpu,omitempty"`
	Memory     string `json:"memory,omitempty"`
	SRIOV      bool   `json:"sriov,omitempty"`
	QAT        bool   `json:"qat,omitempty"`
	MACaddress string `json:"mac-address,omitempty"`
}
```

The variables in the ProvisioningSpec struct are used to create the data structures in the YAML spec for the custom resource. The ProvisioningSpec struct shown above defines two variables, Masters and Workers. Two sample custom resources follow.

```yaml
apiVersion: bpa.akraino.org/v1alpha1
kind: Provisioning
metadata:
  name: provisioning-sample
  labels:
    cluster: cluster-abc
    owner: c1
spec:
  masters:
  - master:
      mac-address: 00:c6:14:04:61:b2
  workers:
  - worker:
      mac-address: 00:c6:14:04:61:b2
```

```yaml
apiVersion: bpa.akraino.org/v1alpha1
kind: Provisioning
metadata:
  name: provisioning-sample
  labels:
    cluster: cluster-xyz
    owner: c2
spec:
  masters:
  - master:
      cpu: 10
      memory: 4Gi
      mac-address: 00:c5:16:05:61:b2
  - master:
      cpu: 10
      memory: 4Gi
      mac-address: 00:c2:14:06:61:b5
  workers:
  - worker:
      cpu: 20
      memory: 8Gi
      mac-address: 00:c6:14:04:61:b2
```

The YAML file above can be used to create a provisioning custom resource, which is an instance of the provisioning CRD described above. The spec.masters field corresponds to the Masters variable in the ProvisioningSpec struct of the provisioning_types.go file, while the spec.workers field corresponds to the Workers variable in the same struct.
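To make the field mapping concrete, the sketch below decodes the JSON form of a spec into the structs using only the standard library. The struct definitions are copied from provisioning_types.go as shown in this document; the `decodeSpec` function is a hypothetical helper, and a real operator would normally rely on the Kubernetes client machinery rather than decoding by hand.

```go
package main

import "encoding/json"

// Master and Worker mirror the structs in provisioning_types.go.
type Master struct {
	CPU        int32  `json:"cpu,omitempty"`
	Memory     string `json:"memory,omitempty"`
	MACaddress string `json:"mac-address,omitempty"`
}

type Worker struct {
	CPU        int32  `json:"cpu,omitempty"`
	Memory     string `json:"memory,omitempty"`
	SRIOV      bool   `json:"sriov,omitempty"`
	QAT        bool   `json:"qat,omitempty"`
	MACaddress string `json:"mac-address,omitempty"`
}

// ProvisioningSpec mirrors the spec section of the custom resource.
type ProvisioningSpec struct {
	Masters []map[string]Master `json:"masters,omitempty"`
	Workers []map[string]Worker `json:"workers,omitempty"`
}

// decodeSpec (hypothetical helper) parses the JSON encoding of a spec.
// The struct tags decide which JSON key fills which Go field.
func decodeSpec(data []byte) (ProvisioningSpec, error) {
	var spec ProvisioningSpec
	err := json.Unmarshal(data, &spec)
	return spec, err
}
```

After decoding, `spec.Masters[0]["master"].CPU` holds the `cpu` value of the first entry under `spec.masters`, and `spec.Workers[0]["worker"]` holds the first entry under `spec.workers`.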

Based on the values above, when the BPA operator gets the baremetal hosts object (Step 5 in Figure 1), it assigns the role of master to hosts with 10 CPUs and 4Gi of memory, and the role of worker to hosts with 20 CPUs and 8Gi of memory.
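A minimal Go sketch of this comparison follows. The `Requirement` and `Hardware` types and the `matches` and `memoryMiB` functions are hypothetical. The sketch assumes an exact match on both values, as the sentence above reads; if the requested values are meant as minimums, the comparisons would become `>=`. It also assumes memory is written with a `Gi` or `Mi` suffix only; a real implementation could parse the full Kubernetes quantity syntax instead.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// Requirement holds the cpu and memory values requested in the CR.
type Requirement struct {
	CPU    int32
	Memory string // e.g. "4Gi"
}

// Hardware holds the values reported for a host (cpu count, ramMebibytes).
type Hardware struct {
	CPUCount     int32
	RAMMebibytes int64
}

// memoryMiB converts "<n>Gi" or "<n>Mi" to mebibytes.
func memoryMiB(q string) (int64, error) {
	switch {
	case strings.HasSuffix(q, "Gi"):
		n, err := strconv.ParseInt(strings.TrimSuffix(q, "Gi"), 10, 64)
		return n * 1024, err
	case strings.HasSuffix(q, "Mi"):
		return strconv.ParseInt(strings.TrimSuffix(q, "Mi"), 10, 64)
	}
	return 0, fmt.Errorf("unsupported memory quantity %q", q)
}

// matches reports whether the host has exactly the requested CPU count
// and memory size (assumption: exact match, not a minimum).
func matches(hw Hardware, req Requirement) (bool, error) {
	want, err := memoryMiB(req.Memory)
	if err != nil {
		return false, err
	}
	return hw.CPUCount == req.CPU && hw.RAMMebibytes == want, nil
}
```

Under the exact-match assumption, a host reporting 72 CPUs and 262144 MiB of RAM, like the one in the listing below, would match neither role.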

```yaml
apiVersion: v1
items:
- apiVersion: metal3.io/v1alpha1
  kind: BareMetalHost
  metadata:
    creationTimestamp: "2019-07-20T01:43:19Z"
    finalizers:
    - baremetalhost.metal3.io
    generation: 2
    name: demo-provisioning
    namespace: metal3
    resourceVersion: "35002"
    selfLink: /apis/metal3.io/v1alpha1/namespaces/metal3/baremetalhosts/demo-provisioning
    uid: 3b22014e-9252-4f15-89a5-67f96e1a07a2
  spec:
    bmc:
      address: ipmi://172.31.1.17
      credentialsName: demo-provisioning-bmc-secret
    description: ""
    externallyProvisioned: false
    hardwareProfile: ""
    image:
      checksum: http://172.22.0.1/images/bionic-server-cloudimg-amd64.md5sum
      url: http://172.22.0.1/images/bionic-server-cloudimg-amd64.qcow2
    online: true
  status:
    errorMessage: ""
    goodCredentials:
      credentials:
        name: demo-provisioning-bmc-secret
        namespace: metal3
      credentialsVersion: "30393"
    hardware:
      cpu:
        arch: x86_64
        clockMegahertz: 3700
        count: 72
        flags:
        - ….
        - xtopology
        - xtpr
        model: Intel(R) Xeon(R) Gold 6140M CPU @ 2.30GHz
      firmware:
        bios:
          date: 11/07/2018
          vendor: Intel Corporation
          version: SE5C620.86B.00.01.0015.110720180833
      hostname: localhost.localdomain
      nics:
      - ip: ""
        mac: 3c:fd:fe:9c:88:60
        model: 0x8086 0x1572
        name: eth0
        pxe: false
        speedGbps: 0
        vlanId: 0
      - ip: 172.22.0.55
        mac: a4:bf:01:64:86:6f
        model: 0x8086 0x37d2
        name: eth5
        pxe: true
        speedGbps: 0
        vlanId: 0
      …
      ramMebibytes: 262144
      storage:
      - hctl: "6:0:0:0"
        model: INTEL SSDSC2KB48
        name: /dev/sda
        rotational: false
        serialNumber: BTYF8290022M480BGN
        sizeBytes: 480103981056
        vendor: ATA
        wwn: "0x55cd2e414fc888c1"
        wwnWithExtension: "0x55cd2e414fc888c1"
      - hctl: "7:0:0:0"
        model: INTEL SSDSC2KB48
        name: /dev/sdb
        rotational: false
        serialNumber: BTYF83160FDB480BGN
        sizeBytes: 480103981056
        vendor: ATA
        wwn: "0x55cd2e414fd7b5a3"
        wwnWithExtension: "0x55cd2e414fd7b5a3"
      systemVendor:
        manufacturer: Intel Corporation
        productName: S2600WFT (SKU Number)
        serialNumber: BQPW84200264
    hardwareProfile: unknown
    lastUpdated: "2019-07-20T02:41:30Z"
    operationalStatus: OK
    poweredOn: false
    provisioning:
      ID: 94fa2511-3cb1-4372-ab42-9c377db8aeca
      image:
        checksum: ""
        url: ""
      state: provisioning
kind: List
metadata:
  resourceVersion: ""
  selfLink: ""
```

In addition, we would also have two other CRDs that the BPA would use to perform its functions: a software CRD and a cluster CRD.

The software CRD will be used to install the required software and drivers and to perform software updates.

Draft Software CRD

```yaml
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  # A CRD name must have the form <plural>.<group>
  name: software-updaters.bpa.akraino.org
spec:
  group: bpa.akraino.org
  names:
    kind: software-updater
    listKind: software-updaterList
    plural: software-updaters
    singular: software-updater
    shortNames:
    - su
  scope: Namespaced
  subresources:
    status: {}
  validation:
    openAPIV3Schema:
      properties:
        apiVersion:
          description:
          type: string
        kind:
          description:
          type: string
        metadata:
          type: object
        spec:
          type: object
        status:
          type: object
  version: v1alpha1
  versions:
  - name: v1alpha1
    served: true
    storage: true
```

The cluster CRD will hold the cluster name and contain the provisioning CR and/or the software CR for the specified cluster.

Draft Cluster CRD

```yaml
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  # A CRD name must have the form <plural>.<group>
  name: clusters.bpa.akraino.org
spec:
  group: bpa.akraino.org
  names:
    kind: cluster
    listKind: clusterList
    plural: clusters
    singular: cluster
    shortNames:
    - cl
  scope: Namespaced
  subresources:
    status: {}
  validation:
    openAPIV3Schema:
      properties:
        apiVersion:
          description:
          type: string
        kind:
          description:
          type: string
        metadata:
          type: object
        spec:
          type: object
        status:
          type: object
  version: v1alpha1
  versions:
  - name: v1alpha1
    served: true
    storage: true
```

This proposal would make it possible to assign roles to nodes based on the features discovered. Currently, the proposal makes use of CPU, memory, SRIOV and QAT. However, because the baremetal hosts list returns much more information about the nodes, this feature could be extended to let the operator assign roles based on more complex requirements such as CPU model. This would feed into Hardware Platform Awareness (HPA).