ICN strives to automate the installation of the local cluster controller to the greatest degree possible, with "zero touch installation" as the goal. Most of the work is done simply by booting up the jump host (Local Controller). Once booted, the controller is fully provisioned and begins to inspect and provision baremetal servers until the cluster is entirely configured.

This document shows, step by step, how to configure the network and describes the deployment architecture for ICN BP.

Apache license v2.0

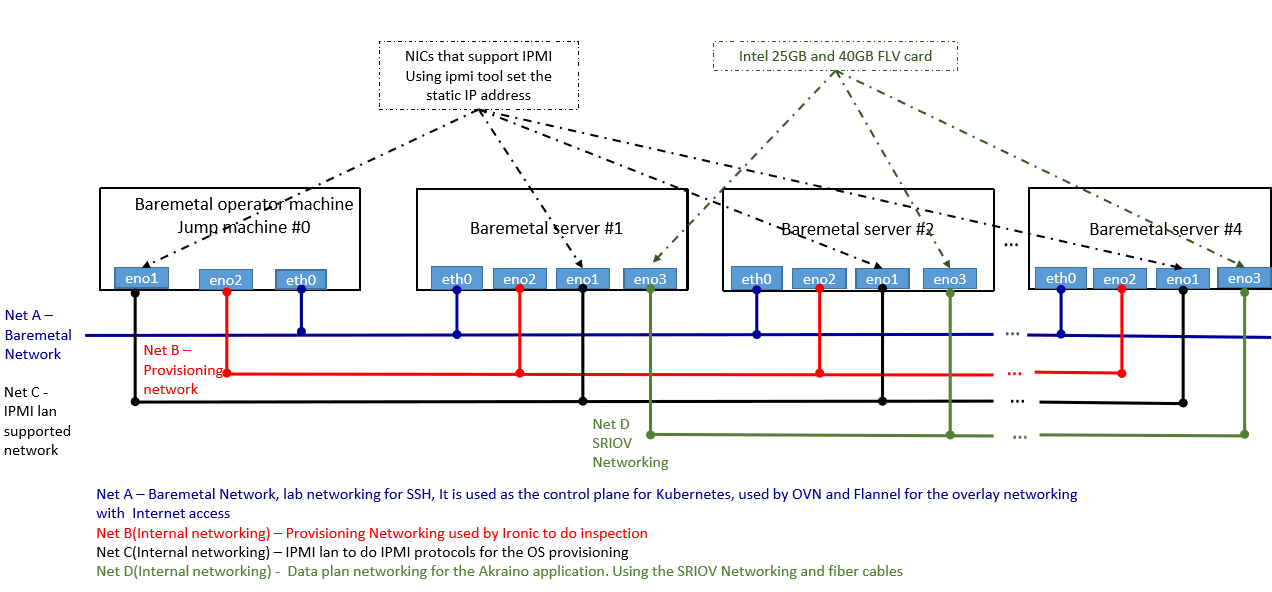

The local controller is provisioned with the Metal3 Baremetal Operator and Ironic, which enable provisioning of baremetal servers. The controller has three network connections to the baremetal servers: network A connects the baremetal servers, network B is a private network used for provisioning the baremetal servers, and network C is the IPMI network, used for control during provisioning. In addition, the baremetal hosts connect to network D, the SRIOV network.

In some deployment models, Net C and Net A can be combined into a single network, but the developer must then manage IP address allocation carefully so that the Net A addresses and the IPMI addresses of the servers do not conflict.
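
When Net A and Net C share one network, the main risk is that a host address and an IPMI address collide or fall outside the shared subnet. The following is a minimal sketch of such a check, not part of ICN; the function name `find_conflicts`, the subnet, and all addresses are hypothetical values chosen for illustration.

```python
import ipaddress


def find_conflicts(subnet, host_addrs, ipmi_addrs):
    """Return problems found when host and IPMI addresses share one subnet."""
    net = ipaddress.ip_network(subnet)
    problems = []
    # Every address must belong to the shared subnet.
    for label, addrs in (("host", host_addrs), ("ipmi", ipmi_addrs)):
        for addr in addrs:
            if ipaddress.ip_address(addr) not in net:
                problems.append(f"{label} address {addr} is outside {net}")
    # No address may be used by both a host and an IPMI interface.
    for addr in sorted(set(host_addrs) & set(ipmi_addrs)):
        problems.append(f"address {addr} is used by both a host and an IPMI interface")
    return problems


# Hypothetical example values, for illustration only.
for problem in find_conflicts(
    "10.10.10.0/24",
    ["10.10.10.21", "10.10.10.22"],
    ["10.10.10.11", "10.10.10.22"],
):
    print(problem)
```

An empty result means the two address sets can coexist on the combined network; any entry in the list points at an address that needs to be reassigned.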

The ICN infrastructure has two main components: the local controller and the compute K8s cluster.

The local controller resides on the jump server and runs the Metal3 operator, the Binary provisioning agent operator and the Binary provisioning agent REST API controller.

The compute K8s cluster is installed on the baremetal nodes and actually runs the workloads.

An all-in-one VM-based deployment requires a server with at least 32 GB of RAM and 32 CPUs.

Recommended hardware: servers with 64 GB of memory and 32 CPUs, a QAT card and SRIOV network cards.
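
A quick way to compare a machine against the all-in-one minimum is sketched below. This is an illustrative check, not ICN's own precondition logic; the function names are hypothetical, counting logical CPUs is an assumed reading of "32 CPU", and the memory lookup assumes a Linux host.

```python
import os


def check_hardware(cpus, memory_gb, min_cpus=32, min_memory_gb=32):
    """Return a list of shortfalls against the stated minimum (empty if none)."""
    shortfalls = []
    if cpus < min_cpus:
        shortfalls.append(f"CPUs: have {cpus}, need at least {min_cpus}")
    if memory_gb < min_memory_gb:
        shortfalls.append(f"memory: have {memory_gb} GB, need at least {min_memory_gb} GB")
    return shortfalls


def local_resources():
    """Return (logical CPU count, physical memory in GB) of this Linux host."""
    cpus = os.cpu_count() or 0
    memory_bytes = os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES")
    return cpus, memory_bytes / 1024 ** 3


if __name__ == "__main__":
    cpus, memory_gb = local_resources()
    for line in check_hardware(cpus, round(memory_gb)) or ["minimum requirements met"]:
        print(line)
```

Memory is rounded to whole gigabytes before comparison because the kernel usually reports slightly less than the installed amount.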

The jump server must be pre-installed with Ubuntu 18.04.

No Prerequisites for ICN BP

The jump server must be installed with Ubuntu 18.04 server and have three distinct networks, as shown in figure 1.

Local controller: at least three network interfaces.

Baremetal hosts: four network interfaces, including one IPMI interface.

Four or more hubs, with cabling, to connect four networks.

| Hostname | CPU Model | Memory | Storage | 1GbE: NIC#, VLAN (connected to Extreme 480 switch) | 10GbE: NIC#, VLAN, Network (connected to IZ1 switch) |
|---|---|---|---|---|---|
| Jump | Intel 2xE5-2699 | 64GB | 3TB (SATA) | IF0: VLAN 110 (DMZ) | IF2: VLAN 112 (Private) |

The ICN R2 release supports Ubuntu 18.04. ICN BP installs all required software during "make install".

Please refer to figure 1 for all the network requirements of ICN BP.

Please make sure you have three distinct networks, Net A, Net B and Net C, as shown in figure 1. The local controller uses Net B and Net C to provision the operating system on the baremetal servers.

(Tested as below)

| Hostname | CPU Model | Memory | Storage | 1GbE: NIC#, VLAN (connected to Extreme 480 switch) | 10GbE: NIC#, VLAN, Network (connected to IZ1 switch) |
|---|---|---|---|---|---|
| node1 | Intel 2xE5-2699 | 64GB | 3TB (SATA) | IF0: VLAN 110 (DMZ) | IF2: VLAN 112 (Private) |
| node2 | Intel 2xE5-2699 | 64GB | 3TB (SATA) | IF0: VLAN 110 (DMZ) | IF2: VLAN 112 (Private) |
| node3 | Intel 2xE5-2699 | 64GB | 3TB (SATA) | IF0: VLAN 110 (DMZ) | IF2: VLAN 112 (Private) |

The local controller installs all the software on the compute servers, from the OS up to the software required to bring up the Kubernetes cluster.

ICN BP checks all the preconditions and execution requirements for both baremetal and VM deployments.

Installation is a two-step process, and everything starts with one command: "make install".

The user must provide the IPMI information of the edge servers to be connected to the local controller by editing the sample node JSON file icn/deploy/metal3/scripts/nodes.json.sample, as shown below. To add more nodes, add another entry to the "nodes" array.

    {
      "nodes": [
        {
          "name": "edge01-node01",
          "ipmi_driver_info": {
            "username": "admin",
            "password": "admin",
            "address": "10.10.10.11"
          },
          "os": {
            "image_name": "bionic-server-cloudimg-amd64.img",
            "username": "ubuntu",
            "password": "mypasswd"
          }
        },
        {
          "name": "edge01-node02",
          "ipmi_driver_info": {
            "username": "admin",
            "password": "admin",
            "address": "10.10.10.12"
          },
          "os": {
            "image_name": "bionic-server-cloudimg-amd64.img",
            "username": "ubuntu",
            "password": "mypasswd"
          }
        }
      ]
    }
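
Because a malformed inventory only surfaces once provisioning starts, it can help to sanity-check the file first. The sketch below is an illustration, not an ICN tool: the function name `validate_nodes` is hypothetical, and treating every key in the sample as mandatory is an assumption.

```python
import ipaddress
import json

# Keys taken from the sample file; assuming all of them are mandatory.
REQUIRED = {
    "ipmi_driver_info": ("username", "password", "address"),
    "os": ("image_name", "username", "password"),
}


def validate_nodes(text):
    """Return a list of problems found in a nodes.json document."""
    problems = []
    nodes = json.loads(text).get("nodes", [])
    if not nodes:
        problems.append('"nodes" is missing or empty')
    seen = set()
    for index, node in enumerate(nodes):
        name = node.get("name", f"<node {index}>")
        if "name" not in node:
            problems.append(f"{name}: missing name")
        for section, keys in REQUIRED.items():
            for key in keys:
                if key not in node.get(section, {}):
                    problems.append(f"{name}: missing {section}.{key}")
        address = node.get("ipmi_driver_info", {}).get("address")
        if address is not None:
            try:
                ipaddress.ip_address(address)
            except ValueError:
                problems.append(f"{name}: invalid IPMI address {address!r}")
            # Two nodes must not point at the same BMC.
            if address in seen:
                problems.append(f"{name}: duplicate IPMI address {address}")
            seen.add(address)
    return problems


sample = """
{"nodes": [
  {"name": "edge01-node01",
   "ipmi_driver_info": {"username": "admin", "password": "admin",
                        "address": "10.10.10.11"},
   "os": {"image_name": "bionic-server-cloudimg-amd64.img",
          "username": "ubuntu", "password": "mypasswd"}}
]}
"""
print(validate_nodes(sample))
```

To check a real file, read it and pass its contents to `validate_nodes`; an empty list means no problems were found under the assumptions above.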

The network configuration file, "user_config.sh", is located in the icn parent folder.

    #!/bin/bash

    #Local controller - Bootstrap cluster DHCP connection
    #BS_DHCP_INTERFACE defines the interface to which the ICN DHCP deployment will bind
    #e.g. BS_DHCP_INTERFACE=${BS_DHCP_INTERFACE:-"ens513f0"}
    BS_DHCP_INTERFACE=${BS_DHCP_INTERFACE:-}

    #BS_DHCP_INTERFACE_IP defines the IPAM for the ICN DHCP to be managed.
    #e.g. BS_DHCP_INTERFACE_IP=${BS_DHCP_INTERFACE_IP:-"172.31.1.1/24"}
    BS_DHCP_INTERFACE_IP=${BS_DHCP_INTERFACE_IP:-}

    #Ironic Metal3 settings for provisioning network
    #Interface to which the Ironic provisioning network is connected
    #e.g. IRONIC_INTERFACE=${IRONIC_INTERFACE:-"enp4s0f1"}
    IRONIC_INTERFACE=${IRONIC_INTERFACE:-}

    #Ironic Metal3 setting for IPMI LAN Network
    #Interface to which Ironic IPMI LAN should bind
    #e.g. IRONIC_IPMI_INTERFACE=${IRONIC_IPMI_INTERFACE:-"enp4s0f0"}
    IRONIC_IPMI_INTERFACE=${IRONIC_IPMI_INTERFACE:-}

    #Interface IP for the IPMI LAN; ICN verifies the LAN connection
    #e.g. IRONIC_IPMI_INTERFACE_IP=${IRONIC_IPMI_INTERFACE_IP:-"10.10.110.20"}
    IRONIC_IPMI_INTERFACE_IP=${IRONIC_IPMI_INTERFACE_IP:-}
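
The address values in this file follow two different notations: BS_DHCP_INTERFACE_IP is written in CIDR form, while IRONIC_IPMI_INTERFACE_IP is a plain address. The sketch below illustrates a check of the chosen values before installation. It is not part of ICN; the function name `check_user_config` is hypothetical, requiring every variable to be set is an assumption, and the example values are the ones from the comments in the file.

```python
import ipaddress

INTERFACE_VARS = ("BS_DHCP_INTERFACE", "IRONIC_INTERFACE", "IRONIC_IPMI_INTERFACE")


def check_user_config(settings):
    """Return a list of problems in a dict of user_config.sh values."""
    problems = [f"{name} is not set" for name in INTERFACE_VARS if not settings.get(name)]
    # BS_DHCP_INTERFACE_IP is expected in CIDR form, e.g. 172.31.1.1/24.
    dhcp_ip = settings.get("BS_DHCP_INTERFACE_IP", "")
    try:
        if "/" not in dhcp_ip:
            raise ValueError("missing prefix length")
        ipaddress.ip_interface(dhcp_ip)
    except ValueError as err:
        problems.append(f"BS_DHCP_INTERFACE_IP is not a valid CIDR address: {err}")
    # IRONIC_IPMI_INTERFACE_IP is expected as a plain address, e.g. 10.10.110.20.
    ipmi_ip = settings.get("IRONIC_IPMI_INTERFACE_IP", "")
    try:
        ipaddress.ip_address(ipmi_ip)
    except ValueError as err:
        problems.append(f"IRONIC_IPMI_INTERFACE_IP is not a valid address: {err}")
    return problems


# Values copied from the "e.g." comments in user_config.sh.
example = {
    "BS_DHCP_INTERFACE": "ens513f0",
    "BS_DHCP_INTERFACE_IP": "172.31.1.1/24",
    "IRONIC_INTERFACE": "enp4s0f1",
    "IRONIC_IPMI_INTERFACE": "enp4s0f0",
    "IRONIC_IPMI_INTERFACE_IP": "10.10.110.20",
}
print(check_user_config(example))
# The network that the DHCP interface address belongs to.
print(ipaddress.ip_interface(example["BS_DHCP_INTERFACE_IP"]).network)
```

The interface names themselves are not checked here, since they depend on the hardware of the jump server.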

After configuring the node inventory file and the settings file, run "make install" from the ICN parent directory.

Virtual deployment is intended for development environments. It uses the Metal3 virtual deployment to create VMs with PXE boot. The VM Ansible scripts create the node inventory file in /opt/ironic. No settings are required from the user for a virtual deployment.