
The ICN blueprint family intends to address the deployment of workloads across a large number of edge sites and in public clouds, using K8S as the resource orchestrator at each site and ONAP-K8S as the service-level orchestrator across sites. ICN also intends to integrate the infrastructure orchestration needed to bring up a site using bare-metal servers. Infrastructure orchestration, which is the focus of this page, needs to ensure that the infrastructure software required on edge servers is installed on a per-site basis but controlled from a central dashboard. Infrastructure orchestration is expected to do the following:

...

- SDWAN, Customer Edge, Edge Clouds – deploy VNFs/CNFs and applications as micro-services (completed in the R2 release using containerized OpenWRT SDWAN)

- DAaaS - Distributed Analytics as a Service

- vFW

- EdgeX Foundry

- CDN - Content Delivery Network

Where on the Edge

Today, considerable effort goes into keeping the cloud-native control plane close to the workload in order to reduce latency and to improve performance and fault tolerance. A single orchestration engine should be lightweight and should manage the resources of a cluster of compute nodes, where the customer can deploy multiple network functions such as VNFs, CNFs, micro-services, and Function as a Service (FaaS), and can scale the orchestration infrastructure according to demand.

...

Flows & Sequence Diagrams

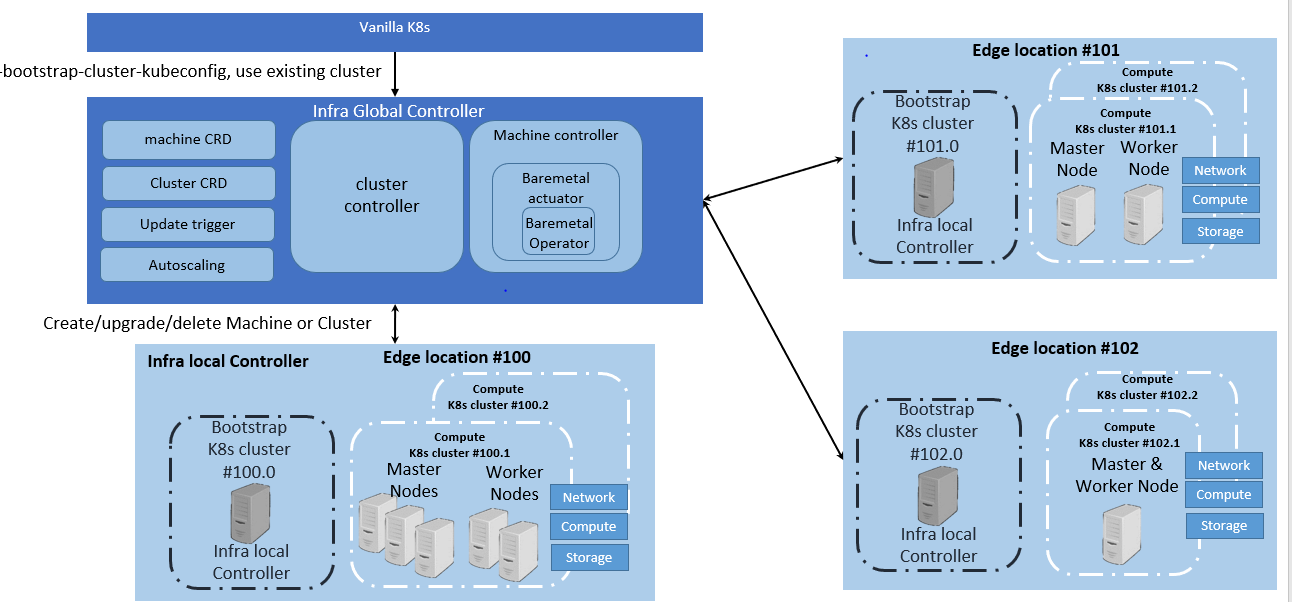

Each edge location has an infra local controller. The controller hosts a bootstrap cluster, which contains all the components required to boot up the compute cluster.

Platform Architecture

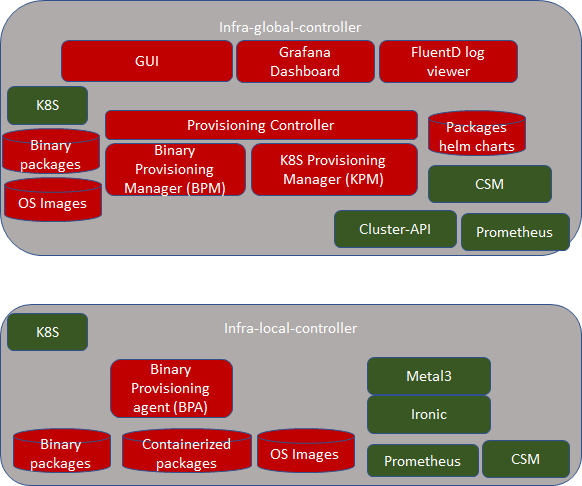

Infra-global-controller:

...

Software Platform Architecture

...

Local Controller: Kubeadm, Metal3, Baremetal Operator, Ironic, Prometheus, ONAP

...

The R2 release covers only the infra local controller:

Baremetal Operator

...

Kubernetes deployment (KUD) is a project that uses Kubespray to bring up a Kubernetes deployment and some addons on a provisioned machine. As it is already part of ONAP, it can be effectively reused to deploy the K8s App components (as shown in fig. II), the NFV Specific components, and the NFVi SDN controller in the edge cluster. In the R2 release, KuD will be used to deploy K8s addons such as Virtlet, OVN, and NFD, and Intel device plugins such as SRIOV and QAT, in the edge location (as shown in figure I). In the R3 release, KuD will evolve into the "ICN Operator" to install all K8s addons. For more information on the architecture of KuD, please find the information here.

...

NFV Specific components: This block is responsible for K8s compute management. It supports both software and hardware acceleration (including network acceleration) through CPU pinning and device plugins such as QAT and SRIOV.

SDN Controller components: This block is responsible for managing the SDN controller and for providing additional features such as Service Function Chaining (SFC) and the Network Route manager.

...

The ICN project injects user data into each server for network configuration and for remote command execution over ssh, and maintains a common, secure mechanism for provisioning all the servers. Each local controller maintains IP address management for its edge location. For more information, refer to Metal3 Baremetal Operator in ICN stack.
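As a rough illustration of per-site IP address management, the sketch below hands out addresses from one subnet per edge location. The source does not describe how the local controller implements this, so the class `SiteIpam`, its method names, and the example subnet are all hypothetical.

```python
import ipaddress


class SiteIpam:
    """Hypothetical per-site address pool; not the ICN implementation."""

    def __init__(self, cidr):
        # Usable host addresses of the site subnet, lowest first.
        self._free = list(ipaddress.ip_network(cidr).hosts())
        self._leases = {}  # hostname -> IP address (as a string)

    def allocate(self, hostname):
        """Return the address leased to hostname, leasing a new one if needed."""
        if hostname in self._leases:
            return self._leases[hostname]
        if not self._free:
            raise RuntimeError("address pool exhausted")
        ip = str(self._free.pop(0))
        self._leases[hostname] = ip
        return ip

    def release(self, hostname):
        """Return the address of hostname to the pool."""
        ip = self._leases.pop(hostname)
        self._free.append(ipaddress.ip_address(ip))
        self._free.sort()


if __name__ == "__main__":
    ipam = SiteIpam("192.168.10.0/30")  # example subnet, an assumption
    print(ipam.allocate("node1"))
    print(ipam.allocate("node2"))
```

Keeping one pool object per edge location mirrors the statement that each local controller manages addresses only for its own site.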

BPA Operator: Itohan Ukponmwan ramamani yeleswarapu

ICN uses the BPA operator to install KUD. It can install KUD either on bare-metal hosts or on virtual machines. The BPA operator is also used to install software on the machines after KUD has been installed successfully.

...

When a new software CR is created, the reconcile loop is triggered. On seeing that it is a software CR, the BPA operator looks for a configmap whose cluster label matches the one in the software CR. If it finds one, it reads the IP addresses of all the master and worker nodes, connects to each host over ssh, and installs the required software. If no matching configmap is found, it reports an error.
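The software CR flow described above can be sketched as follows. This is a minimal model rather than the BPA operator's code: the classes `ConfigMap` and `SoftwareCR`, their field names, and the `install` callback (which stands in for the ssh step) are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ConfigMap:
    """Stand-in for the per-cluster configmap (shape is an assumption)."""
    labels: dict  # e.g. {"cluster": "edge-a"}
    data: dict    # host name -> IP address of each master and worker node


@dataclass
class SoftwareCR:
    """Stand-in for a software custom resource (field names are hypothetical)."""
    cluster: str
    packages: list


class ClusterNotFound(Exception):
    """Raised when no configmap carries the cluster label named in the CR."""


def reconcile_software(cr, configmaps, install):
    """Handle one software CR and return the IP addresses that were visited.

    install(ip, packages) replaces "ssh into the host and install",
    so the sketch runs without a real cluster.
    """
    for cm in configmaps:
        if cm.labels.get("cluster") == cr.cluster:
            ips = list(cm.data.values())
            for ip in ips:
                install(ip, cr.packages)
            return ips
    raise ClusterNotFound(f"no configmap labelled cluster={cr.cluster}")


if __name__ == "__main__":
    maps = [ConfigMap({"cluster": "edge-a"},
                      {"master-1": "10.0.0.10", "worker-1": "10.0.0.11"})]
    cr = SoftwareCR("edge-a", ["curl"])
    reconcile_software(cr, maps, lambda ip, pkgs: print("install", pkgs, "on", ip))
```

Passing the install step in as a callback keeps the label lookup and the error path testable on their own.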

Refer

BPA Rest Agent: Enyinna Ochulor

The BPA Rest Agent provides a straightforward RESTful API that exposes three resources: binary images, container images, and OS images. It uses MinIO for object storage and MongoDB for metadata.
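The split between object storage and metadata can be pictured with the in-memory sketch below. It does not call MinIO or MongoDB and does not reproduce the agent's actual routes or schema; `ImageCatalog`, the resource type names, and the metadata fields are hypothetical.

```python
import hashlib
import uuid

# Hypothetical names for the three resource types the agent exposes.
IMAGE_KINDS = ("binary_images", "container_images", "os_images")


class ImageCatalog:
    """Keeps image bytes and image metadata in two separate stores."""

    def __init__(self):
        self.objects = {}   # stands in for the object store (MinIO in ICN)
        self.metadata = {}  # stands in for the metadata database (MongoDB in ICN)

    def create(self, kind, name, payload):
        """Store payload bytes and return the metadata record describing them."""
        if kind not in IMAGE_KINDS:
            raise ValueError(f"unknown resource type: {kind}")
        image_id = uuid.uuid4().hex
        object_key = f"{kind}/{image_id}"
        self.objects[object_key] = payload
        self.metadata[image_id] = {
            "id": image_id,
            "kind": kind,
            "name": name,
            "size": len(payload),
            "sha256": hashlib.sha256(payload).hexdigest(),
            "object_key": object_key,
        }
        return dict(self.metadata[image_id])

    def download(self, image_id):
        """Look up the metadata first, then fetch the bytes it points to."""
        return self.objects[self.metadata[image_id]["object_key"]]

    def list_by_kind(self, kind):
        """Return the metadata records of one resource type."""
        return [dict(m) for m in self.metadata.values() if m["kind"] == kind]


if __name__ == "__main__":
    catalog = ImageCatalog()
    record = catalog.create("os_images", "ubuntu-18.04", b"example image bytes")
    print(record["name"], record["size"])
```

Listing and searching touch only the small metadata records, while the large image payloads are read only on download.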

...

Kubernetes deployment (KUD) is a project that uses Kubespray to bring up a Kubernetes deployment and some addons on a provisioned machine. As it is already part of ONAP, it can be effectively reused to deploy the K8s App components (as shown in fig. II), the NFV Specific components, and the NFVi SDN controller in the edge cluster. In the R2 release, KuD will be used to deploy K8s addons such as Virtlet, OVN, and NFD, and Intel device plugins such as SRIOV and QAT, in the edge location (as shown in figure I). In the R3 release, KuD will evolve into the "ICN Operator" to install all K8s addons. For more information on the architecture of KuD, please find the information here.

ONAP4K8s: Kuralamudhan Ramakrishnan

SDWAN:

ONAP is used for service orchestration in the ICN BP. A lightweight golang version of ONAP is developed as part of the Multicloud-k8s project in the ONAP community. The ICN BP developed a containerized, multi-cluster KUD that installs onap4k8s as a plugin in any cluster provisioned by the BPA operator. ONAP4k8s is used to install the EdgeX Foundry workload and the vFW application in any edge location.

Openness: Chenjie Xu

SDEWAN:

The SDWAN module works as a software-defined router that can be used to define the rules applied when connecting to the external internet. It is implemented as a CNF instead of a VNF for better performance and more effective deployment. It leverages OpenWRT (an open-source project based on Linux and used on embedded devices to route network traffic) and the mwan3 package (for WAN interface management) to implement its functionality. Detailed information can be found at SDWAN Module Design and at ICN - SDEWAN.

SDEWAN controller: Huifeng Le Cheng Li Ruoyu Ying

Cloud Storage:

Cloud Storage is used by the BPA Rest Agent to provide a storage service for image objects: binary, container, and operating system images. There are two candidate solutions, MinIO and GridFS. With cloud-native design and data reliability in mind, we propose to use MinIO, a cloud-native object store (listed in the CNCF landscape) that is compatible with the Amazon S3 API and provides client libraries for several languages. It is also easy to deploy in Kubernetes and scales out flexibly. MinIO also provides the storage service for the HTTP Server. Because MinIO needs a volume exported in the bootstrap cluster, local-storage is a simple solution, but it does not protect the data reliably; we will switch to a reliable volume provided by Ceph CSI RBD in the next release. Detailed information can be found at: Cloud Storage Design

...

| Components | Link | Akraino Release target |
|---|---|---|
| Provision stack - Metal3 | | R2 |
| Host Operating system | Ubuntu 18.04 | R2 |
| Quick Access Technology (QAT) drivers | Intel® C627 Chipset - https://ark.intel.com/content/www/us/en/ark/products/97343/intel-c627-chipset.html | R2 |
| NIC drivers | | R2 |
| ONAP | | R2 |
| Workloads | OpenWRT SDWAN - https://openwrt.org/ | R2 |
| KUD | | R2 |
| Kubespray | | R2 |
| K8s | | R2 |
| Docker | https://github.com/docker - 18.09 | R2 |
| Virtlet | | R2 |
| SDN - OVN | | R2 |
| OpenvSwitch | https://github.com/openvswitch/ovs - 2.10.1 | R2 |
| Ansible | https://github.com/ansible/ansible - 2.7.10 | R2 |
| Helm | https://github.com/helm/helm - 2.9.1 | R2 |
| Istio | https://github.com/istio/istio - 1.0.3 | R2 |
| Rook/Ceph | | R2 |
| MetalLB | | R2 |
| Device Plugins | https://github.com/intel/intel-device-plugins-for-kubernetes - QAT, SRIOV | R2 |
| Node Feature Discovery | | R2 |
| CNI | https://github.com/coreos/flannel/ - release tag v0.11.0; https://github.com/containernetworking/cni - release tag v0.7.0; https://github.com/containernetworking/plugins - release tag v0.8.1; https://github.com/containernetworking/cni#3rd-party-plugins - Multus v3.3tp, SRIOV CNI v2.0 (with SRIOV Network Device plugin) | R2 |

Hardware and Software Management

Software Management

ICN BP R2 Timelines

Hardware Management

| Hostname | CPU Model | Memory | Storage | 1GbE: NIC#, VLAN (connected to Extreme 480 switch) | 10GbE: NIC#, VLAN, Network (connected to IZ1 switch) |
|---|---|---|---|---|---|
| Jump | 2xE5-2699 | 64GB | 3TB (SATA) | IF0: VLAN 110 (DMZ) | IF2: VLAN 112 (Private) |
| node1 | 2xE5-2699 | 64GB | 3TB (SATA) | IF0: VLAN 110 (DMZ) | IF2: VLAN 112 (Private) |
| node2 | 2xE5-2699 | 64GB | 3TB (SATA) | IF0: VLAN 110 (DMZ) | IF2: VLAN 112 (Private) |
| node3 | 2xE5-2699 | 64GB | 3TB (SATA) | IF0: VLAN 110 (DMZ) | IF2: VLAN 112 (Private) |
| node4 | 2xE5-2699 | 64GB | 3TB (SATA) | IF0: VLAN 110 (DMZ) | IF2: VLAN 112 (Private) |
| node5 | 2xE5-2699 | 64GB | 3TB (SATA) | IF0: VLAN 110 (DMZ) | IF2: VLAN 112 (Private) |

...