Introduction

The R3 release will evaluate the throughput and packet forwarding performance of the Mellanox BlueField SmartNIC card.

A DPDK-based Open vSwitch (OVS-DPDK) is used as the virtual switch, and the network traffic is virtualized with VXLAN encapsulation.
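
As a rough illustration of what VXLAN encapsulation adds to each packet, the following Python sketch packs and unpacks the 8-byte VXLAN header described in RFC 7348. The function names and the VNI value 100 are hypothetical and chosen only for illustration; they are not taken from the test setup. The overhead constant assumes an untagged IPv4 outer header.

```python
import struct

VXLAN_UDP_PORT = 4789        # standard VXLAN destination port
VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field is valid

# Outer Ethernet (14) + outer IPv4 (20) + UDP (8) + VXLAN (8) bytes.
# Assumption: untagged IPv4 outer header.
VXLAN_OVERHEAD_BYTES = 14 + 20 + 8 + 8


def pack_vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 0: flags in the top byte, 24 reserved bits.
    # Word 1: VNI in the top 24 bits, 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def unpack_vni(header: bytes) -> int:
    """Extract the VNI from a VXLAN header, checking the I flag."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI flag is not set")
    return vni_word >> 8


if __name__ == "__main__":
    header = pack_vxlan_header(100)  # hypothetical VNI
    print(header.hex())              # 0800000000006400
    print(unpack_vni(header), VXLAN_OVERHEAD_BYTES)
```

With hardware offload, this encapsulation and decapsulation work is expected to be done by the NIC rather than by the PMD threads.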

Currently, the community version of OVS-DPDK is considered experimental and not yet mature. It supports only "partial offload", which cannot exploit the full performance advantage of Mellanox NICs. The OVS-DPDK used here is therefore a fork of the community Open vSwitch, for which we developed our own offload code that enables full hardware offload through the DPDK rte_flow APIs.

We plan to open source this OVS-DPDK fork. More details will be provided in future documentation.

Akraino Test Group Information

Overall Test Architecture

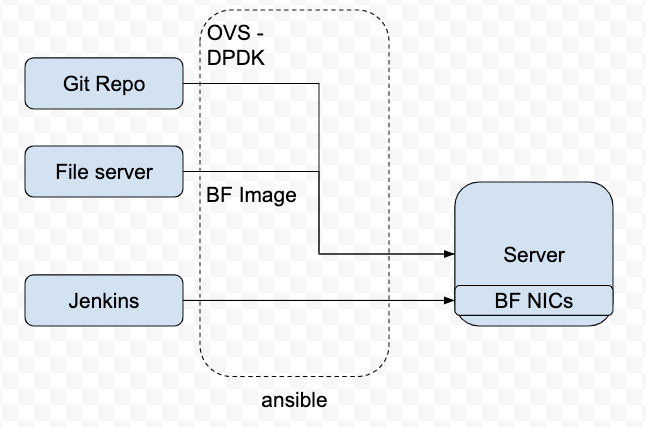

To deploy the test architecture, we use a private Jenkins server and an Intel server equipped with a BlueField v1 SmartNIC.

We use Ansible to automatically set up the filesystem image and install OVS-DPDK on the SmartNICs.

The File Server is a simple Nginx-based web server that stores the BF drivers and the FS image.

The Git repo is our own repository, which hosts the OVS-DPDK and DPDK code.

Jenkins uses the Ansible plugin to download the BF drivers and FS image to the test server and set up the environment according to the Ansible playbook.

| Image | Download link |
|---|---|
| BlueField-2.5.1.11213.tar.xz | https://www.mellanox.com/products/software/bluefield |
| core-image-full-dev-BlueField-2.5.1.11213.2.5.3.tar.xz | https://www.mellanox.com/products/software/bluefield |
| mft-4.14.0-105-x86_64-deb.tgz | |
| MLNX_OFED_LINUX-5.0-2.1.8.0-debian8.11-x86_64.tgz | |

Test Bed

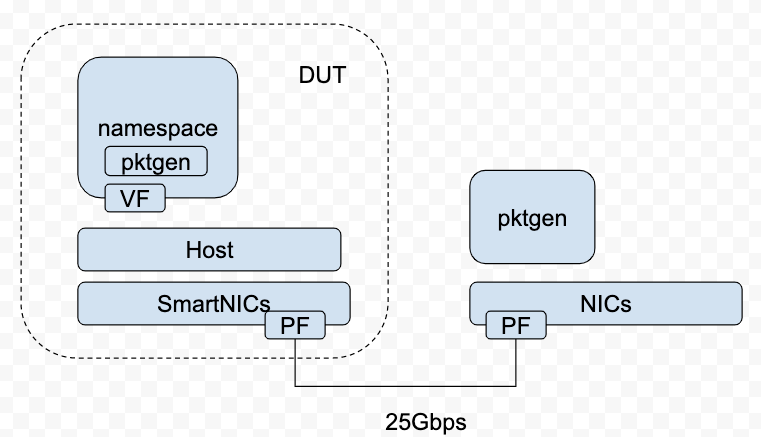

The testbed setup is shown in the diagram above. DUT stands for Device Under Test.

| Type | Description |
|---|---|
| SmartNICs | BlueField v1, 25 Gbps |
| DPDK | version 19.11 |
| vSwitch | OVS-DPDK 2.12 with VXLAN DECAP/ENCAP offload enabled |

OVS-DPDK Setup

    root@bluefield:/home/ovs-dpdk# ovs-vsctl show
    2dccd148-526c-44a5-9351-67b04c5e2da4
        Bridge br-int
            datapath_type: netdev
            Port vxlan-vtp
                Interface vxlan-vtp
                    type: vxlan
                    options: {dst_port="4789", key=flow, local_ip="192.168.1.1", remote_ip=flow, tos=inherit}
            Port br-int
                Interface br-int
                    type: internal
            Port pf1hpf
                Interface pf1hpf
                    type: dpdk
                    options: {dpdk-devargs="class=eth,mac=ae:d8:8a:c5:22:fb"}
        Bridge br-ex
            datapath_type: netdev
            Port br-ex
                Interface br-ex
                    type: internal
            Port p1
                Interface p1
                    type: dpdk
                    options: {dpdk-devargs="class=eth,mac=98:03:9b:af:7b:0b"}
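
The bridge layout in the `ovs-vsctl show` output could plausibly be recreated with standard `ovs-vsctl` commands. The Python sketch below only builds and prints those command lines; it does not execute them. The helper names are hypothetical, and the exact commands used by the Ansible playbook are not given in this document, so this is an assumption about how such a setup is typically created, with all values copied from the outputs in this section.

```python
import shlex


def global_config_cmd():
    # Values taken from the other_config column of the Open_vSwitch table.
    return ["ovs-vsctl", "--no-wait", "set", "Open_vSwitch", ".",
            "other_config:dpdk-init=true",
            "other_config:hw-offload=true",
            "other_config:dpdk-extra=-w 03:00.1,representor=[0,65535] --legacy-mem"]


def bridge_cmd(bridge):
    # A userspace (netdev) datapath is required for DPDK ports.
    return ["ovs-vsctl", "add-br", bridge, "--",
            "set", "Bridge", bridge, "datapath_type=netdev"]


def vxlan_port_cmd(bridge, port, local_ip):
    return ["ovs-vsctl", "add-port", bridge, port, "--",
            "set", "Interface", port, "type=vxlan",
            f"options:local_ip={local_ip}", "options:remote_ip=flow",
            "options:key=flow", "options:dst_port=4789", "options:tos=inherit"]


def dpdk_port_cmd(bridge, port, mac):
    return ["ovs-vsctl", "add-port", bridge, port, "--",
            "set", "Interface", port, "type=dpdk",
            f"options:dpdk-devargs=class=eth,mac={mac}"]


def build_commands():
    """Return the command lines in a workable order (dry run only)."""
    return [
        global_config_cmd(),
        bridge_cmd("br-int"),
        vxlan_port_cmd("br-int", "vxlan-vtp", "192.168.1.1"),
        dpdk_port_cmd("br-int", "pf1hpf", "ae:d8:8a:c5:22:fb"),
        bridge_cmd("br-ex"),
        dpdk_port_cmd("br-ex", "p1", "98:03:9b:af:7b:0b"),
    ]


if __name__ == "__main__":
    for cmd in build_commands():
        print(shlex.join(cmd))  # shell-quoted, ready to review
```

Printing the commands instead of running them keeps the sketch safe to try on a machine without Open vSwitch installed.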

    root@bluefield:/home/ovs-dpdk# ovs-vsctl list open_vswitch
    _uuid : 2dccd148-526c-44a5-9351-67b04c5e2da4
    bridges : [22334686-733a-445e-9130-a42009a3586e, 38af610d-01f7-497d-878b-c6b6a44abf6a]
    cur_cfg : 10
    datapath_types : [netdev, system]
    datapaths : {}
    db_version : []
    dpdk_initialized : true
    dpdk_version : "DPDK 19.11.0"
    external_ids : {}
    iface_types : [dpdk, dpdkr, dpdkvhostuser, dpdkvhostuserclient, erspan, geneve, gre, internal, ip6erspan, ip6gre, lisp, patch, stt, system, tap, vxlan]
    manager_options : []
    next_cfg : 10
    other_config : {dpdk-extra="-w 03:00.1,representor=[0,65535] --legacy-mem ", dpdk-init="true", hw-offload="true"}
    ovs_version : []
    ssl : []
    statistics : {cpu="16", file_systems="/,13521220,3918464 /data,243823,2064 /boot,357176,61104", load_average="1.41,1.37,1.36", memory="16337652,5589928,1707640,0,0", process_ovs-vswitchd="5959388,256352,11515170,0,11484792,11484792,7", process_ovsdb-server="12620,6260,13190,0,68233832,68233832,6"}
    system_type : []
    system_version : []
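
Before a test run it can be useful to confirm from this output that DPDK is initialized and hardware offload is switched on. The following Python sketch parses a saved copy of the `ovs-vsctl list open_vswitch` output; the function names are hypothetical, and the sample text is an excerpt of the output shown in this section.

```python
import re

# Excerpt of the `ovs-vsctl list open_vswitch` output from this page.
SAMPLE = '''\
dpdk_initialized : true
dpdk_version : "DPDK 19.11.0"
other_config : {dpdk-extra="-w 03:00.1,representor=[0,65535] --legacy-mem ", dpdk-init="true", hw-offload="true"}
'''


def parse_record(text):
    """Split 'column : value' lines into a dict of raw strings."""
    record = {}
    for line in text.splitlines():
        # Split on the first colon only; values may contain colons.
        key, sep, value = line.partition(":")
        if sep:
            record[key.strip()] = value.strip()
    return record


def parse_map(value):
    """Parse an OVSDB map such as {a="1", b="2"} with quoted values."""
    return dict(re.findall(r'([\w-]+)="([^"]*)"', value))


def offload_configured(text):
    """True if DPDK is initialized and hw-offload is set to true."""
    record = parse_record(text)
    other_config = parse_map(record.get("other_config", ""))
    return (record.get("dpdk_initialized") == "true"
            and other_config.get("hw-offload") == "true")


if __name__ == "__main__":
    print(offload_configured(SAMPLE))
```

This check only confirms the configuration flags. It does not prove that individual flows were actually offloaded to the NIC.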

Traffic Generator

DPDK pktgen is used as the traffic generator.

Test Results

Packet Forwarding Performance Results

| OVS-DPDK | OF rules | Traffic | pktgen rate | Received rate (hw offload) | Received rate (no hw offload) | PMD idle cycles with hw offload | PMD idle cycles without hw offload |
|---|---|---|---|---|---|---|---|
| 1 PMD | Direct forwarding without match: "in_port=vxlan-vtp, actions=output:pf1hpf" | Single TCP flow with VXLAN encapsulation | 24.6 Mpps | 23.9 Mpps | 0.745 Mpps (745156 pps) | 99% | 0% |
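
To make the comparison in the table easier to read, the short Python sketch below derives the delivery ratio and the speedup from the reported figures. The variable and function names are hypothetical; the numbers are the ones in the table.

```python
# Figures from the results table (packets per second).
PKTGEN_PPS = 24.6e6      # offered load from pktgen
RX_HW_PPS = 23.9e6       # received with hardware offload
RX_NO_HW_PPS = 745_156   # received without hardware offload


def delivery_ratio(received_pps, offered_pps):
    """Fraction of the offered packets that were forwarded."""
    return received_pps / offered_pps


def speedup(with_offload_pps, without_offload_pps):
    """How many times faster forwarding is with hardware offload."""
    return with_offload_pps / without_offload_pps


if __name__ == "__main__":
    print(f"hw offload delivery:    {delivery_ratio(RX_HW_PPS, PKTGEN_PPS):.1%}")
    print(f"no hw offload delivery: {delivery_ratio(RX_NO_HW_PPS, PKTGEN_PPS):.1%}")
    print(f"speedup:                {speedup(RX_HW_PPS, RX_NO_HW_PPS):.1f}x")
```

With these figures, hardware offload forwards about 97% of the offered load against about 3% without it, which is roughly a 32-fold difference.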