Introduction

IEC Type 3 mainly focuses on Android applications running on an edge ARM cloud architecture with GPU/vGPU management. ARM cloud games also need the basic features of a "cloud", such as flexibility and availability everywhere. Built on a cloud infrastructure optimized for Android applications, the blueprint provides ARM application services.

How to use this document

This document describes how to build, compile, and use the robox Android container environment. It covers environment configuration, code download, building and compiling, and usage.

This document is intended mainly for users who build and compile the robox Android container emulation environment.

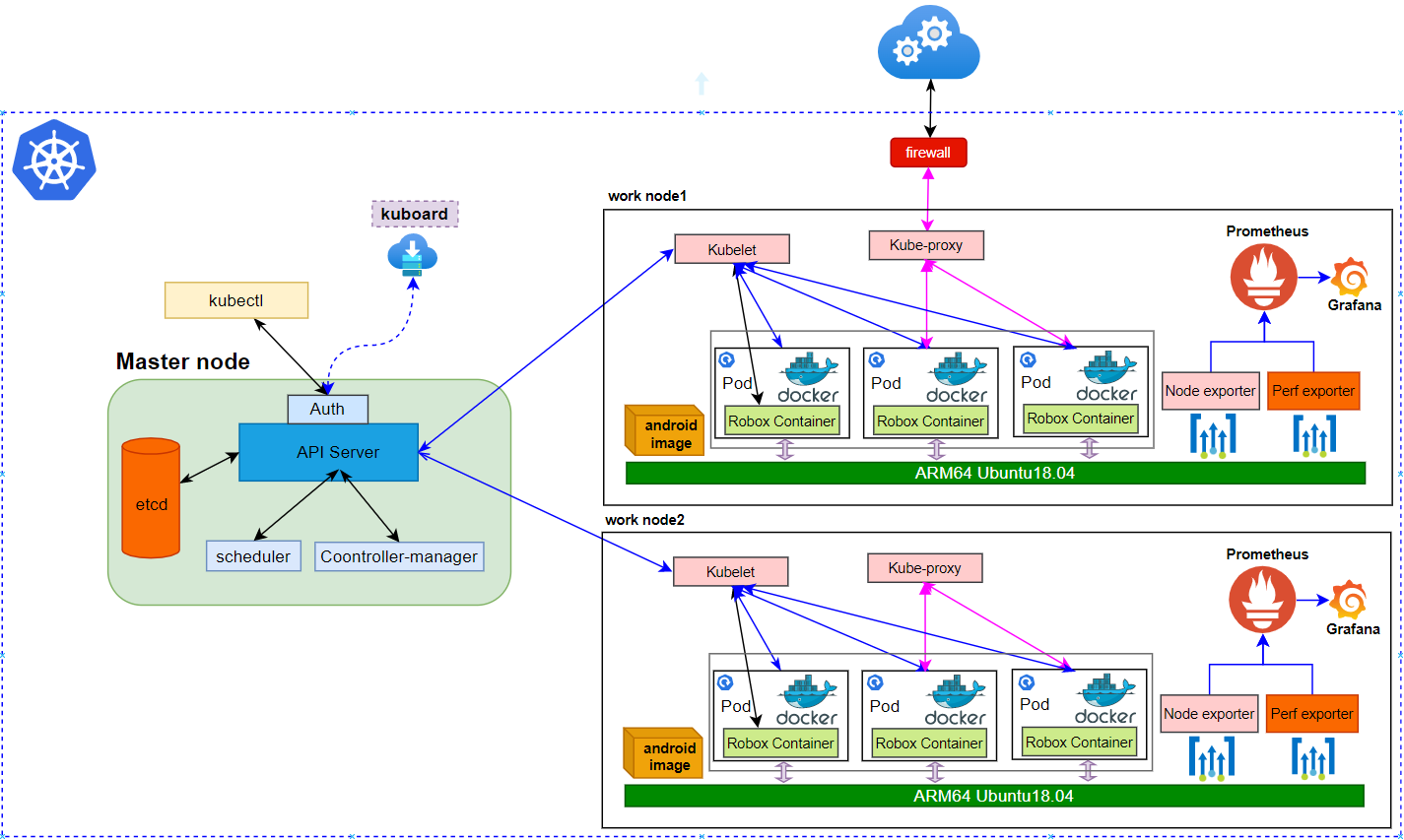

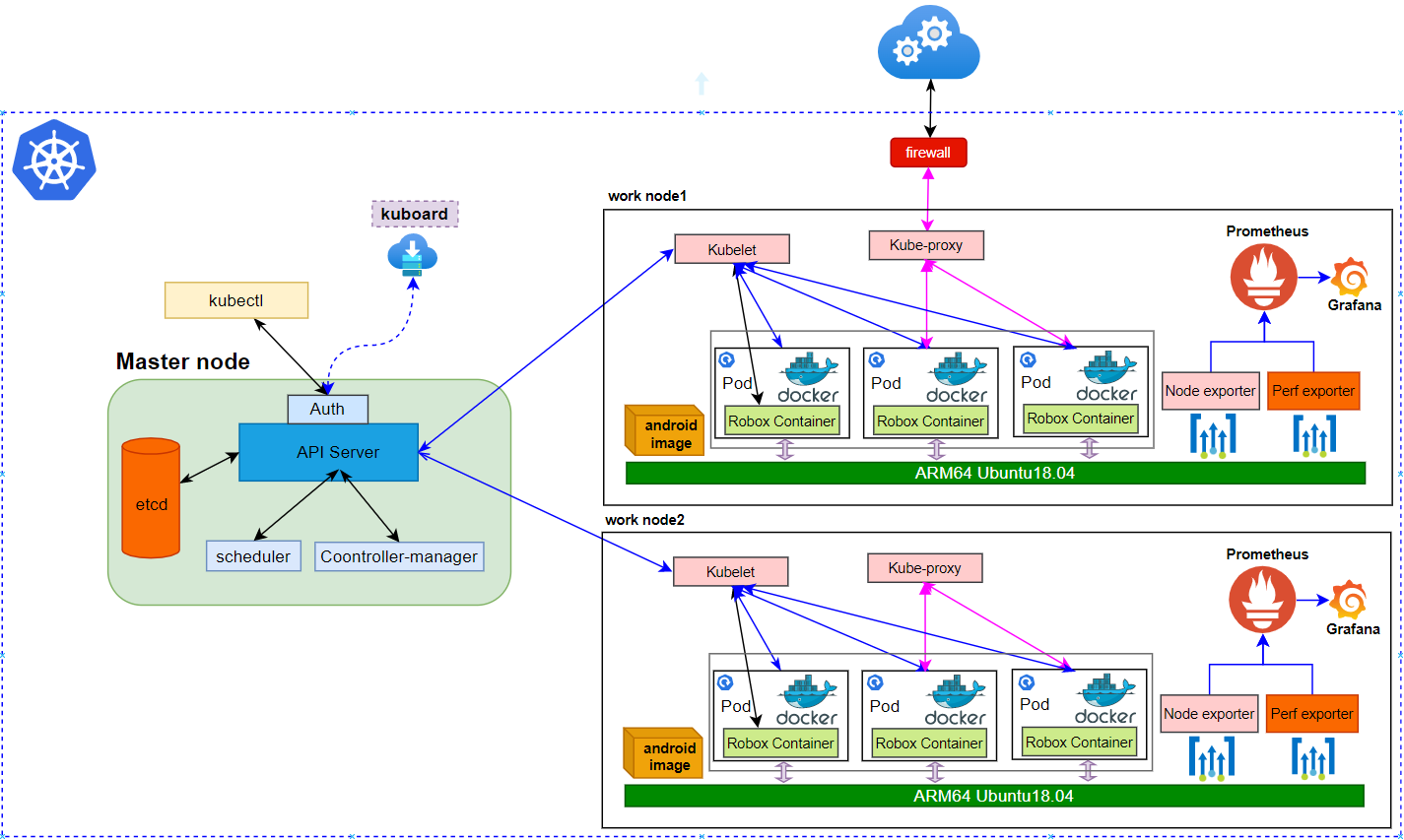

Deployment Architecture

Core Framework

Robox Framework

Pre-Installation Requirements

Two arm64 servers with the following hardware:

| Item | Specification |
|---|---|
| Arch | AArch64 |
| Processor model | 1 x AArch64 processor (A72 inside) |
| RAM | 16 x DDR4-2933 |
| Storage | 10 x 2.5-inch SAS/SATA/SSD or 8 x 2.5-inch NVMe SSD |
| Network | 1 onboard network card; each card supports 4 x GE, 4 x 10GE, or 4 x 25GE ports |
| Power supply | 100~240 V AC, 240 V DC |
| Dimensions | 447 mm x 490 mm x 86.1 mm |

The ARM servers are Arm ServerReady certified.

| Item | Description | Method / Version |
|---|---|---|
| OS | Ubuntu 18.04.3 (key) | |
| robox | robox is an Android container. | |
| robox compile and run dependencies | Packages needed to compile and run robox. | apt-get install build-essential cmake cmake-data debhelper dbus google-mock libboost-dev libboost-filesystem-dev libboost-log-dev libboost-iostreams-dev libboost-program-options-dev libboost-system-dev libboost-test-dev libboost-thread-dev libcap-dev libdbus-1-dev libdbus-cpp-dev libegl1-mesa-dev libgles2-mesa-dev libglib2.0-dev libglm-dev libgtest-dev liblxc1 libproperties-cpp-dev libprotobuf-dev libsdl2-dev libsdl2-image-dev lxc-dev pkg-config protobuf-compiler |
| Docker | Required by K8s and robox. | |
| Anbox | Runs Android applications on any GNU/Linux operating system. | |
| Grafana | Composes and scales observability with one or all pieces of the stack. | 8.4.3 |
| Prometheus | | 2.34.0 |
| K8s | Container orchestration engine for automating deployment, scaling, and management of containerized applications. | k8s: v1.23.5; kube-apiserver: v1.21.11; kube-scheduler: v1.21.11; kube-proxy: v1.21.11; etcd: 3.4.13-0; coredns: v1.8.0 |
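Before building, it can help to see which of the packages from the `apt-get install` line in the table are still missing. The following is a minimal sketch, not part of the robox tooling: the function name `missing_packages` and the `DPKG_QUERY` override variable are hypothetical, and the helper only relies on `dpkg-query`, which ships with Ubuntu.

```shell
#!/bin/sh
# Hypothetical helper: print every package (passed as arguments) that dpkg
# does not report as fully installed. DPKG_QUERY may be overridden; this
# exists only to make the function easy to test.
missing_packages() {
    for pkg in "$@"; do
        # '${Status}' is a dpkg-query format field, not a shell variable.
        status=$(${DPKG_QUERY:-dpkg-query} -W -f='${Status}' "$pkg" 2>/dev/null)
        if [ "$status" != "install ok installed" ]; then
            echo "$pkg"
        fi
    done
}
```

For example, `sudo apt-get install $(missing_packages build-essential cmake lxc-dev pkg-config)` installs only the packages that are not yet present.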

schema scripts: N/A

Other Installation Requirements

Jump Host Requirements: N/A

Network Requirements: The network connection must be working and able to communicate with the external network.

Installation High-Level Overview

Creating a Node Inventory File: N/A

Creating the Settings Files: N/A

Running: N/A

Upstream Deployment Guide

Upstream Deployment Key Features: N/A

Special Requirements for Upstream Deployments: N/A

Scenarios and Deploy Settings for Upstream Deployments: N/A

Including Upstream Patches with Deployment: N/A

Running: N/A

Interacting with Containerized Overcloud: N/A

Verifying the Setup as Defined by the Akraino Validation Feature Project, Plus Any Additional Testing Specific to the Blueprint

Install Main Components

Because the components and images required by the project are relatively large and compiling them takes a long time, the precompiled images are stored in a GitHub repository: https://github.com/ysemi-computing/RoboxWidget.git

The code structure after downloading is as follows:

├── android.img
├── components
│   ├── grafana-server
│   ├── node_exporter
│   ├── perf_exporter
│   └── prometheus
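After cloning, a quick check that the checkout matches the tree above can save a failed deployment later. This is a sketch under the assumption that the entries listed in the tree are the ones the later steps need; the function name `check_robox_widget` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: verify that a RoboxWidget checkout contains the
# entries shown in the directory tree. Prints the first missing entry to
# stderr and returns non-zero if anything is absent.
check_robox_widget() {
    root=${1:-RoboxWidget}   # default: directory created by git clone
    for entry in android.img \
                 components/grafana-server components/node_exporter \
                 components/perf_exporter components/prometheus; do
        if [ ! -e "$root/$entry" ]; then
            echo "missing: $root/$entry" >&2
            return 1
        fi
    done
    echo "layout ok: $root"
}
```

Typical use: `git clone https://github.com/ysemi-computing/RoboxWidget.git && check_robox_widget RoboxWidget`.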

Build Android image for anbox

Cloning and compiling the code is very time-consuming. If you only want to deploy and try the Android system with IEC, you can skip this section, because the repository you just cloned already contains the Android image (android.img).

First, install Ubuntu 18.04 on an AArch64 machine, switch to the root user, and then run the script "build_android_image.sh".
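Because the build runs for hours, it may be worth confirming the three prerequisites (AArch64, Ubuntu 18.04, root) before starting. The sketch below is hypothetical: `preflight_build_host` is not part of the build scripts, and it assumes the Ubuntu version can be read from `/etc/os-release`. Its optional arguments exist only so the checks can be exercised on another machine.

```shell
#!/bin/sh
# Hypothetical pre-flight check for the image build host.
# Arguments (all optional): architecture, os-release file, user id.
preflight_build_host() {
    arch=${1:-$(uname -m)}
    os_release=${2:-/etc/os-release}
    uid=${3:-$(id -u)}
    if [ "$arch" != "aarch64" ]; then
        echo "expected aarch64, found $arch" >&2
        return 1
    fi
    # Ubuntu 18.04 ships ID=ubuntu and VERSION_ID="18.04" in os-release.
    if ! grep -q '^ID=ubuntu$' "$os_release" ||
       ! grep -q '^VERSION_ID="18.04"$' "$os_release"; then
        echo "expected Ubuntu 18.04 according to $os_release" >&2
        return 1
    fi
    if [ "$uid" -ne 0 ]; then
        echo "run as root (uid 0); current uid is $uid" >&2
        return 1
    fi
    echo "build host looks ok"
}
```

For example, `preflight_build_host && ./build_android_image.sh`, assuming the script is in the current directory.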

The build takes about two hours. When it completes successfully, the output directory looks like this:

ls out/target/product/arm64/
android-info.txt  obj  previous_build_config.mk  recovery  symbols  system.img
build_fingerprint.txt  cache.img  data  gen  module-info.json  ramdisk.img  root  system  userdata.img
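Rather than eyeballing the listing, the image files can be checked for presence and non-zero size. This is a sketch that assumes `system.img`, `ramdisk.img`, `userdata.img`, and `cache.img` (all visible in the listing above) are the artifacts of interest; the function name `check_android_outputs` is hypothetical.

```shell
#!/bin/sh
# Hypothetical check that the Android build produced non-empty image files.
# Reports every image and returns non-zero if any of them is missing or empty.
check_android_outputs() {
    out_dir=${1:-out/target/product/arm64}
    status=0
    for img in system.img ramdisk.img userdata.img cache.img; do
        if [ -s "$out_dir/$img" ]; then
            echo "ok      $img"
        else
            echo "missing $img" >&2
            status=1
        fi
    done
    return $status
}
```

Run it from the top of the Android source tree, e.g. `check_android_outputs`, or pass another output directory as the first argument.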

Required packages installation

Before running anbox, install the required packages by executing the script "arm64_env_setup.sh". After the script finishes, you can check that the robox Docker image is available:

sudo docker images | grep robox
android    robox    e223a91c4b58    6 days ago    860MB
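The same check can be made scriptable by matching the REPOSITORY and TAG columns exactly instead of grepping for a substring. This sketch assumes the image is listed with repository `android` and tag `robox`, as read from the sample `docker images` output; both names, and the function name `has_robox_image`, are assumptions you can override.

```shell
#!/bin/sh
# Hypothetical helper: read `docker images` output on stdin and succeed only
# if a line has REPOSITORY = $1 (default "android") and TAG = $2 (default "robox").
has_robox_image() {
    awk -v repo="${1:-android}" -v tag="${2:-robox}" \
        '$1 == repo && $2 == tag { found = 1 } END { exit !found }'
}
```

Example: `sudo docker images | has_robox_image && echo "robox image present"`.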

Run robox android system

After the two scripts above have been executed, the robox runtime environment is ready. The robox directory structure is as follows:

├── android
├── binaryFiles
├── cmake
├── COPYING.GPL
├── cross-compile-chroot.sh
├── data
├── docs
├── external
├── kernel
├── patch
├── products
├── scripts
├── src

Step 1: Copy the binaryFiles directory from the robox source tree to your home directory, then run robox from it.

Start the first container instance:

./robox -v start 1

Start the second container instance:

./robox -v start 2

Step 2: Check that the Docker instance containers are running.

sudo docker ps | grep instance

Step 3: Check the anbox session manager process.

ps aux | grep session

Step 4: Log in to the container to confirm that the robox instance started successfully.

docker exec -it instance2 sh

Step 5: Inside the container, read the Android property sys.boot_completed.

getprop | grep sys.boot_completed

[sys.boot_completed]: [1]

A value of 1 means that the instance has booted successfully.
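Steps 4 and 5 can be combined into a non-interactive poll, which is handy right after `./robox -v start N` because Android takes a while to boot. This is a sketch, not part of robox: the function name `wait_for_boot` and the `DOCKER` override are hypothetical, and it assumes `getprop` is on the PATH of `sh` inside the container, as it is in the interactive shell used in step 4.

```shell
#!/bin/sh
# Hypothetical helper: poll a robox container until Android reports
# sys.boot_completed=1. Set DOCKER="sudo docker" if your user is not in
# the docker group.
wait_for_boot() {
    name=$1            # container name, e.g. instance1 or instance2
    tries=${2:-60}     # number of polls before giving up
    delay=${3:-5}      # seconds to sleep between polls
    while [ "$tries" -gt 0 ]; do
        value=$(${DOCKER:-docker} exec "$name" sh -c 'getprop sys.boot_completed' 2>/dev/null | tr -d '\r')
        if [ "$value" = "1" ]; then
            echo "$name: boot completed"
            return 0
        fi
        tries=$((tries - 1))
        sleep "$delay"
    done
    echo "$name: not booted after polling" >&2
    return 1
}
```

For example: `./robox -v start 1 && ./robox -v start 2`, then `wait_for_boot instance1 && wait_for_boot instance2`.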

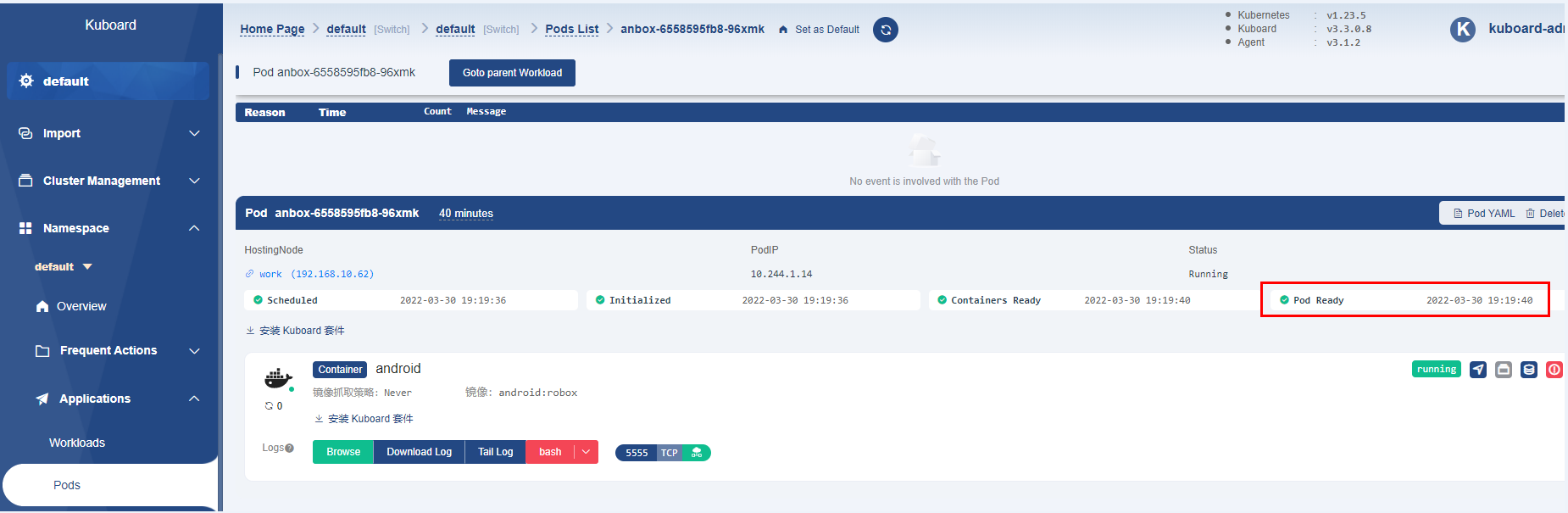

Cluster deployment and access by K8S

Starting anbox instances by hand with commands is cumbersome when deploying in a real environment, so Kubernetes (K8s) is used here to deploy the anbox instances. With K8s, anbox can be deployed, run, monitored, and analyzed much more effectively across multiple host nodes. The K8s cluster setup and container orchestration are described below.

1 Basic Architecture

2 K8s Cluster construction

The K8s cluster can be built with the code in the IEC repository as follows:

cd iec/deploy/compass && bash deployIEC.sh

Here is the current configuration:

Ubuntu Version: 18.04

Docker: 20+

k8s: 1.21.3

Host Network:

master: 192.168.10.66

work: 192.168.10.62

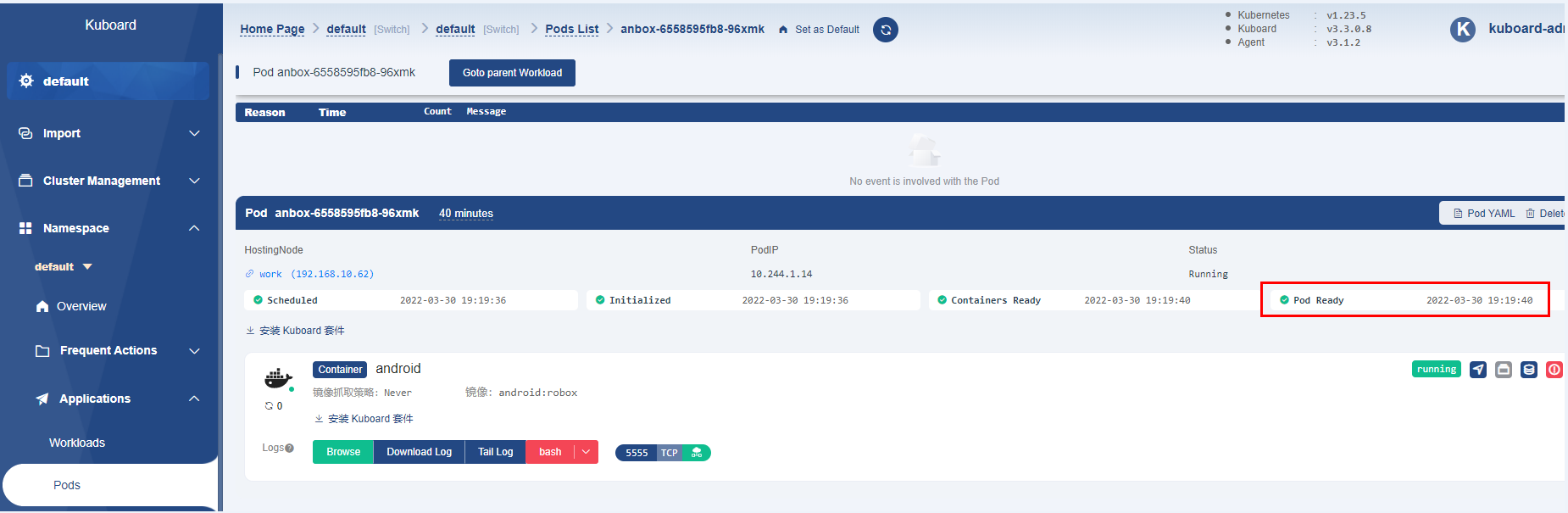

3 Check cluster running status

kubectl get cs
Warning: v1 ComponentStatus is deprecated in v1.19+
NAME                 STATUS    MESSAGE             ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-0               Healthy   {"health":"true"}

kubectl get node
NAME     STATUS   ROLES                  AGE   VERSION
master   Ready    control-plane,master   22h   v1.21.3
work     Ready    <none>                 22h   v1.21.5

The cluster status can also be presented through a K8s visualization component. Here we use Kuboard, which is very convenient to install and use.

Then open Kuboard in a web browser:

URL: http://master-ip-address:30080

User: admin

Password: Kuboard123

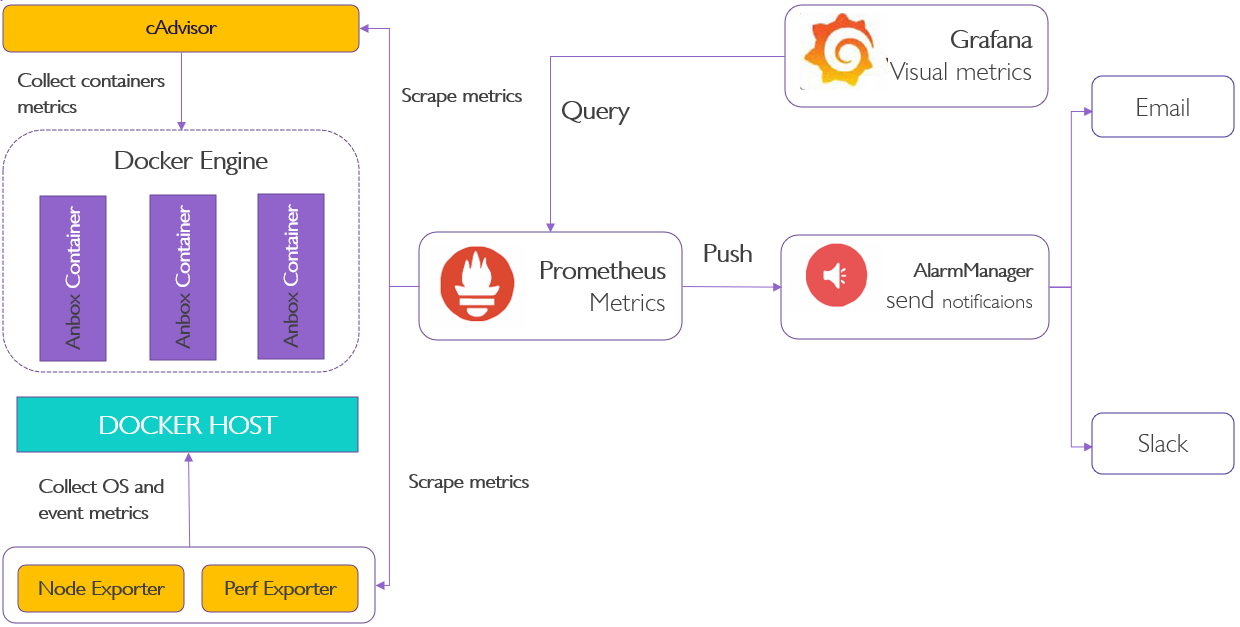
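The node check from the `kubectl get node` output can also be scripted, for example at the end of a deployment run. The sketch below is hypothetical (`all_nodes_ready` is not part of the IEC scripts); it parses the default table output and treats any STATUS other than exactly `Ready` (including `NotReady` or `Ready,SchedulingDisabled`) as a failure.

```shell
#!/bin/sh
# Hypothetical helper: read `kubectl get nodes` output on stdin, print the
# nodes that are not Ready, and fail if any exist or no nodes are listed.
all_nodes_ready() {
    awk 'NR == 1 { next }                     # skip the header line
         { total++
           if ($2 != "Ready") { print "not ready: " $1; bad++ } }
         END { exit (total == 0 || bad > 0) }'
}
```

Example: `kubectl get nodes | all_nodes_ready && echo "all nodes Ready"`.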

1 Basic Architecture

2 Bootup basic components

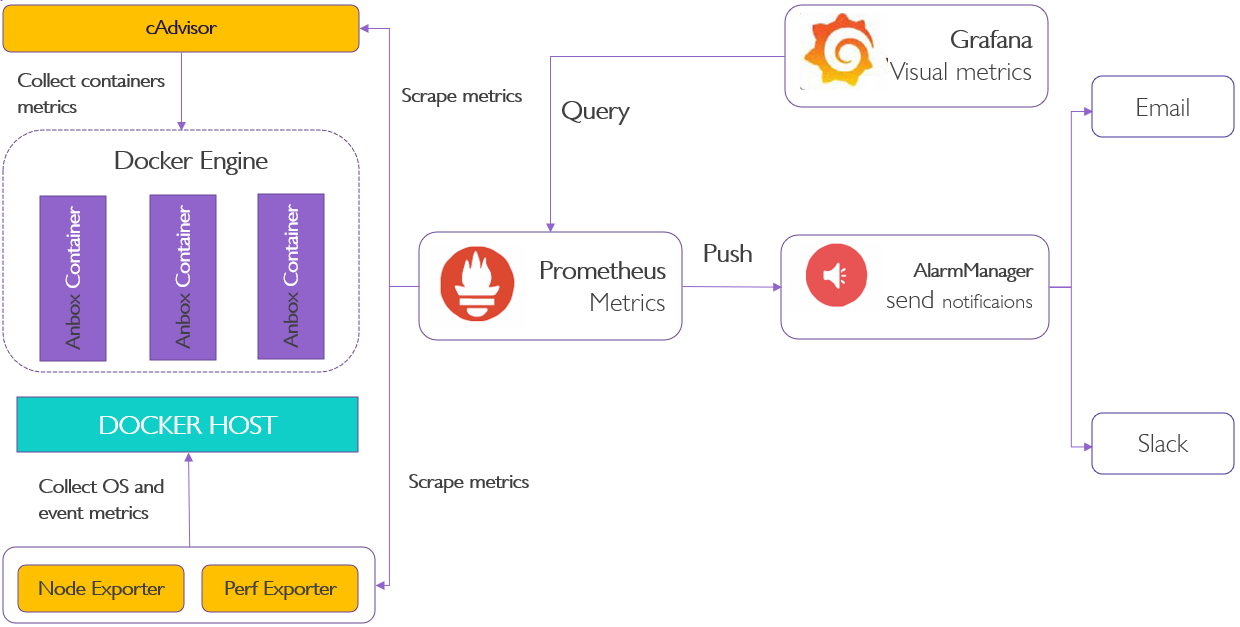

Prometheus is used for data monitoring, and Grafana provides a better visual interface on top of it; the data Grafana needs is obtained from Prometheus.

This project only needs to run the script below:

.

After the script is executed, Prometheus and Grafana are downloaded and run in the background.
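To confirm that both services actually came up, their health endpoints can be probed. This is a sketch under stated assumptions: Prometheus is on port 9090 (the port used by the expression browser in this document) and exposes `/-/healthy`; Grafana is assumed to listen on its default port 3000 and to expose `/api/health`. The function name `check_monitoring` and the `CURL` override are hypothetical.

```shell
#!/bin/sh
# Hypothetical health check for the monitoring stack.
# Port 9090: Prometheus (as used in this document); port 3000: Grafana default (assumption).
check_monitoring() {
    host=${1:-localhost}
    status=0
    for url in "http://$host:9090/-/healthy" "http://$host:3000/api/health"; do
        # -f: fail on HTTP errors, -sS: quiet but still print errors.
        if ${CURL:-curl} -fsS -o /dev/null "$url"; then
            echo "up   $url"
        else
            echo "down $url" >&2
            status=1
        fi
    done
    return $status
}
```

Run `check_monitoring` on the host where the script was executed, or `check_monitoring <host>` from another machine.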

3 Analyze data by web browser

Let us explore data that Prometheus has collected about itself. To use Prometheus's built-in expression browser, navigate to http://localhost:9090/graph and choose the "Table" view within the "Graph" tab. Enter the below into the expression console and then click "Execute":

prometheus_target_interval_length_seconds
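The same expression can be evaluated without a browser through the Prometheus HTTP API (`GET /api/v1/query`). The wrapper below is a hypothetical sketch (`prom_query` and the `CURL` override are not part of this project); `curl -G --data-urlencode` URL-encodes the expression so that queries containing braces or spaces also work.

```shell
#!/bin/sh
# Hypothetical wrapper around the Prometheus instant-query endpoint.
# $1: PromQL expression, $2: base URL (default http://localhost:9090).
prom_query() {
    expr=$1
    base=${2:-http://localhost:9090}
    # -G sends the --data-urlencode value as a URL query string on a GET request.
    ${CURL:-curl} -fsS -G "$base/api/v1/query" --data-urlencode "query=$expr"
}
```

For example, `prom_query prometheus_target_interval_length_seconds` prints the JSON response; a successful query contains `"status":"success"`.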

In addition, you can log in to Grafana through a web browser to view the dashboards:

user: admin

password: admin

Developer Guide and Troubleshooting

N/A

Post-deployment Configuration

N/A

N/A

N/A

Uninstall Guide

N/A

Troubleshooting

After the server restarts, the ashmem_linux and binder_linux modules must be loaded again and the device permissions reset, as follows:

sudo modprobe ashmem_linux
sudo rmmod binder_linux || true
sudo modprobe binder_linux num_devices=254
sudo chmod 777 /dev/ashmem /dev/binder*
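A quick way to tell whether these commands still need to be run after a reboot is to check for the device nodes they create. The sketch below is hypothetical (`binder_devices_ready` is not part of robox); it only tests that `/dev/ashmem` and at least one `/dev/binder*` node exist, and the optional directory argument exists only to make it testable.

```shell
#!/bin/sh
# Hypothetical post-reboot check: are the ashmem and binder device nodes present?
binder_devices_ready() {
    dev_dir=${1:-/dev}
    if [ ! -e "$dev_dir/ashmem" ]; then
        echo "missing $dev_dir/ashmem: load ashmem_linux" >&2
        return 1
    fi
    count=0
    for node in "$dev_dir"/binder*; do
        # If nothing matches, the glob stays literal and -e fails.
        if [ -e "$node" ]; then count=$((count + 1)); fi
    done
    if [ "$count" -eq 0 ]; then
        echo "no $dev_dir/binder* nodes: load binder_linux" >&2
        return 1
    fi
    echo "ashmem present, $count binder device(s)"
}
```

If `binder_devices_ready` fails, run the four commands above before starting robox.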

If an external graphics card is installed, the built-in graphics controller must be disabled manually after each server restart, for example:

lspci | grep -in VGA
6:0007:41:00.0 VGA compatible controller: Huawei Technologies Co., Ltd. Hi1710 [iBMC Intelligent Management system chip w/VGA support] (rev 01)
echo 1 > /sys/bus/pci/devices/0007\:41\:00.0/remove
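Since the PCI address has to be looked up after every restart, the lookup can be automated. This sketch assumes the built-in controller can be recognized by a substring of its `lspci` description (`Hi1710` in the example above, used as the default); the function name `onboard_vga_remove_paths` is hypothetical. It expects `lspci -D` output, which always includes the PCI domain, so the first field is the full address used under `/sys/bus/pci/devices/`.

```shell
#!/bin/sh
# Hypothetical helper: read `lspci -D` output on stdin and print the sysfs
# "remove" path of each VGA controller whose description matches $1.
onboard_vga_remove_paths() {
    pattern=${1:-Hi1710}
    grep 'VGA compatible controller' | grep -- "$pattern" |
        awk '{ printf "/sys/bus/pci/devices/%s/remove\n", $1 }'
}
```

For example: `lspci -D | onboard_vga_remove_paths | while read -r path; do echo 1 | sudo tee "$path" >/dev/null; done`. As with the manual command, the removal does not survive a reboot.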

Maintenance

Blue Print Package Maintenance

Software maintenance: N/A

Hardware maintenance: N/A

Blue Print Deployment Maintenance (N/A)

Frequently Asked Questions

N/A

License

N/A

References

N/A

Definitions, acronyms and abbreviations

N/A