Introduction

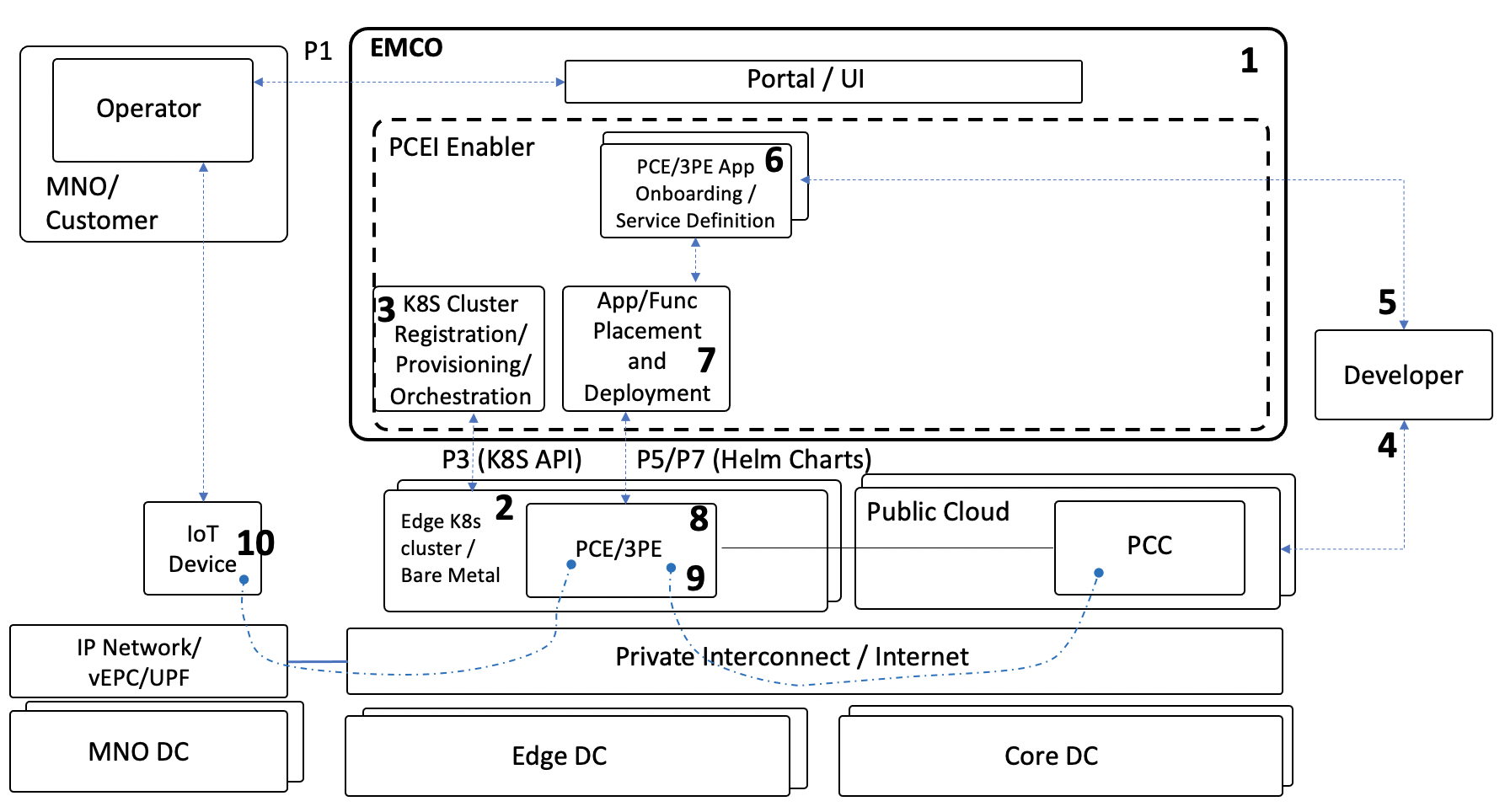

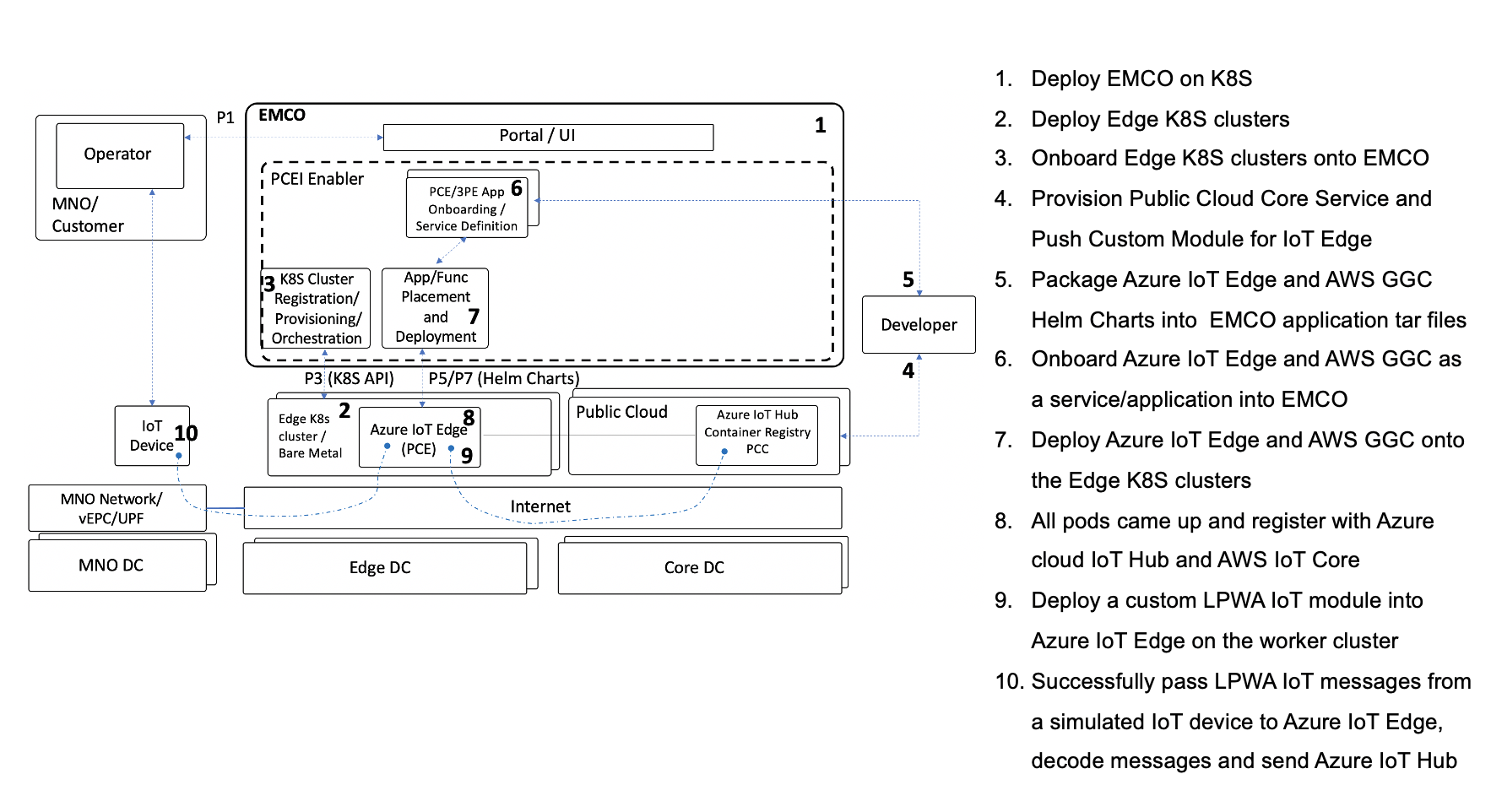

This document describes the use of the Public Cloud Edge Interface (PCEI), implemented on top of the Edge Multi-Cluster Orchestrator (EMCO), to deploy Public Cloud Edge (PCE) Apps from multiple clouds (Azure and AWS) and a 3rd-Party Edge (3PE) App (an implementation of the ETSI MEC Location API App). It also covers the end-to-end operation of the deployed PCE Apps using a simulated Low Power Wide Area (LPWA) IoT client.

End-to-End Validation Environment

The end-to-end validation environment and the flow of steps are shown below:

Description of the components of the end-to-end validation environment:

- EMCO - Edge Multi-Cluster Orchestrator. PCEI Enabler functions are based on the EMCO implementation. EMCO is deployed in a K8S cluster.

- Edge K8S Clusters - Kubernetes clusters on which the PCE Apps (Azure IoT Edge, AWS GGC) and the 3PE App (ETSI Location API Handler) are deployed.

- Public Cloud - IaaS/SaaS (Azure, AWS).

- PCE - Public Cloud Edge App (Azure IoT Edge, AWS GGC)

- 3PE - 3rd-Party Edge App (ETSI MEC Location API App)

- Private Interconnect / Internet - Networking between the IoT Device/Client and PCE/3PE, as well as connectivity between PCE and PCC (Public Cloud Core).

- IoT Device - Simulated Low Power Wide Area (LPWA) IoT Client.

- IP Network (vEPC/UPF) - Network providing connectivity between the IoT Device and PCE/3PE. Note that in this validation the vEPC (virtual Evolved Packet Core) and UPF (User Plane Function) are not used.

- MNO DC - Mobile Network Operator Data Center. Not used in the validation.

- Edge DC - Edge Data Center. Equinix DC used in this validation.

- Core DC - Public Cloud.

- Developer - an individual/entity providing PCE/3PE App.

- Operator - an individual/entity operating PCEI functions.

Note that P1, P3, P5/P7 Reference Points are shown to illustrate alignment with general PCEI architecture.

The end-to-end PCEI validation steps are described below:

1. Deploy EMCO.

2. Deploy Edge K8S clusters.

3. Onboard Edge K8S clusters onto EMCO.

4. Provision Public Cloud Core Service and PCE software.

5. Package PCE/3PE Helm Charts into EMCO.

6. Onboard PCE/3PE as a service/application into EMCO.

7. Deploy PCE/3PE onto the Edge K8S clusters.

8. All PCE pods come up and register with PCC. All 3PE pods come up.

9. Deploy software onto PCE on the worker cluster.

10. Successfully pass LPWA IoT messages from a simulated IoT device to PCE, decode messages and send to PCC.

For performing Steps 1 and 2 please refer to the PCEI R4 Installation Guide.

Provisioning PCEI Orchestration Infrastructure (EMCO)

Please refer to the PCEI R4 Installation Guide to deploy EMCO and Edge K8S Clusters.

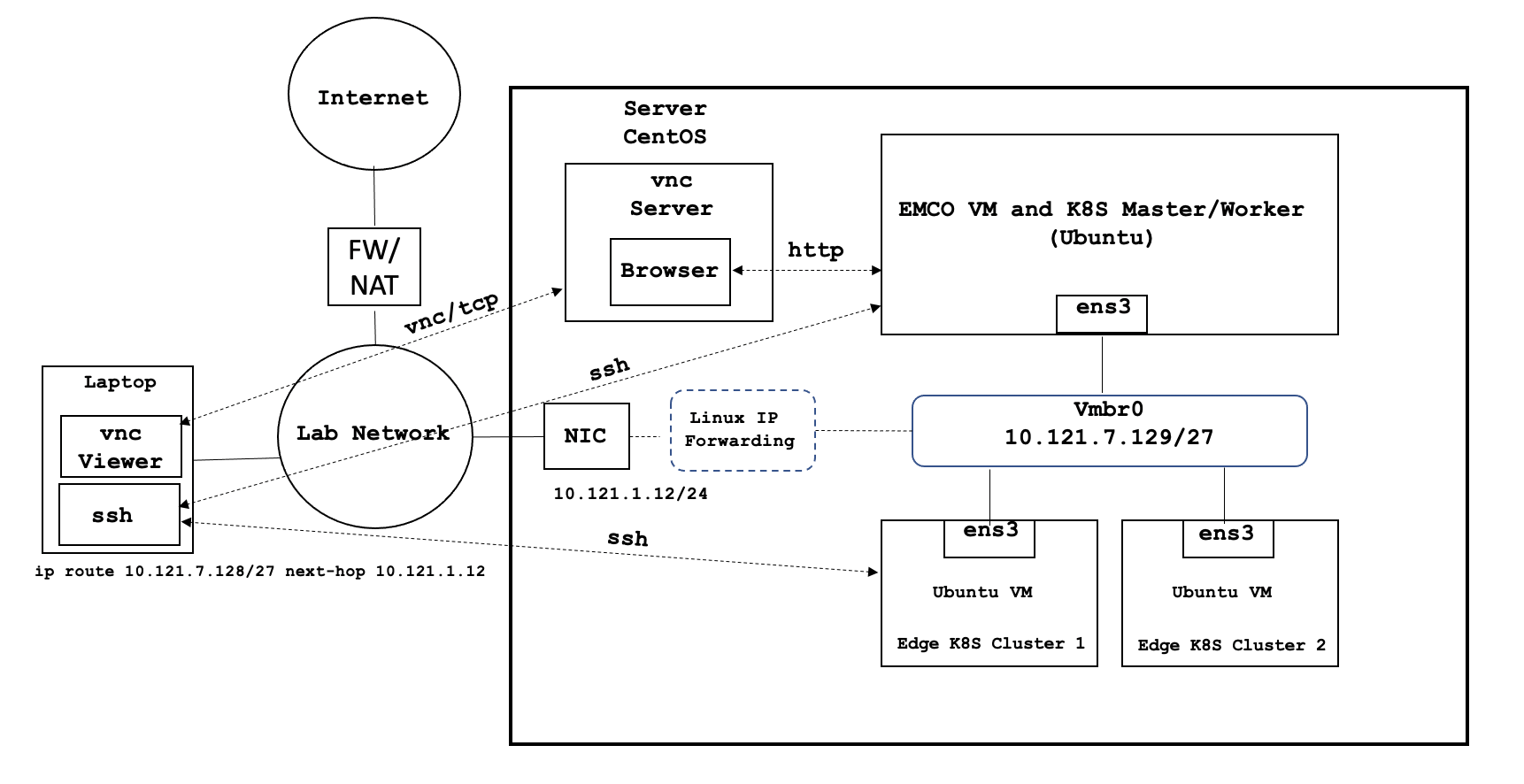

Due to current limitations in EMCO functionality, it is recommended that VNC is used to access the EMCO UI via a Web Browser as shown in the diagram below:

To access the EMCO cluster and the Edge K8S clusters via ssh directly from a laptop, as shown in the diagram above, copy the ssh key used in the PCEI installation to your local machine:

    ### Copy the id_rsa key from the deployment host to be able to ssh to the EMCO and cluster VMs
    sftp onaplab@10.121.1.12
    get ~/.ssh/id_rsa pcei-emco

    ### ssh to VMs
    ssh -i pcei-emco onaplab@10.121.7.145
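
ssh refuses to use a private key file whose permissions allow access by other users. The sketch below is not part of the original procedure; it assumes the key was saved locally as `pcei-emco` (the name used above) and introduces a hypothetical helper, `secure_key`, that restricts the file to its owner.

```shell
#!/bin/sh
# Hypothetical helper (not from the PCEI guide): restrict a private key
# to owner read/write so that ssh accepts it.
secure_key() {
  key_file=$1
  # Fail early if the key was not downloaded.
  [ -f "$key_file" ] || { echo "missing key: $key_file" >&2; return 1; }
  chmod 600 "$key_file"
}
```

Typical use would be `secure_key pcei-emco` right after the sftp download and before the first `ssh -i pcei-emco ...` command.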

Accessing EMCO UI

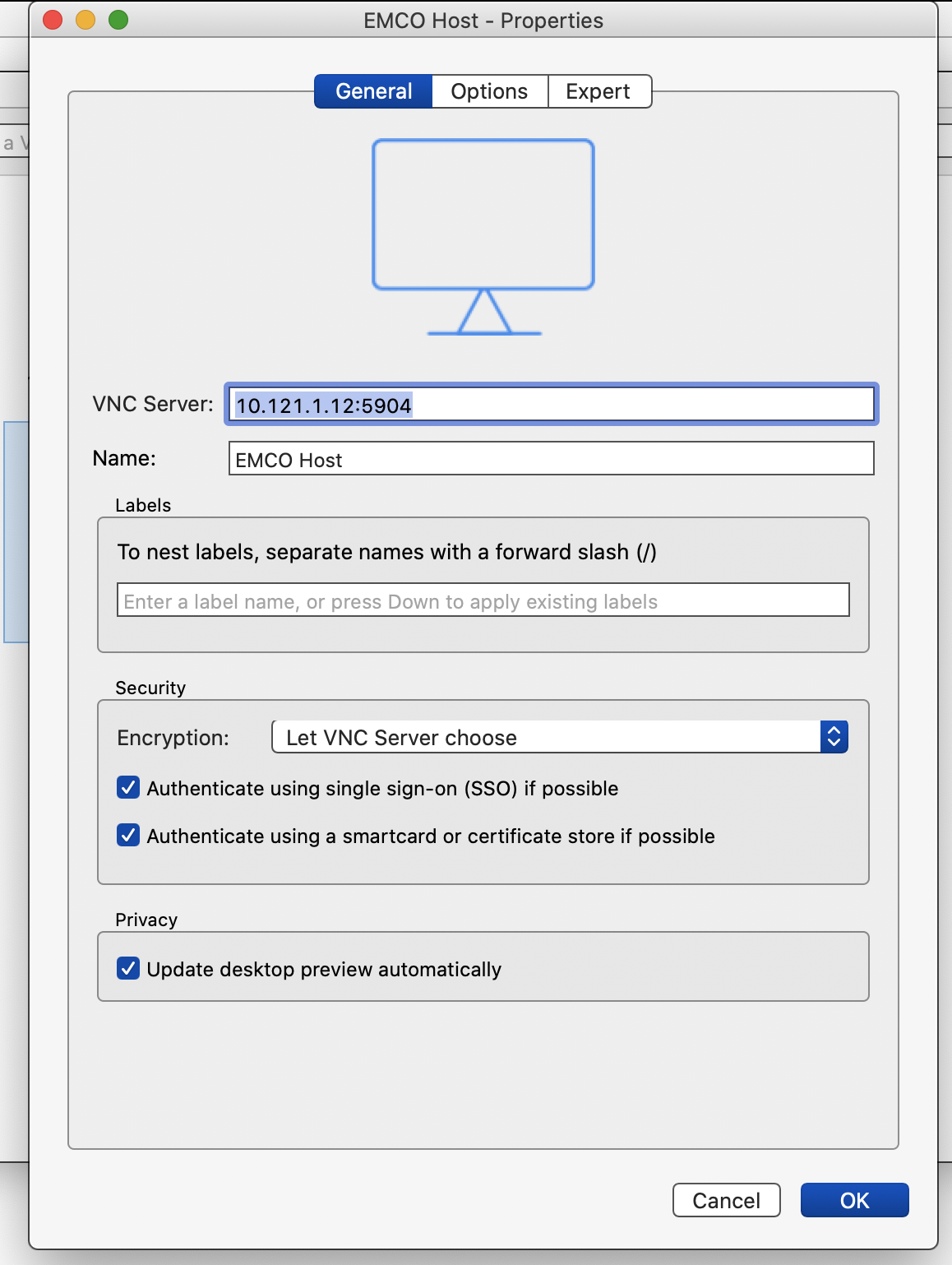

In order to access EMCO UI please install VNC Viewer on your local laptop and configure it to connect to the VNC server that was installed on the EMCO Host Server (refer to the PCEI R4 Installation Guide for VNC server installation instructions).

Connect to the VNC server, start the Browser and point it to the EMCO VM (amcop-vm-01) IP address and port 30480:

http://10.121.7.145:30480

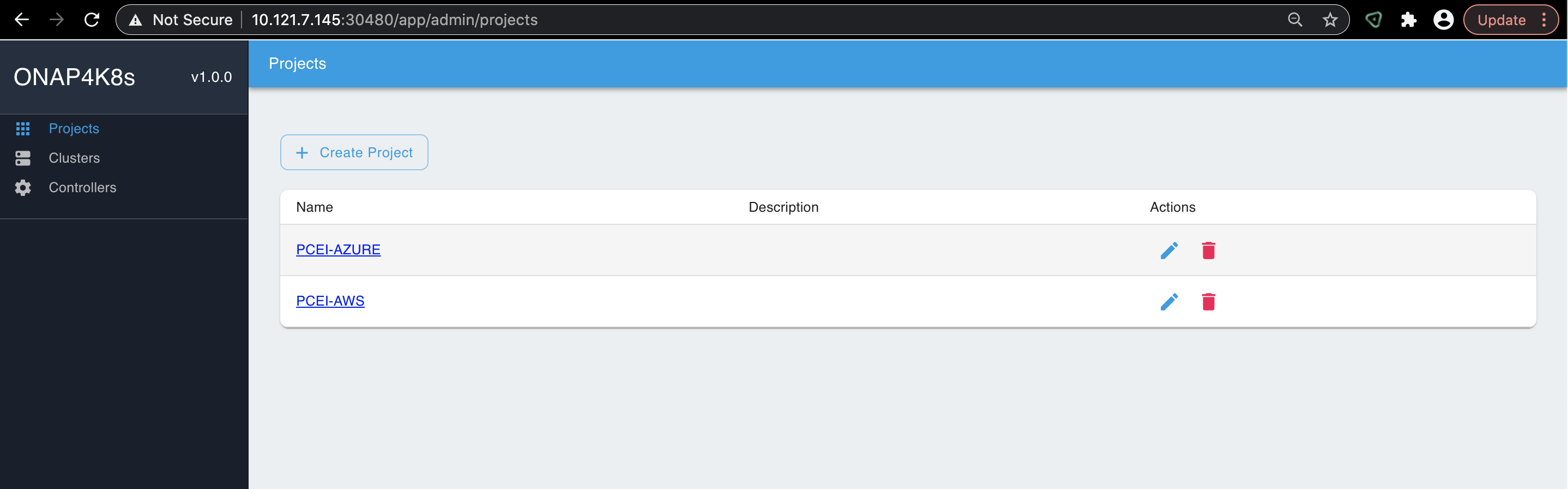

You should be able to connect to EMCO UI as shown below:

Provisioning Network Controllers

The following Network Controllers must be provisioned in EMCO:

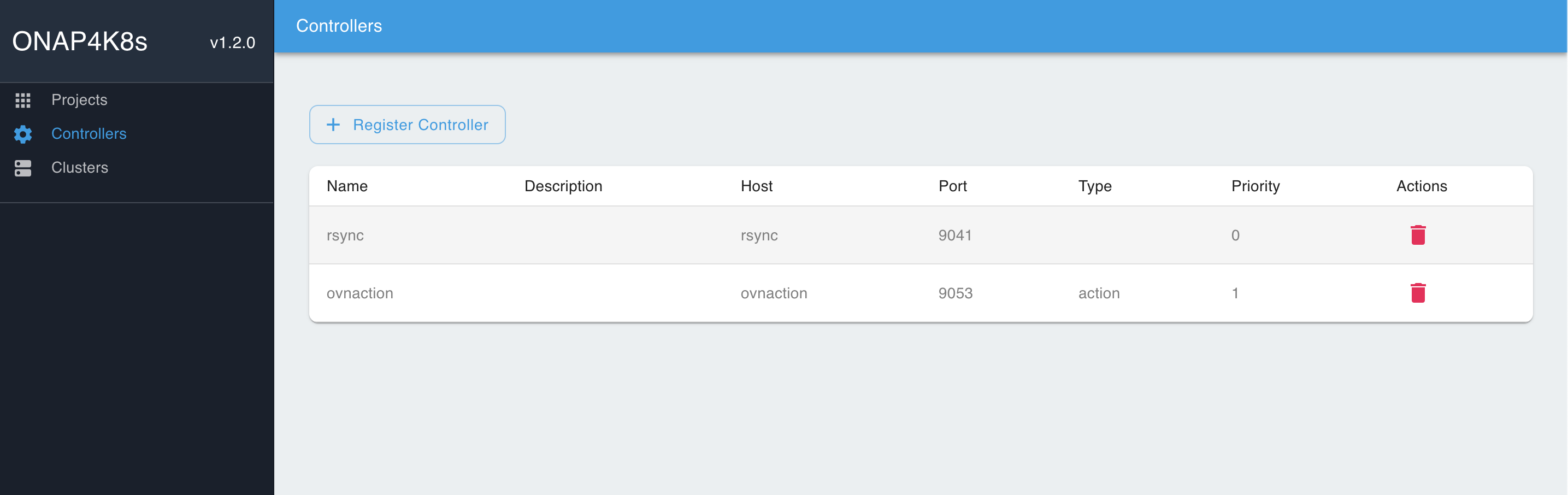

    ### Add controllers
    rsync:
      host: rsync
      port: 9041
      type: <leave blank, not required>
      priority: <leave blank, not required>
    ovnaction:
      host: ovnaction
      port: 9053
      type: action
      priority: 1

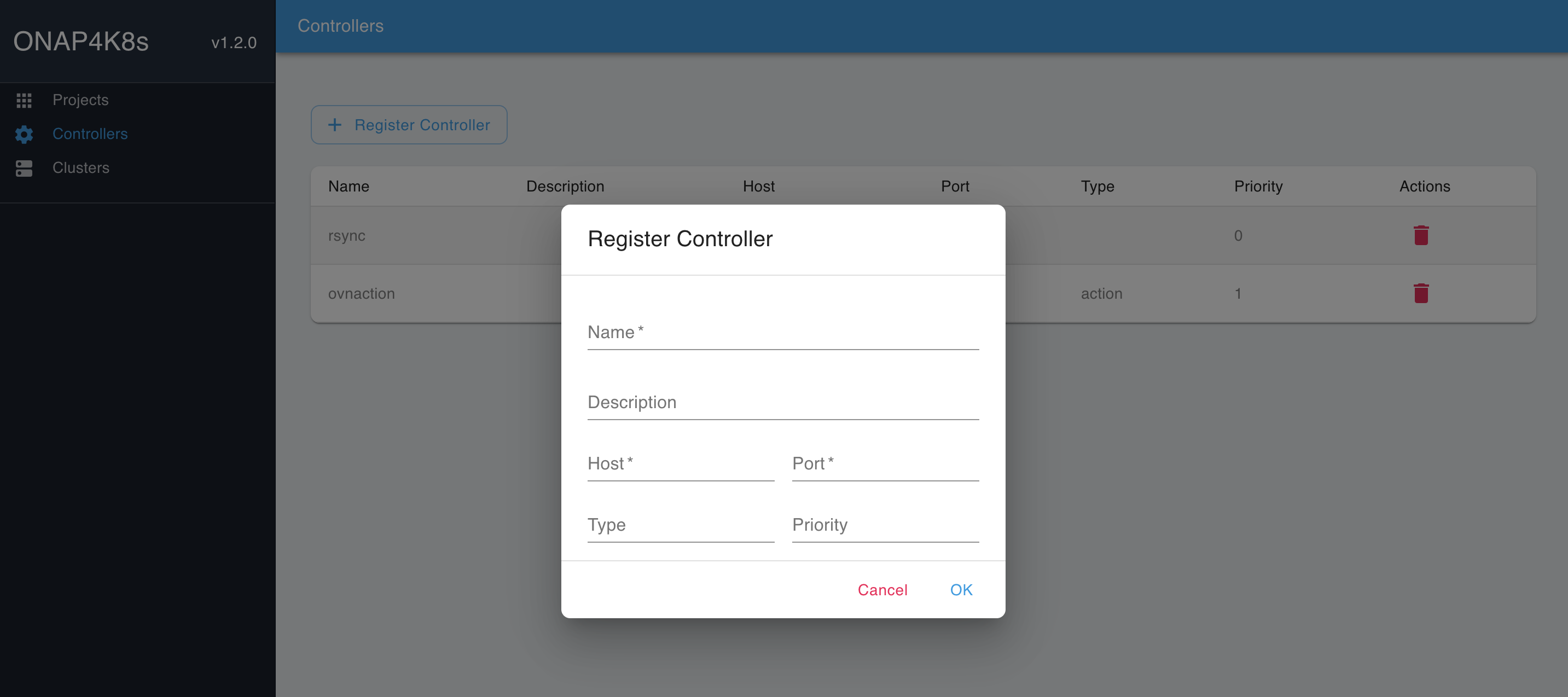

To provision Network Controllers, use the parameters shown above in the EMCO UI "Controllers → Register Controllers" tab:

Fill in the parameters for the "rsync" and the "ovnaction" controllers:

Registering Edge Clusters

To register the two Edge K8S Clusters created during PCEI installation (refer to the PCEI R4 Installation Guide), the cluster configuration files need to be copied from the corresponding VMs to the machine that runs the VNC server used to access the EMCO UI. Please make sure that the ssh key used to access the VMs is present on that machine. The example below assumes that the VNC server is running on the Host Server used to deploy the EMCO and Edge Cluster VMs.

    # Determine VM IP addresses:
    [onaplab@os12 ~]$ sudo virsh list --all
     Id    Name                           State
    ----------------------------------------------------
     6     amcop-vm-01                    running
     9     edge_k8s-1                     running
     10    edge_k8s-2                     running

    [onaplab@os12 ~]$ sudo virsh domifaddr edge_k8s-1
     Name       MAC address          Protocol     Address
    -------------------------------------------------------------------------------
     vnet1      52:54:00:19:96:72    ipv4         10.121.7.152/27

    [onaplab@os12 ~]$ sudo virsh domifaddr edge_k8s-2
     Name       MAC address          Protocol     Address
    -------------------------------------------------------------------------------
     vnet2      52:54:00:c0:47:8b    ipv4         10.121.7.146/27

    sftp -i pcei-emco onaplab@10.121.7.146
    cd .kube
    get config kube-config-edge-k8s-2

    sftp -i pcei-emco onaplab@10.121.7.152
    cd .kube
    get config kube-config-edge-k8s-1
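
When these steps are scripted, two small helpers may be convenient. The sketch below is an illustration rather than part of the PCEI tooling: `domif_ipv4` and `looks_like_kubeconfig` are hypothetical names. The first extracts the IPv4 address from `virsh domifaddr` output; the second is a static sanity check, assuming the downloaded file has the usual top-level `kind: Config` and `clusters:` keys of a kubeconfig.

```shell
#!/bin/sh
# Print the IPv4 address (without the /prefix length) found in
# `virsh domifaddr` output read from stdin.
domif_ipv4() {
  awk '$3 == "ipv4" { split($4, parts, "/"); print parts[1]; exit }'
}

# Static check only: the file is non-empty and has the top-level keys
# a kubeconfig normally contains. It does not contact the cluster.
looks_like_kubeconfig() {
  [ -s "$1" ] && grep -q '^kind: Config' "$1" && grep -q '^clusters:' "$1"
}

# Sample output copied from this guide, used in place of a live virsh call.
sample=' Name       MAC address          Protocol     Address
-------------------------------------------------------------------------------
 vnet1      52:54:00:19:96:72    ipv4         10.121.7.152/27'

printf '%s\n' "$sample" | domif_ipv4   # prints 10.121.7.152
```

On the Host Server the first helper would be used as `sudo virsh domifaddr edge_k8s-1 | domif_ipv4`. A stronger check of a downloaded file, where `kubectl` is installed and the cluster is reachable, is `kubectl --kubeconfig kube-config-edge-k8s-1 get nodes`.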

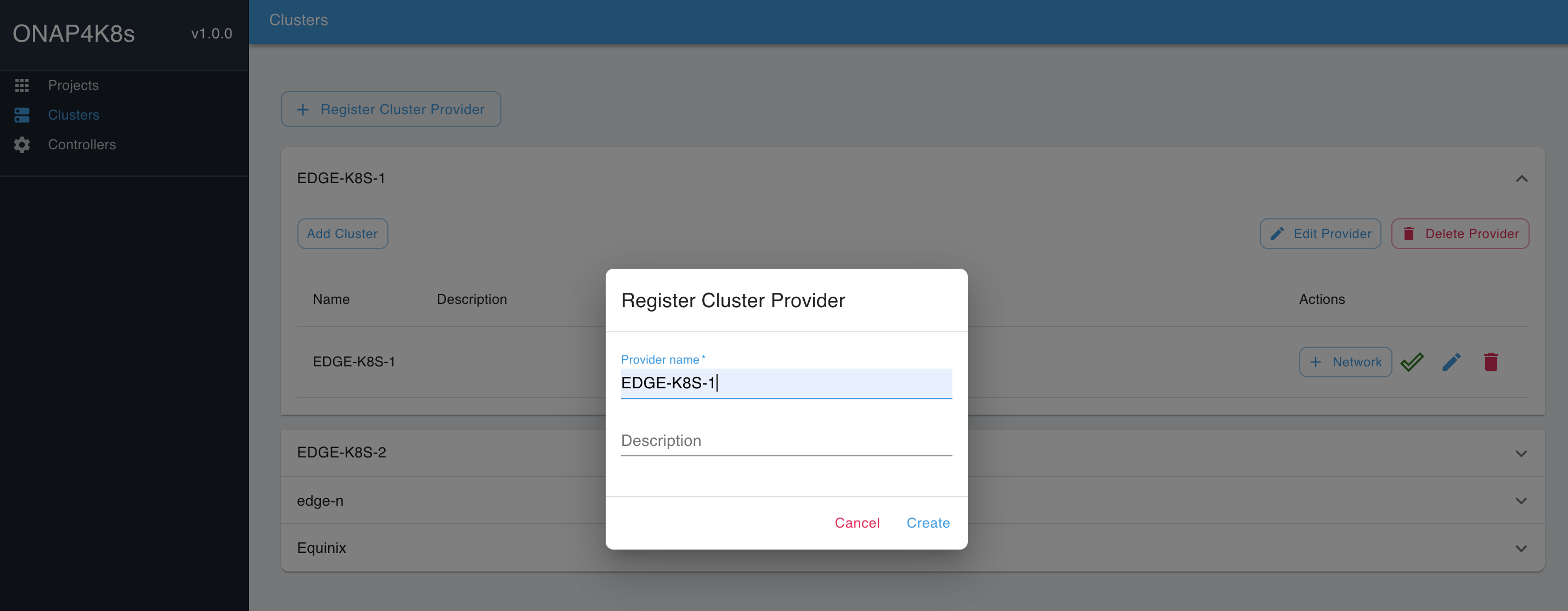

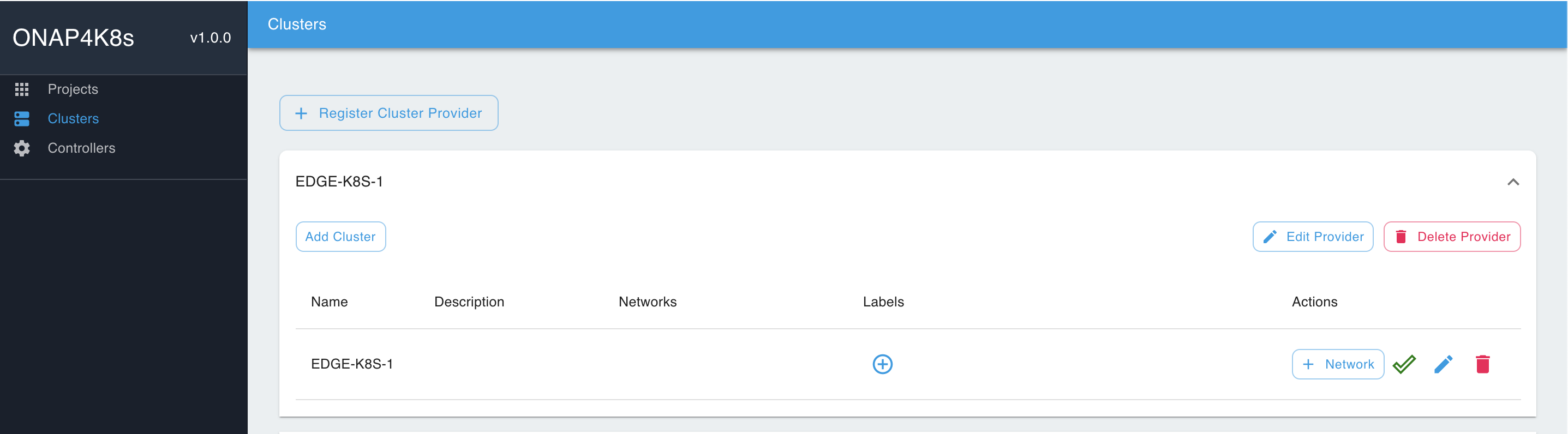

Using EMCO UI "Cluster → Register Cluster Provider" tabs, provision Cluster Providers for EDGE-K8S-1 and EDGE-K8S-2 clusters:

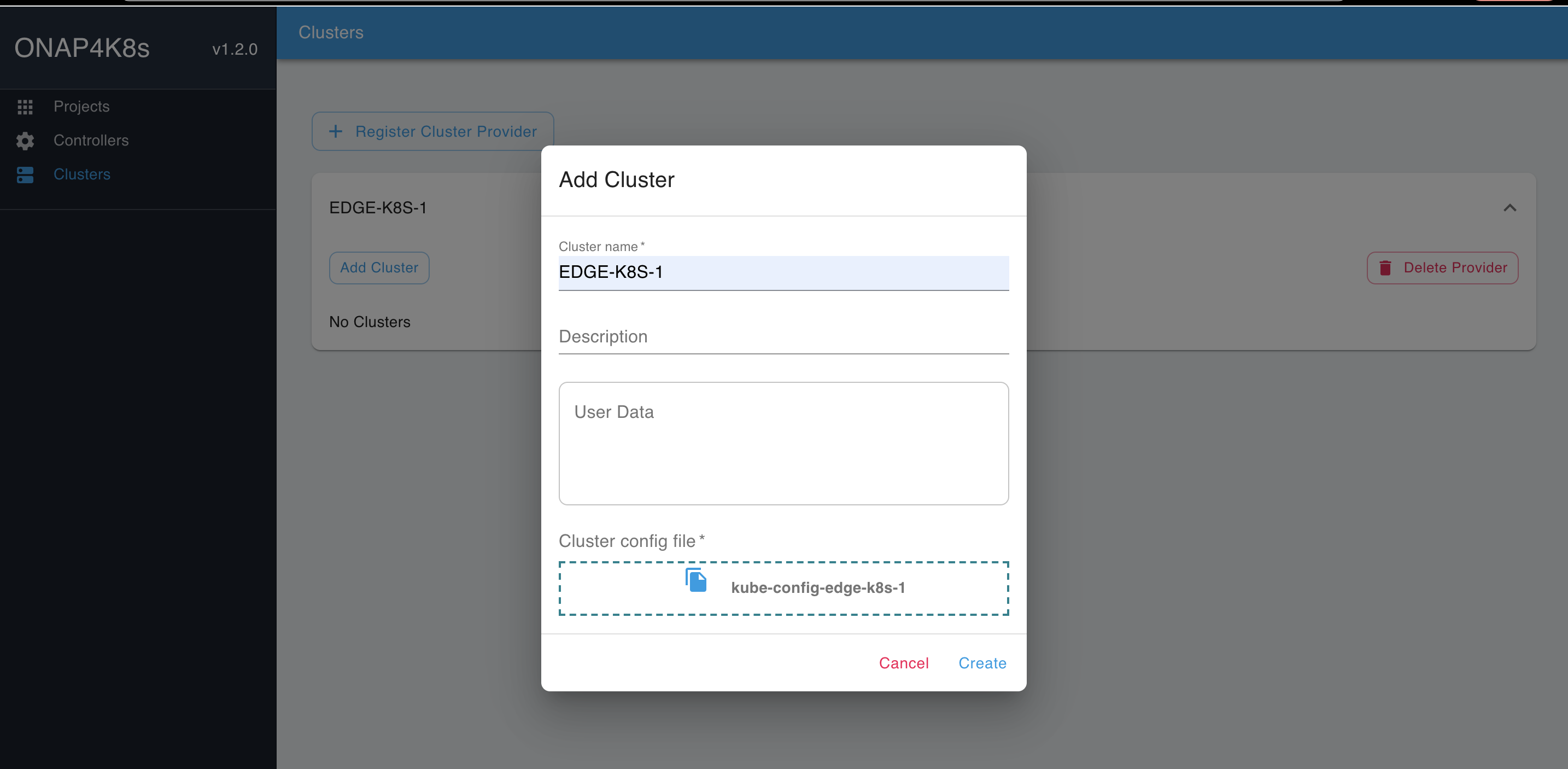

Click on the Cluster Provider and click on "Add Cluster". Fill in the Cluster Name and add the config file downloaded earlier. Repeat for the two clusters EDGE-K8S-1 and EDGE-K8S-2:

You should see the following result:

At this point the edge clusters have been registered with the orchestrator and are ready for placing PCE and 3PE apps.

Deploying Azure IoT Edge with PCEI

Overall Deployment Summary

The deployment of Azure IoT Edge cloud native application as a PCE involves the following steps:

- Provision Azure Cloud (PCC).

- Provision IoT Hub.

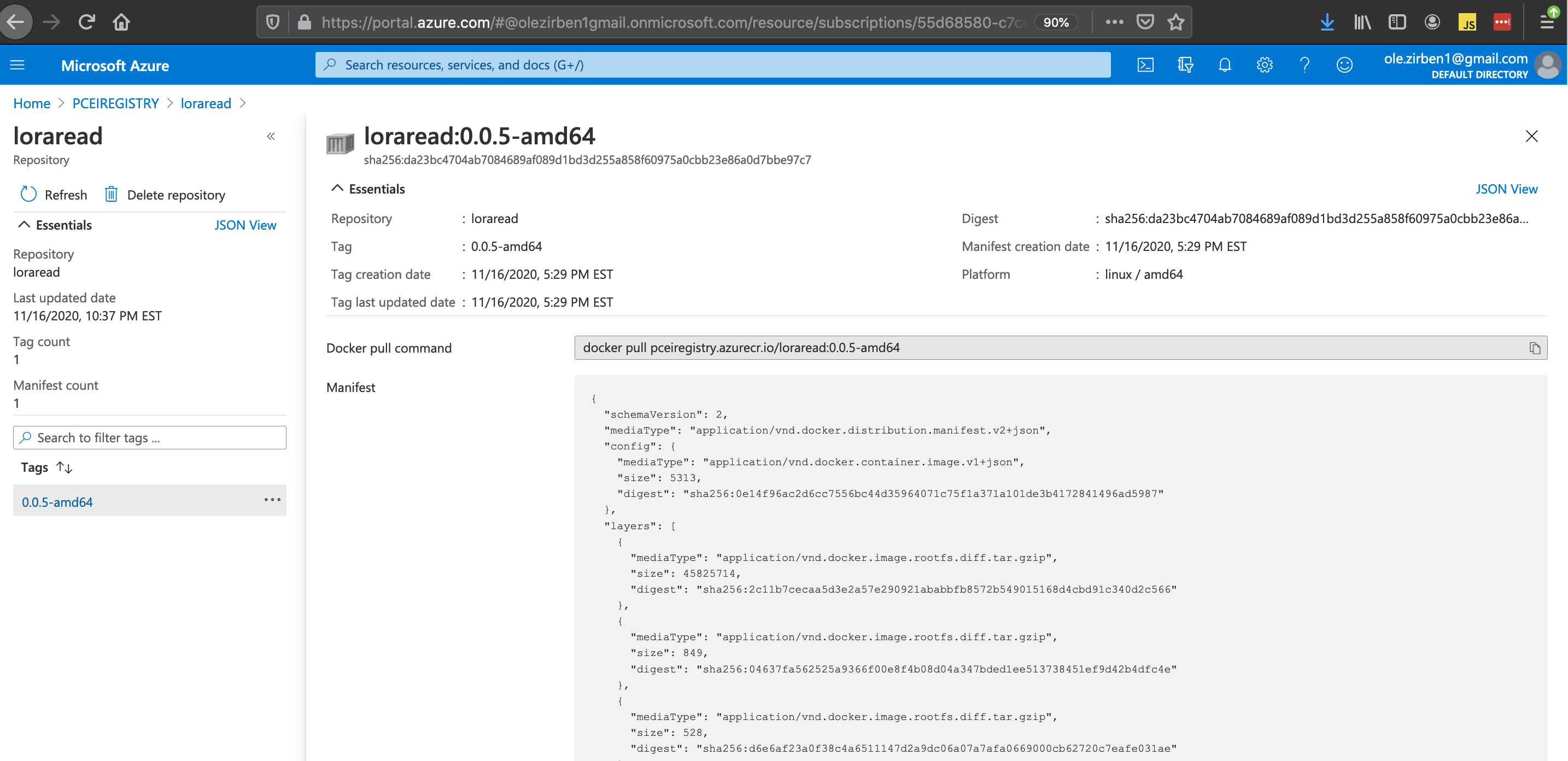

- Provision Azure Container Registry.

- Enable custom software module for Azure IoT Hub (optional). This step is used to show end-to-end operation of Azure IoT Edge with a simulated LPWA IoT device.

- Install Visual Studio Code IDE.

- Add LoRaEdgeSolution code.

- Build and push the LoRaRead custom module to Azure Container Registry.

- Package Azure IoT Edge cloud native application.

- Download Helm charts.

- Modify values.yaml with IoT Edge authentication parameters.

- Package Helm charts into a tar file.

- Define Azure IoT Edge Service and PCE App in EMCO.

- Deploy Azure IoT Edge onto Edge K8S Cluster using EMCO.

- Verify IoT end-to-end IoT operation.

- Connect LPWA IoT device and pass encoded IoT messages to Azure IoT Edge.

- Decode LPWA messages using custom LoRaRead module.

- Pass decoded messaged to Azure Cloud.

Provisioning Azure Public Cloud Core (PCC) IoT Environment

Provisioning Azure Public Cloud Core

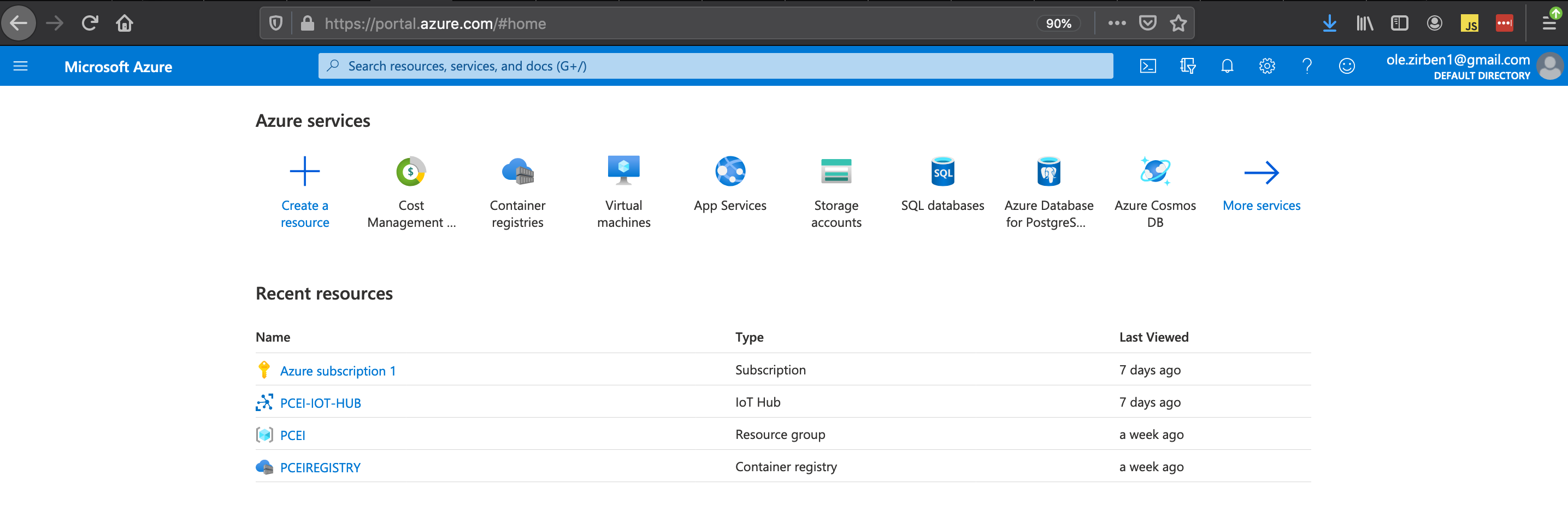

Log in to your Azure Portal and add Subscription, IoT Hub and Container Registry Resources:

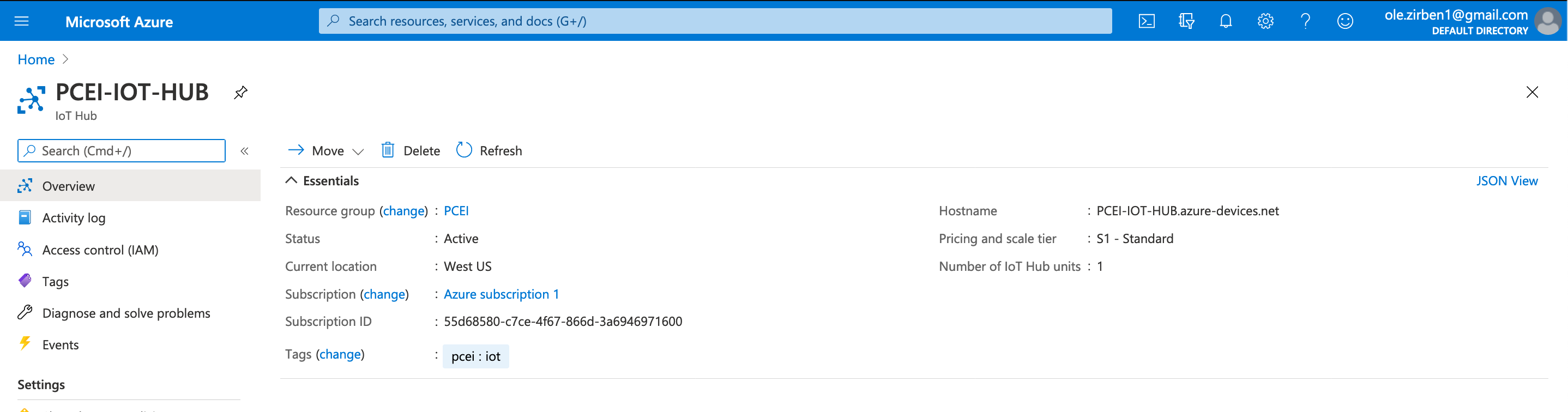

Provision IoT Hub:

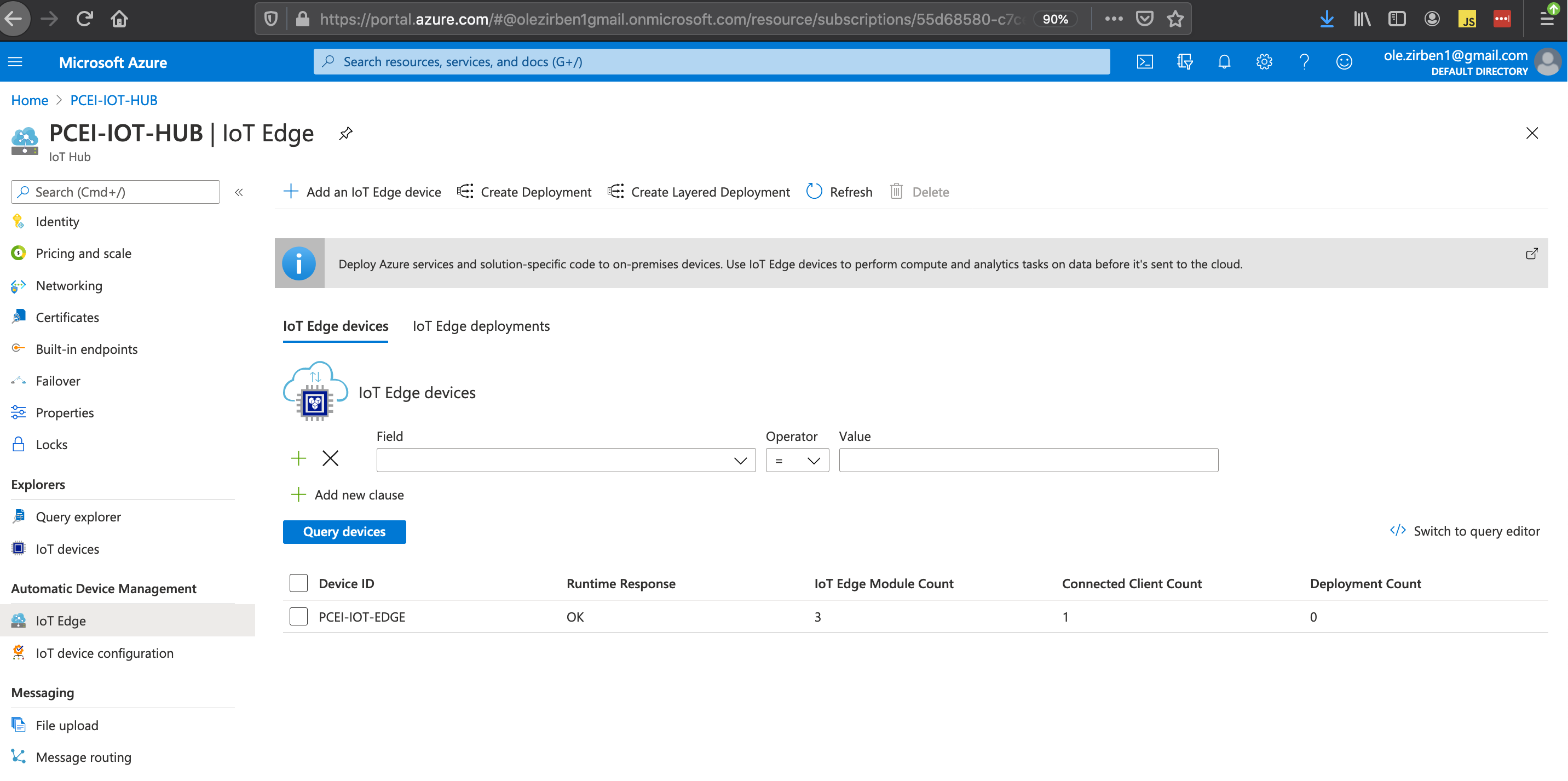

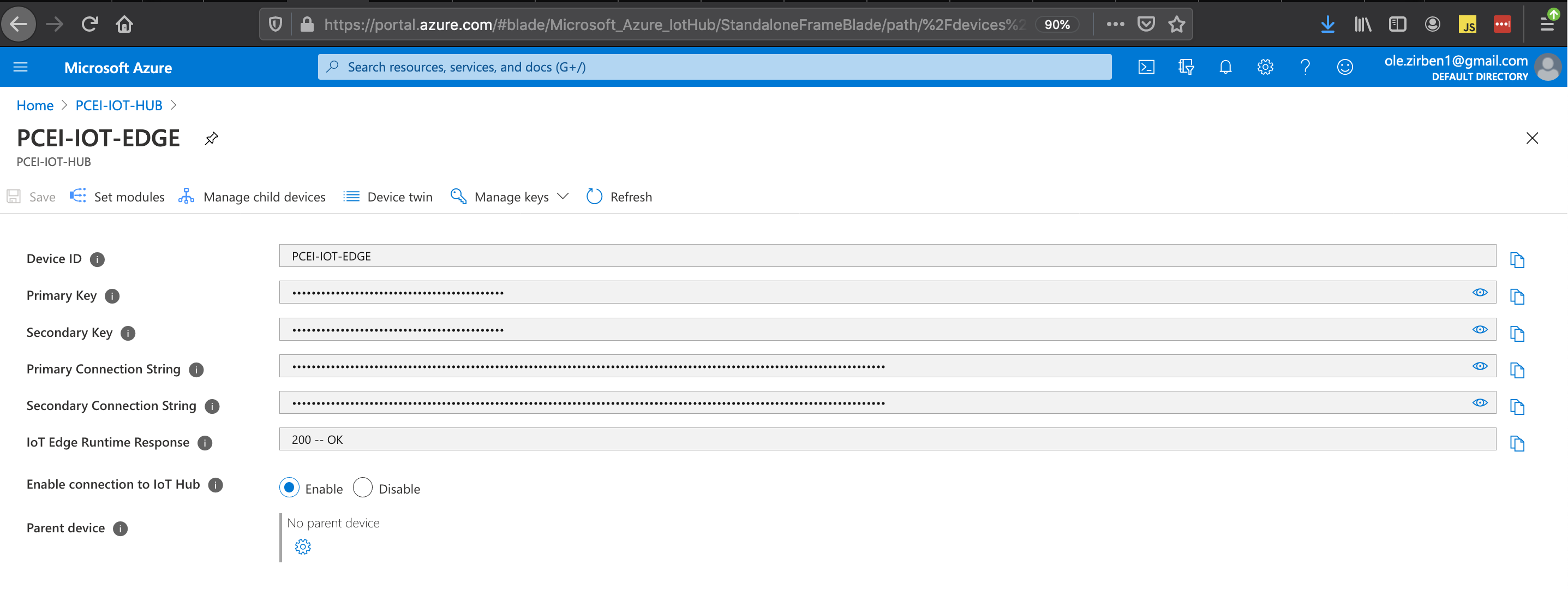

Provision IoT Edge under IoT Hub:

Copy the "Primary Connection String" from the IoT Edge parameters. This string will be used later in the values.yaml file for the Azure IoT Edge Helm Charts.

Enabling Azure IoT Edge Custom Module

Use this link for information on developing custom software modules for Azure IoT Edge:

The example below is optional. It shows how to build a custom module for Azure IoT Edge to read and decode Low Power IoT messages from a simulated LPWA IoT device. Follow the above link to:

- Install Docker.

- Download and install Visual Studio Code (VSC).

- Set up VSC with Azure IoT Tools.

- Set up Azure Container Registry in Azure Cloud.

- Create Module Project. For this step, please refer to the instructions below on downloading the LoRaEdgeSolution from the PCEI repo.

- Build and push solution to Azure Container Registry.

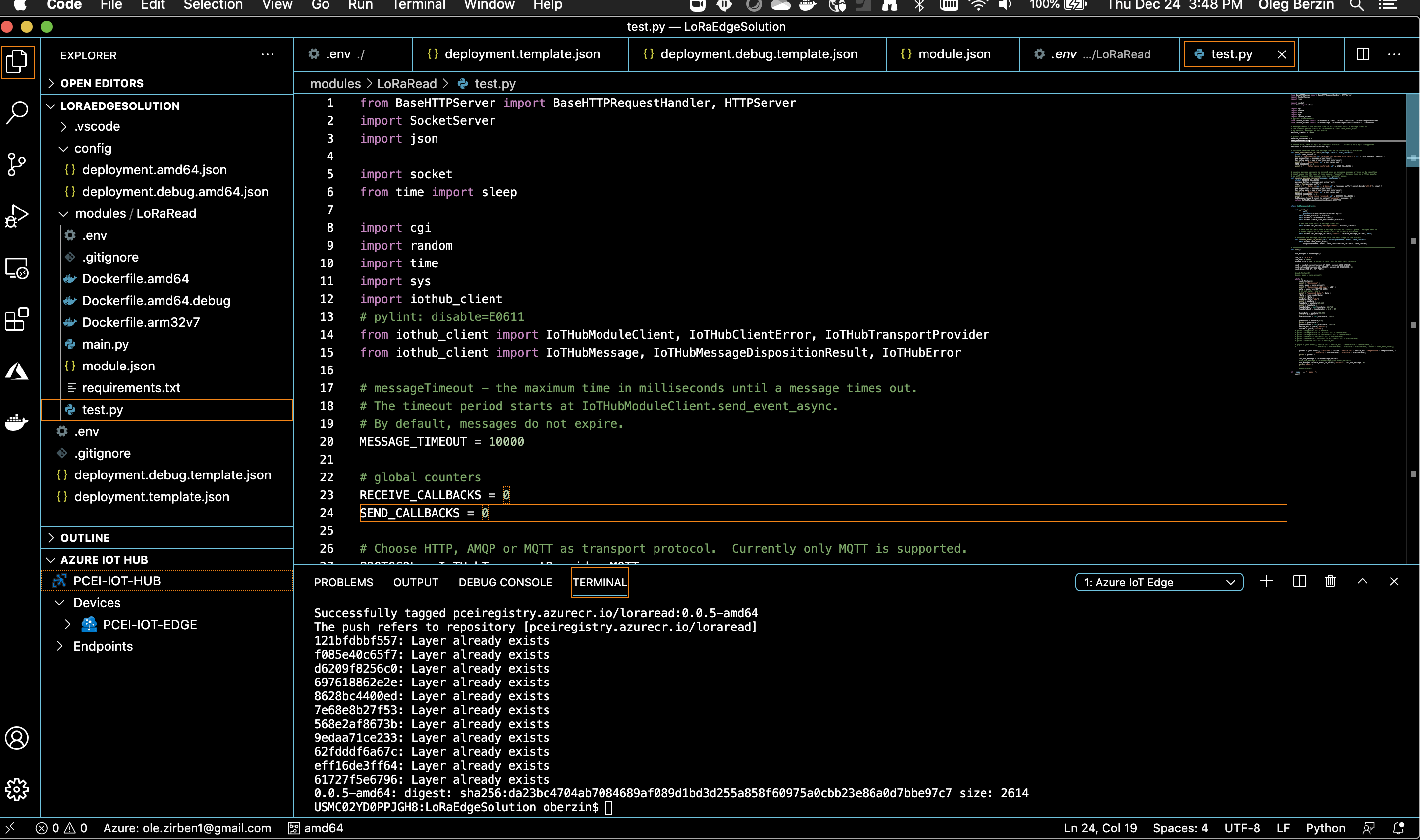

The steps below show how to build a custom IoT module for Azure IoT Edge using the "LoRaEdgeSolution" code from the PCEI repo:

Download the PCEI repo to the machine that has VSC and Docker installed (per the above instructions):

    git clone "https://gerrit.akraino.org/r/pcei"
    cd pcei
    ls -l
    total 0
    drwxr-xr-x  8 oberzin  staff  256 Dec 24 15:44 LoRaEdgeSolution
    drwxr-xr-x  3 oberzin  staff   96 Dec 24 15:44 iotclient
    drwxr-xr-x  5 oberzin  staff  160 Dec 24 15:44 locationAPI

Using VSC, open the LoRaEdgeSolution folder that was downloaded from the PCEI repo.

Add the required credentials for Azure Container Registry (ACR) using the .env file.
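
A registry login during the build and push step cannot succeed if a credential was left blank. As a hedged illustration only, the sketch below lists the variables in a `.env` file whose value is empty. The helper name `empty_env_keys` is hypothetical, and the check makes no assumption about which variable names the LoRaEdgeSolution template expects.

```shell
#!/bin/sh
# Print the name of every KEY=value entry in a .env file whose value is empty.
# Comment lines and lines that are not KEY=value are ignored.
empty_env_keys() {
  awk -F= '/^[A-Za-z_][A-Za-z0-9_]*=/ {
    value = $0
    sub(/^[^=]*=/, "", value)   # keep everything after the first "="
    if (value == "") print $1
  }' "$1"
}
```

Running `empty_env_keys .env` inside the LoRaEdgeSolution folder should print nothing once all credentials have been filled in.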

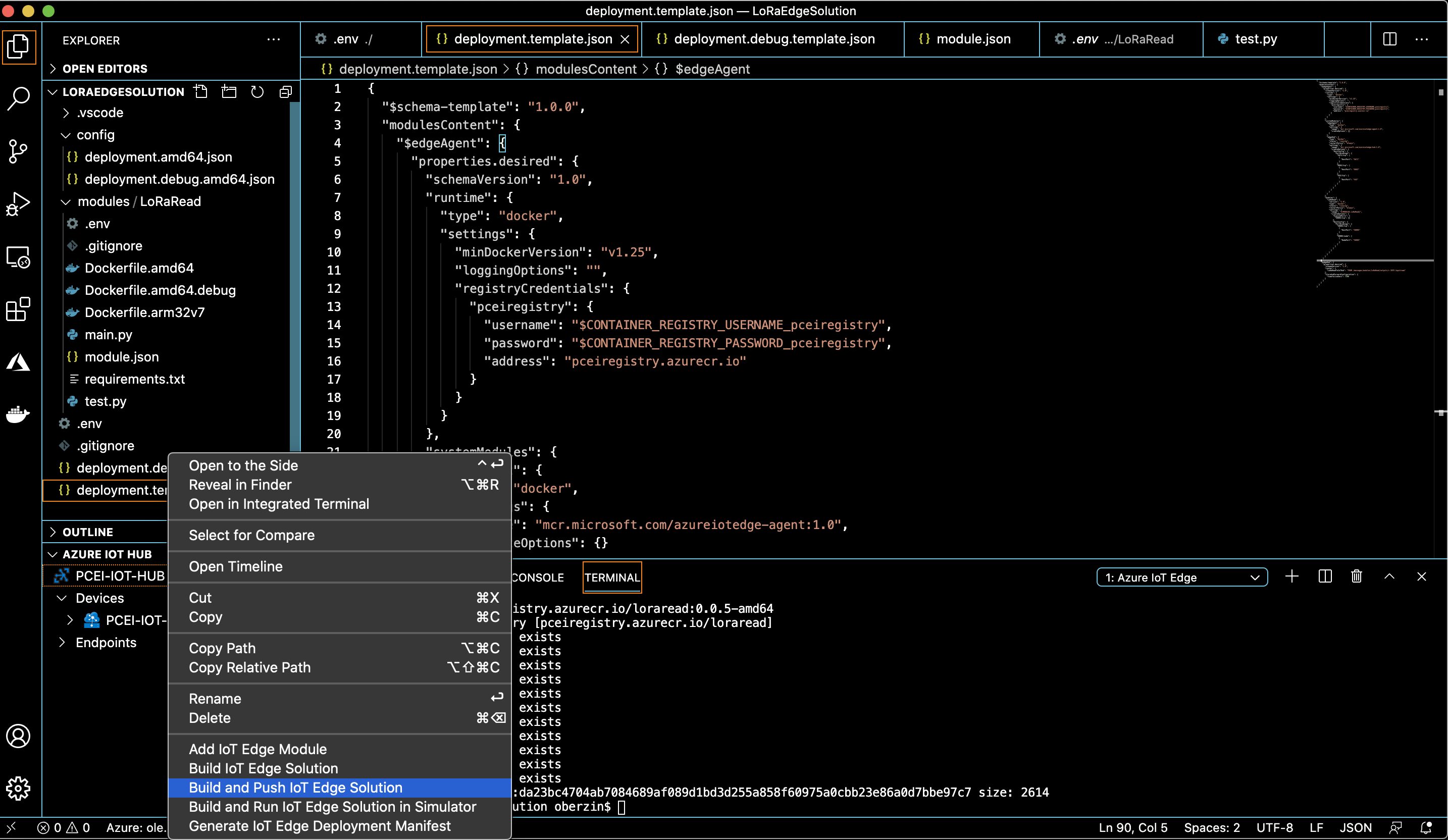

Build and push the solution to ACR as shown below. Right-click on "deployment.template.json":

The docker image for the custom module should now be visible in Azure Cloud ACR:

Packaging Azure IoT Edge Public Cloud Edge (PCE) Application

Use this link to understand the architecture of Azure IoT Edge cloud native application:

https://microsoft.github.io/iotedge-k8s-doc/architecture.html

For the purposes of this document we use the Host Server on which EMCO has been deployed and on which we run the VNC server to package Helm charts for Azure IoT Edge.

Clone Azure IoT Edge from GitHub:

    git clone https://github.com/Azure/iotedge
    cd iotedge/kubernetes/charts/
    mkdir azureiotedge1
    cp -a edge-kubernetes/. azureiotedge1/
    cd azureiotedge1
    ls -al
    total 24
    drwxrwxr-x. 3 onaplab onaplab    79 Dec 24 13:14 .
    drwxrwxr-x. 5 onaplab onaplab    77 Dec 24 13:14 ..
    -rw-rw-r--. 1 onaplab onaplab   137 Dec 24 13:02 Chart.yaml
    -rw-rw-r--. 1 onaplab onaplab   333 Dec 24 13:02 .helmignore
    drwxrwxr-x. 2 onaplab onaplab   220 Dec 24 13:02 templates
    -rw-rw-r--. 1 onaplab onaplab 14226 Dec 24 13:02 values.yaml

Modify the values.yaml file to specify the "Primary Connection String" that Azure Cloud generated during IoT Hub/IoT Edge provisioning:

    vi values.yaml

    # Change the line below and save the file
    provisioning:
      source: "manual"
      deviceConnectionString: "PASTE PRIMARY CONNECTION STRING FROM AZURE IOT HUB / IOT EDGE SCREEN"
      #dynamicReprovisioning: false
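
The same edit can be made without an interactive editor. The following is a minimal sketch, assuming values.yaml contains an uncommented `deviceConnectionString:` line as shown above; `set_connection_string` is a hypothetical helper, not something shipped with the Azure IoT Edge charts. The connection string is passed to awk through the environment so that characters such as `+`, `/`, `=` and `;` are written unchanged.

```shell
#!/bin/sh
# Replace the value of deviceConnectionString in a values.yaml file,
# keeping the original indentation of the line.
set_connection_string() {
  values_file=$1
  CONN_STR=$2 awk '
    /^[ \t]*deviceConnectionString:/ {
      match($0, /^[ \t]*/)                 # measure leading whitespace
      printf "%sdeviceConnectionString: \"%s\"\n", substr($0, 1, RLENGTH), ENVIRON["CONN_STR"]
      next
    }
    { print }
  ' "$values_file" > "$values_file.tmp" && mv "$values_file.tmp" "$values_file"
}
```

It would be called as `set_connection_string values.yaml "$PRIMARY_CONNECTION_STRING"`, where `PRIMARY_CONNECTION_STRING` is a shell variable (an assumption of this example) holding the string copied from the Azure Portal.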

Create a tar file with Azure IoT Edge Helm Charts:

    # Make sure to change to the "charts" directory
    cd ..
    pwd
    /home/onaplab/iotedge/kubernetes/charts

    # Archive the "azureiotedge1" directory. Be sure to use the "azureiotedge1.zip" file name
    # (the file is a gzip-compressed tar archive despite the .zip extension).
    tar -czvf azureiotedge1.zip azureiotedge1/
    azureiotedge1/
    azureiotedge1/.helmignore
    azureiotedge1/Chart.yaml
    azureiotedge1/templates/
    azureiotedge1/templates/NOTES.txt
    azureiotedge1/templates/_helpers.tpl
    azureiotedge1/templates/edge-rbac.yaml
    azureiotedge1/templates/iotedged-config-secret.yaml
    azureiotedge1/templates/iotedged-deployment.yaml
    azureiotedge1/templates/iotedged-proxy-config.yaml
    azureiotedge1/templates/iotedged-pvc.yaml
    azureiotedge1/templates/iotedged-service.yaml
    azureiotedge1/values.yaml

    [onaplab@os12 charts]$ ls -al
    total 12
    drwxrwxr-x. 5 onaplab onaplab  102 Dec 24 13:23 .
    drwxrwxr-x. 4 onaplab onaplab   31 Dec 24 13:02 ..
    drwxrwxr-x. 3 onaplab onaplab   79 Dec 24 13:14 azureiotedge1
    -rw-rw-r--. 1 onaplab onaplab 8790 Dec 24 13:23 azureiotedge1.zip
    drwxrwxr-x. 3 onaplab onaplab   79 Dec 24 13:14 edge-kubernetes
    drwxrwxr-x. 3 onaplab onaplab   60 Dec 24 13:02 edge-kubernetes-crd
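
Before uploading the archive to EMCO, it may be worth confirming that it has the expected layout. The sketch below is an optional check, not part of the original procedure; `chart_archive_ok` is a hypothetical helper that lists the archive with `tar -tzf` and looks for `Chart.yaml` and `values.yaml` under the chart directory.

```shell
#!/bin/sh
# Succeed only if the gzip-compressed tar archive contains
# <chart>/Chart.yaml and <chart>/values.yaml.
chart_archive_ok() {
  archive=$1
  chart=$2
  listing=$(tar -tzf "$archive") || return 1
  printf '%s\n' "$listing" | grep -qxF "$chart/Chart.yaml" &&
    printf '%s\n' "$listing" | grep -qxF "$chart/values.yaml"
}
```

For the archive built above, the call would be `chart_archive_ok azureiotedge1.zip azureiotedge1 && echo "archive looks complete"`.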

Add Azure CRD to Edge K8S Cluster

Due to limitations in the current EMCO implementation, the following step must be performed manually:

SSH to EDGE-K8S-1 VM:

    # Determine VMs IP
    [onaplab@os12 ~]$ sudo virsh domifaddr edge_k8s-1
     Name       MAC address          Protocol     Address
    -------------------------------------------------------------------------------
     vnet1      52:54:00:19:96:72    ipv4         10.121.7.152/27

    # ssh from your laptop
    ssh -i pcei-emco onaplab@10.121.7.152

Deploy Azure CRD:

    helm install edge-crd --repo https://edgek8s.blob.core.windows.net/staging edge-kubernetes-crd
    NAME: edge-crd
    LAST DEPLOYED: Thu Dec 24 21:43:12 2020
    NAMESPACE: default
    STATUS: deployed
    REVISION: 1
    TEST SUITE: None

    kubectl get crd
    NAME                                           CREATED AT
    edgedeployments.microsoft.azure.devices.edge   2020-12-24T21:43:13Z
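
In a scripted deployment the presence of the CRD can be checked rather than read by eye. The sketch below is a hedged example: `edge_crd_present` is a hypothetical helper that reads `kubectl get crd` output on stdin and succeeds if the CRD name shown above appears in it.

```shell
#!/bin/sh
# Succeed if the Azure IoT Edge CRD is listed in `kubectl get crd`
# output supplied on stdin.
edge_crd_present() {
  grep -q '^edgedeployments\.microsoft\.azure\.devices\.edge[[:space:]]'
}
```

On the EDGE-K8S-1 VM this would be used as `kubectl get crd | edge_crd_present && echo "CRD installed"`. Alternatively, `kubectl get crd edgedeployments.microsoft.azure.devices.edge` returns a non-zero exit status when the CRD does not exist.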