This document describes the blueprint test environment for the Smart Data Transaction for CPS blueprint. The test results and logs are posted in the Akraino Nexus at the link below:

https://nexus.akraino.org/content/sites/logs/fujitsu/job/

N/A

Testing has been carried out at Fujitsu Limited labs without any Akraino Test Working Group resources.

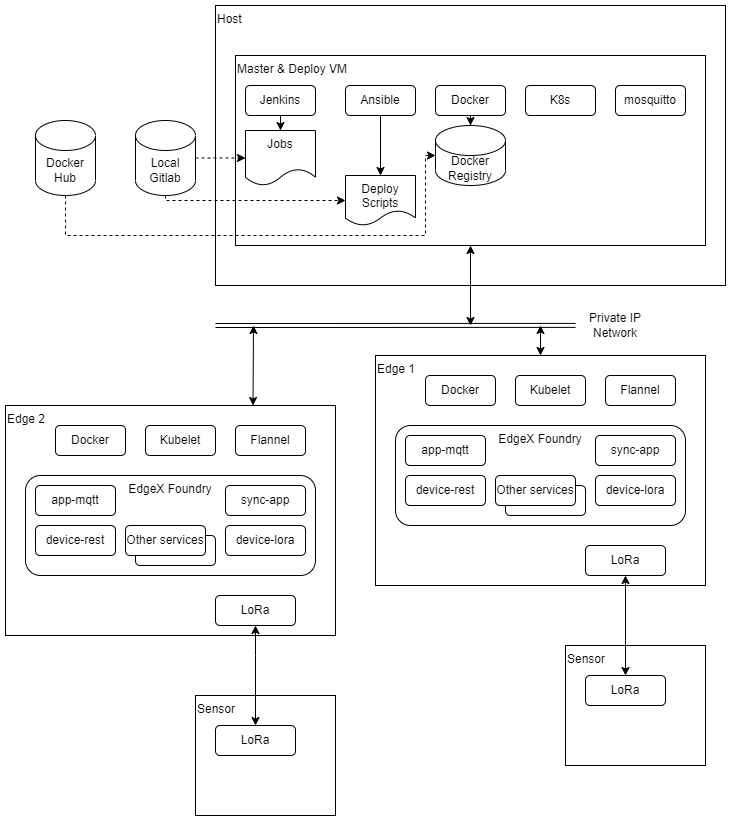

Tests are carried out on the architecture shown in the diagram below.

The test bed consists of four VMs running on x86 hardware, which fill the deploy, CI/CD, build, and master node roles; two edge nodes on ARM64 (Jetson Nano) hardware; and two sensor nodes on ARM32 (Raspberry Pi) hardware.

| Node Type | Count | Hardware | OS |

|---|---|---|---|

| Deploy, Master, CI/CD, Build | 4 | Intel i5, 2 cores VM | Ubuntu 20.04 |

| Edge | 2 | Jetson Nano, ARM Cortex-A57, 4 cores | Ubuntu 20.04 |

| Camera | 2 | H.View HV-500E6A | N/A (pre-installed) |

The Build VM is used to run the BluVal test framework components outside the system under test.

BluVal and additional tests are carried out using Robot Framework.

N/A

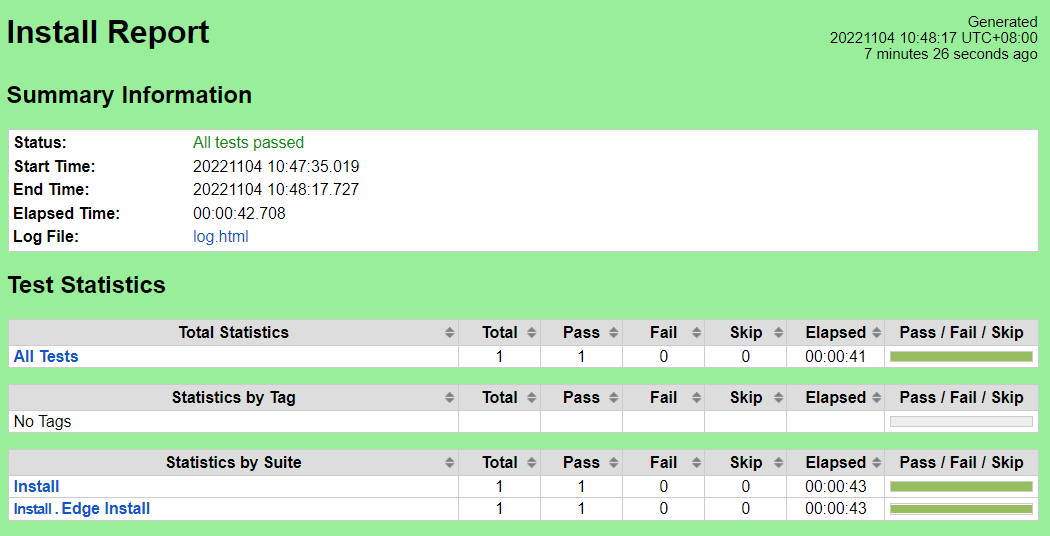

This set of test cases confirms the scripting to change the default runtime of edge nodes.

The test scripts and data are stored in the source repository's cicd/tests/sdt_step2/install/ directory.

The test bed is placed in a state where all nodes are prepared with the required software. No EdgeX or Kubernetes services are running.

Execute the test scripts:

robot cicd/tests/sdt_step2/install/

The test scripts will change the default runtime of edge nodes from runc to nvidia. The robot command should report success for all test cases.
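One way to spot-check the result on an edge node is to read the Docker daemon configuration. The following is a minimal sketch, assuming the edge nodes use Docker and that the default runtime is set through the `default-runtime` key of `/etc/docker/daemon.json`; the blueprint's own scripts may configure and verify the runtime differently. The helper name `default_runtime` is hypothetical.

```python
import json


def default_runtime(daemon_json_text: str) -> str:
    """Return the default runtime named in a Docker daemon.json document.

    Docker falls back to runc when no "default-runtime" key is present,
    so an empty or missing configuration is reported as "runc".
    """
    config = json.loads(daemon_json_text) if daemon_json_text.strip() else {}
    return config.get("default-runtime", "runc")


if __name__ == "__main__":
    # Assumed location of the Docker daemon configuration on the edge node.
    try:
        with open("/etc/docker/daemon.json", encoding="utf-8") as f:
            text = f.read()
    except FileNotFoundError:
        text = ""
    print(default_runtime(text))
```

Run on an edge node after the test, the script would be expected to print `nvidia` if the change took effect under these assumptions.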

Nexus URL:

Pass (1/1 test case)

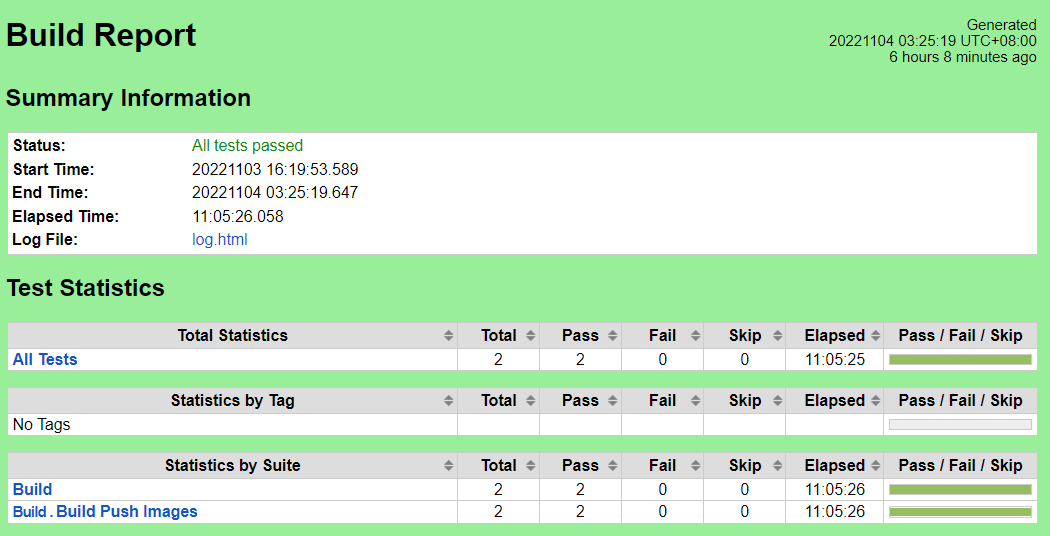

These test cases verify that the images for the EdgeX microservices can be built and pushed to the private registry.

The test scripts and data are stored in the source repository's cicd/tests/sdt_step2/build/ directory.

The test bed is placed in a state where all nodes are prepared with required software and the Docker registry is running.

Execute the test scripts:

robot cicd/tests/sdt_step2/build/

The test scripts will build images of the changed services (sync-app, image-app, device-camera) and push the images to the private registry. The robot command should report success for all test cases.

Nexus URL:

Pass (2/2 test cases)

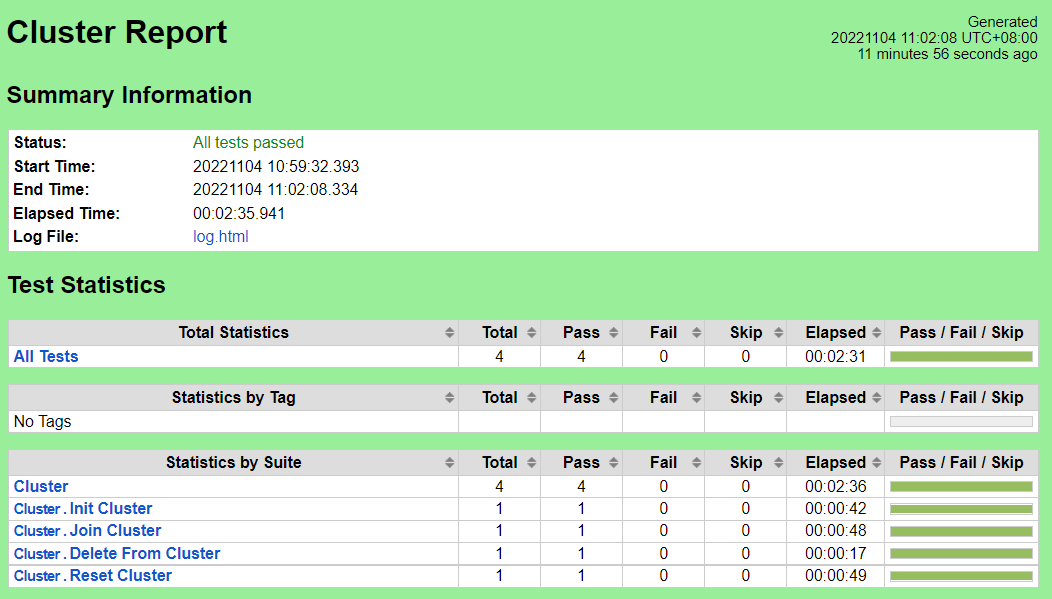

These test cases verify that the Kubernetes cluster can be initialized, edge nodes added to it and removed, and the cluster torn down.

The test scripts and data are stored in the source repository's cicd/tests/sdt_step2/cluster/ directory.

The test bed is placed in a state where all nodes are prepared with required software and the Docker registry is running. The registry must be populated with the Kubernetes and Flannel images from upstream.

Execute the test scripts:

robot cicd/tests/sdt_step2/cluster/

The test scripts will start the cluster, add all configured edge nodes, remove the edge nodes, and reset the cluster. The robot command should report success for all test cases.

Nexus URL:

Pass (4/4 test cases)

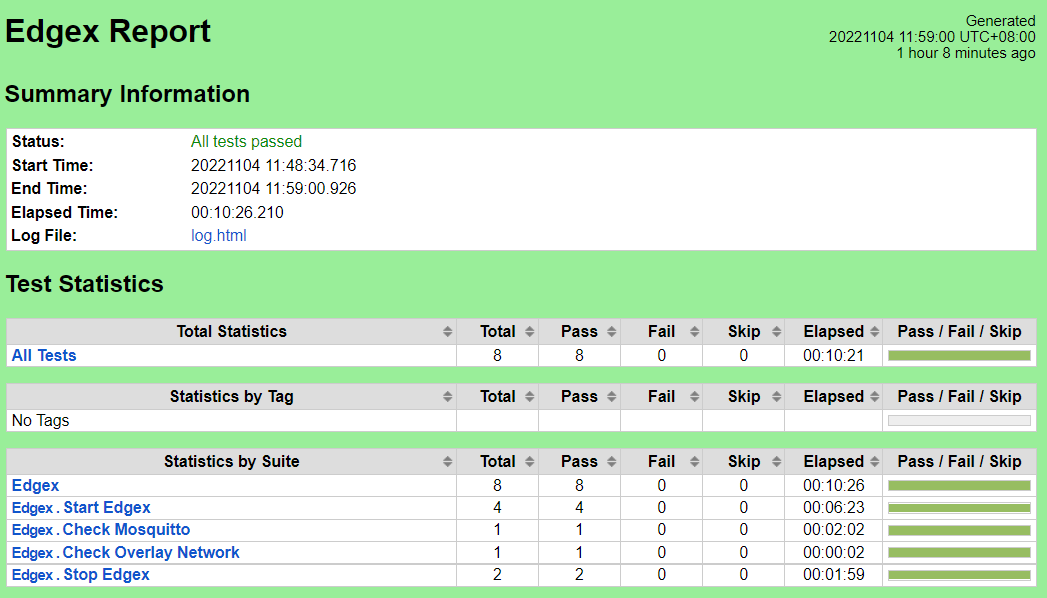

These test cases verify that the EdgeX micro-services can be started and that MQTT messages are passed to the master node from the services.

The test scripts and data are stored in the source repository's cicd/tests/sdt_step2/edgex/ directory.

The test bed is placed in a state where the cluster is initialized and all edge nodes have joined. The Docker registry and mosquitto MQTT broker must be running on the master node. The registry must be populated with all upstream images and custom images. Either the device-camera service should be enabled, or device-virtual should be enabled to provide readings.

Execute the test scripts:

robot cicd/tests/sdt_step2/edgex/

The test scripts will start the EdgeX micro-services on all edge nodes, confirm that MQTT messages are being delivered from the edge nodes, and stop the EdgeX micro-services. The robot command should report success for all test cases.

Nexus URL:

Pass (8/8 test cases)

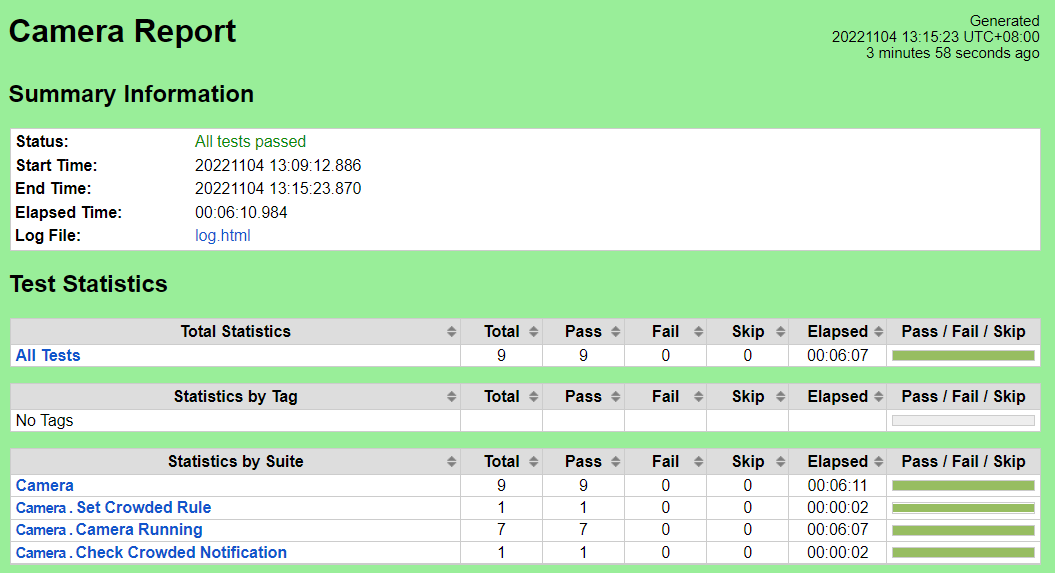

These test cases verify that the device-camera service can get an image from the IP camera, that the sync-app service can share the image with the other edge node, and that the image-app service can analyze the image.

The test steps and data are contained in the scripts in the source repository's cicd/tests/sdt_step2/camera/ directory.

The test bed is initialized to the point of having all EdgeX services running, with device-camera and image-app enabled.

Execute the test scripts:

robot cicd/tests/sdt_step2/camera/

The test cases will check whether MQTT messages containing camera images gathered from the camera nodes are arriving at the master node on the topic for each edge node.

The Robot Framework should report success for all test cases.
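The pass condition amounts to every edge node having delivered at least one message. A minimal sketch of that check follows, assuming (hypothetically) that each topic ends with the name of the edge node it belongs to; the real topic layout is defined by the test scripts and may differ. The node names and topics in the usage section are illustrative only.

```python
def nodes_without_messages(edge_nodes, received_topics):
    """Return the edge nodes for which no MQTT topic was seen, in input order.

    A topic is attributed to a node when its last path segment equals the
    node name. This topic layout is an assumption made for illustration.
    """
    seen = {topic.rstrip("/").split("/")[-1] for topic in received_topics}
    return [node for node in edge_nodes if node not in seen]


if __name__ == "__main__":
    # Hypothetical node names and topics.
    nodes = ["edge1", "edge2"]
    topics = ["images/edge1", "images/edge2"]
    missing = nodes_without_messages(nodes, topics)
    print("PASS" if not missing else f"FAIL: no messages from {missing}")
```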

Nexus URL:

Pass (9/9 test cases)

N/A

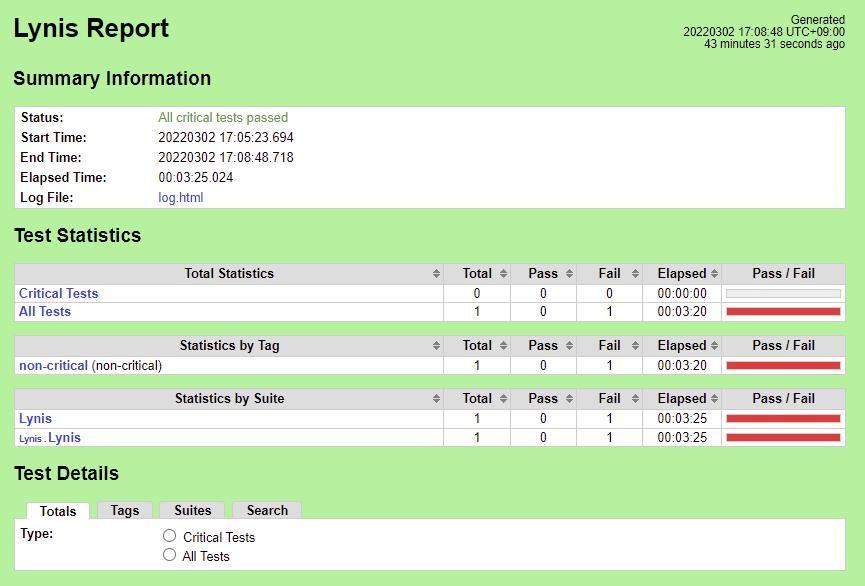

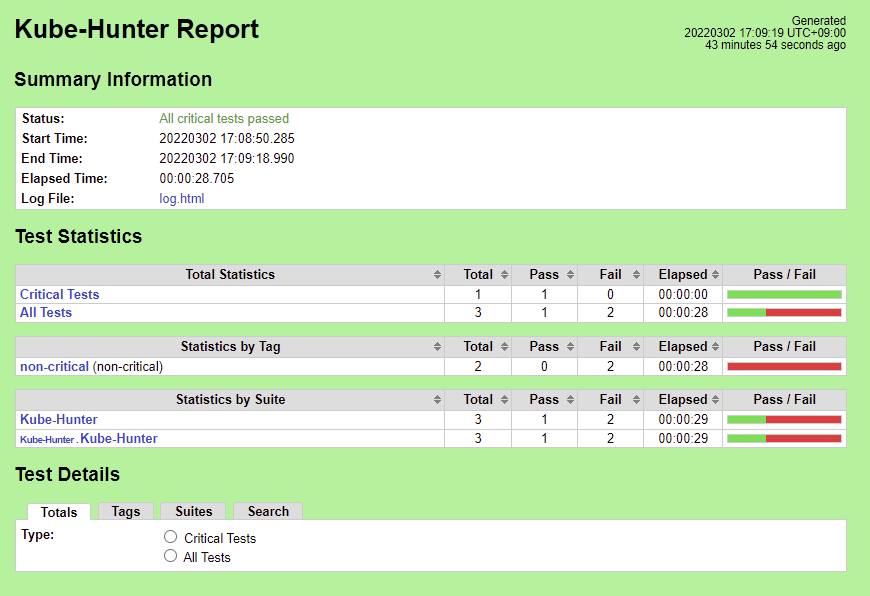

BluVal tests for Lynis, Vuls, and Kube-Hunter were executed on the test bed.

Steps To Implement Security Scan Requirements

https://vuls.io/docs/en/tutorial-docker.html

We use Ubuntu 20.04, so we ran Vuls test as follows:

Create directory

    $ mkdir ~/vuls
    $ cd ~/vuls
    $ mkdir go-cve-dictionary-log goval-dictionary-log gost-log

Fetch NVD

    $ docker run --rm -it \
        -v $PWD:/go-cve-dictionary \
        -v $PWD/go-cve-dictionary-log:/var/log/go-cve-dictionary \
        vuls/go-cve-dictionary fetch nvd

Fetch OVAL

    $ docker run --rm -it \
        -v $PWD:/goval-dictionary \
        -v $PWD/goval-dictionary-log:/var/log/goval-dictionary \
        vuls/goval-dictionary fetch ubuntu 16 17 18 19 20

Fetch gost

    $ docker run --rm -i \
        -v $PWD:/gost \
        -v $PWD/gost-log:/var/log/gost \
        vuls/gost fetch ubuntu

Create config.toml

    [servers]

    [servers.master]
    host = "192.168.51.22"
    port = "22"
    user = "test-user"
    keyPath = "/root/.ssh/id_rsa" # path to ssh private key in docker
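If more than one server needs to be scanned, the file can be generated rather than edited by hand. The sketch below is one way to do that, using only the four keys that appear in the configuration above; the function name `render_vuls_config` and any server name other than `master` are hypothetical, and this is not part of the blueprint's tooling.

```python
def render_vuls_config(servers):
    """Render a Vuls config.toml from a mapping of server name -> settings.

    Each settings dict must provide host, port, user and keyPath, the same
    keys used in the single-server configuration.
    """
    lines = ["[servers]", ""]
    for name, settings in servers.items():
        lines.append(f"[servers.{name}]")
        for key in ("host", "port", "user", "keyPath"):
            lines.append(f'{key} = "{settings[key]}"')
        lines.append("")  # blank line between server tables
    return "\n".join(lines)


if __name__ == "__main__":
    config = render_vuls_config({
        "master": {
            "host": "192.168.51.22",
            "port": "22",
            "user": "test-user",
            "keyPath": "/root/.ssh/id_rsa",
        },
    })
    print(config)
```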

Start vuls container to run tests

    $ docker run --rm -it \
        -v ~/.ssh:/root/.ssh:ro \
        -v $PWD:/vuls \
        -v $PWD/vuls-log:/var/log/vuls \
        -v /etc/localtime:/etc/localtime:ro \
        -v /etc/timezone:/etc/timezone:ro \
        vuls/vuls scan \
        -config=./config.toml

Get the report

    $ docker run --rm -it \
        -v ~/.ssh:/root/.ssh:ro \
        -v $PWD:/vuls \
        -v $PWD/vuls-log:/var/log/vuls \
        -v /etc/localtime:/etc/localtime:ro \
        vuls/vuls report \
        -format-list \
        -config=./config.toml

Create ~/validation/bluval/bluval-sdtfc.yaml to customize the Test

    blueprint:
        name: sdtfc
        layers:
            - os
            - k8s

        os: &os
            -
                name: lynis
                what: lynis
                optional: "False"

        k8s: &k8s
            -
                name: kube-hunter
                what: kube-hunter
                optional: "False"

Update ~/validation/bluval/volumes.yaml file

    volumes:
        # location of the ssh key to access the cluster
        ssh_key_dir:
            local: '/home/ubuntu/.ssh'
            target: '/root/.ssh'
        # location of the k8s access files (config file, certificates, keys)
        kube_config_dir:
            local: '/home/ubuntu/kube'
            target: '/root/.kube/'
        # location of the customized variables.yaml
        custom_variables_file:
            local: '/home/ubuntu/validation/tests/variables.yaml'
            target: '/opt/akraino/validation/tests/variables.yaml'
        # location of the bluval-<blueprint>.yaml file
        blueprint_dir:
            local: '/home/ubuntu/validation/bluval'
            target: '/opt/akraino/validation/bluval'
        # location on where to store the results on the local jumpserver
        results_dir:
            local: '/home/ubuntu/results'
            target: '/opt/akraino/results'
        # location on where to store openrc file
        openrc:
            local: ''
            target: '/root/openrc'

    # parameters that will be passed to the container at each layer
    layers:
        # volumes mounted at all layers; volumes specific for a different layer are below
        common:
            - custom_variables_file
            - blueprint_dir
            - results_dir
        hardware:
            - ssh_key_dir
        os:
            - ssh_key_dir
        networking:
            - ssh_key_dir
        docker:
            - ssh_key_dir
        k8s:
            - ssh_key_dir
            - kube_config_dir
        k8s_networking:
            - ssh_key_dir
            - kube_config_dir
        openstack:
            - openrc
        sds:
        sdn:
        vim:

Update ~/validation/tests/variables.yaml file

    ### Input variables cluster's master host
    host: <IP Address>              # cluster's master host address
    username: <username>            # login name to connect to cluster
    password: <password>            # login password to connect to cluster
    ssh_keyfile: /root/.ssh/id_rsa  # Identity file for authentication

Run Blucon

    $ bash validation/bluval/blucon.sh sdtfc

BluVal tests should report success for all test cases.

Vuls results (manual) Nexus URL: https://nexus.akraino.org/content/sites/logs/fujitsu/job/sdt-vuls/2/

Lynis results (manual) Nexus URL: https://nexus.akraino.org/content/sites/logs/fujitsu/job/sdt-lynis/3/

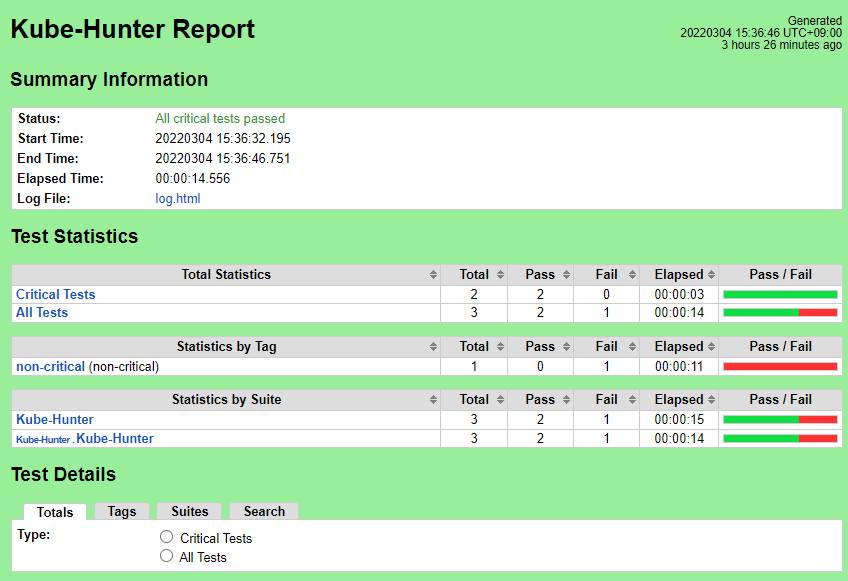

Kube-Hunter results Nexus URL: https://nexus.akraino.org/content/sites/logs/fujitsu/job/sdt-bluval/2/

Nexus URL: https://nexus.akraino.org/content/sites/logs/fujitsu/job/sdt-vuls/2/

There are 17 CVEs with a CVSS score >= 9.0. Exceptions for these have been requested here:

Release 5: Akraino CVE Vulnerability Exception Request

| CVE-ID | CVSS | NVD | Fix/Notes |

|---|---|---|---|

| CVE-2016-1585 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2016-1585 | No fix available |

| CVE-2021-20236 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-20236 | No fix available (latest release of ZeroMQ for Ubuntu 20.04 is 4.3.2-2ubuntu1) |

| CVE-2021-31870 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-31870 | No fix available (latest release of klibc for Ubuntu 20.04 is 2.0.7-1ubuntu5) |

| CVE-2021-31872 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-31872 | No fix available (latest release of klibc for Ubuntu 20.04 is 2.0.7-1ubuntu5) |

| CVE-2021-31873 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-31873 | No fix available (latest release of klibc for Ubuntu 20.04 is 2.0.7-1ubuntu5) |

| CVE-2021-33574 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-33574 | Will not be fixed in Ubuntu stable releases |

| CVE-2021-45951 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-45951 | No fix available (vendor disputed) |

| CVE-2021-45952 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-45952 | No fix available (vendor disputed) |

| CVE-2021-45953 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-45953 | No fix available (vendor disputed) |

| CVE-2021-45954 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-45954 | No fix available (vendor disputed) |

| CVE-2021-45955 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-45955 | No fix available (vendor disputed) |

| CVE-2021-45956 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-45956 | No fix available (vendor disputed) |

| CVE-2021-45957 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2021-45957 | No fix available (vendor disputed) |

| CVE-2022-23218 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2022-23218 | Reported fixed in 2.31-0ubuntu9.7 (installed), but still reported by Vuls |

| CVE-2022-23219 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2022-23219 | Reported fixed in 2.31-0ubuntu9.7 (installed), but still reported by Vuls |

| CVE-2016-9180 | 9.1 | https://nvd.nist.gov/vuln/detail/CVE-2016-9180 | No fix available |

| CVE-2021-35942 | 9.1 | https://nvd.nist.gov/vuln/detail/CVE-2021-35942 | Reported fixed in 2.31-0ubuntu9.7 (installed), but still reported by Vuls |
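The count of entries at or above the 9.0 threshold can be reproduced mechanically from a table in this layout. The following is a minimal sketch, assuming the table is available as Markdown text with the CVE ID in the first column and the CVSS score in the second; the function name `critical_cves` is hypothetical and the sketch does not read Vuls output directly.

```python
def critical_cves(table_text, threshold=9.0):
    """Return (cve_id, cvss) pairs from a Markdown table whose CVSS >= threshold."""
    results = []
    for line in table_text.splitlines():
        cells = [cell.strip() for cell in line.strip().strip("|").split("|")]
        if len(cells) < 2 or not cells[0].startswith("CVE-"):
            continue  # skip separator and blank lines
        try:
            score = float(cells[1])
        except ValueError:
            continue  # skip the header row and rows without a numeric score
        if score >= threshold:
            results.append((cells[0], score))
    return results


if __name__ == "__main__":
    sample = """
| CVE-ID | CVSS | NVD | Fix/Notes |
|---|---|---|---|
| CVE-2016-1585 | 9.8 | https://nvd.nist.gov/vuln/detail/CVE-2016-1585 | No fix available |
| CVE-2016-9180 | 9.1 | https://nvd.nist.gov/vuln/detail/CVE-2016-9180 | No fix available |
"""
    for cve_id, score in critical_cves(sample):
        print(cve_id, score)
```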

Nexus URL (run via Bluval, without fixes): https://nexus.akraino.org/content/sites/logs/fujitsu/job/sdt-bluval/2/

Nexus URL (manual run, with fixes): https://nexus.akraino.org/content/sites/logs/fujitsu/job/sdt-lynis/2/

The initial results compare with the Lynis Incubation: PASS/FAIL Criteria, v1.0 as follows.

2022-03-04 15:33:28 Test: Checking for program update...

2022-03-04 15:33:31 Current installed version : 301

2022-03-04 15:33:31 Latest stable version : 307

2022-03-04 15:33:31 Minimum required version : 297

2022-03-04 15:33:31 Result: newer Lynis release available!

2022-03-04 15:33:31 Suggestion: Version of Lynis outdated, consider upgrading to the latest version [test:LYNIS] [details:-] [solution:-]

Fix: Download and run the latest Lynis directly on SUT.

Steps To Implement Security Scan Requirements#InstallandExecute

| No. | Test | Result | Fix |

|---|---|---|---|

| 1 | Test: Checking PASS_MAX_DAYS option in /etc/login.defs | Result: password minimum age is not configured Suggestion: Configure minimum password age in /etc/login.defs [test:AUTH-9286] | Set PASS_MAX_DAYS 180 in /etc/login.defs |

| 2 | Performing test ID AUTH-9328 (Default umask values) | Result: found umask 022, which could be improved Suggestion: Default umask in /etc/login.defs could be more strict like 027 [test:AUTH-9328] | Set UMASK 027 in /etc/login.defs |

| 3 | Performing test ID SSH-7440 (Check OpenSSH option: AllowUsers and AllowGroups) | Result: SSH has no specific user or group limitation. Most likely all valid users can SSH to this machine. Hardening: assigned partial number of hardening points (0 of 1). | Configure AllowUsers in /etc/ssh/sshd_config |

| 4 | Test: checking for file /etc/network/if-up.d/ntpdate | Test: checking for file /etc/network/if-up.d/ntpdate Result: file /etc/network/if-up.d/ntpdate does not exist ... Hardening: assigned maximum number of hardening points for this item (3). | OK |

| 5 | Performing test ID KRNL-6000 (Check sysctl key pairs in scan profile) : Following sub-tests required | N/A | N/A |

| 5a | sysctl key fs.suid_dumpable contains equal expected and current value (0) | Result: sysctl key fs.suid_dumpable has a different value than expected in scan profile. Expected=0, Real=2 | Set recommended value in /etc/sysctl.d/90-lynis-hardening.conf and disable apport in /etc/default/apport |

| 5b | sysctl key kernel.dmesg_restrict contains equal expected and current value (1) | Result: sysctl key kernel.dmesg_restrict has a different value than expected in scan profile. Expected=1, Real=0 | Set recommended value in /etc/sysctl.d/90-lynis-hardening.conf |

| 5c | sysctl key net.ipv4.conf.default.accept_source_route contains equal expected and current value (0) | Result: sysctl key net.ipv4.conf.default.accept_source_route has a different value than expected in scan profile. Expected=0, Real=1 | Set recommended value in /etc/sysctl.d/90-lynis-hardening.conf |

| 6 | Test: Check if one or more compilers can be found on the system | Result: found installed compiler. See top of logfile which compilers have been found or use /usr/bin/grep to filter on 'compiler' Hardening: assigned partial number of hardening points (1 of 3). | Uninstall gcc and remove /usr/bin/as (installed with binutils) |
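The three sysctl sub-tests (5a to 5c) can be pre-checked before re-running Lynis. The sketch below compares `key = value` text against the expected values listed in the table; it assumes the input is either the contents of a sysctl configuration file or the output of `sysctl -a`, and the function names are hypothetical. It does not replace the Lynis scan itself.

```python
# Expected values taken from the Lynis scan profile results (items 5a-5c).
EXPECTED = {
    "fs.suid_dumpable": "0",
    "kernel.dmesg_restrict": "1",
    "net.ipv4.conf.default.accept_source_route": "0",
}


def parse_sysctl(text):
    """Parse `key = value` lines (sysctl.conf or `sysctl -a` style) into a dict."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith(("#", ";")) or "=" not in line:
            continue  # skip blanks, comments, and malformed lines
        key, value = line.split("=", 1)
        values[key.strip()] = value.strip()
    return values


def sysctl_mismatches(text, expected=EXPECTED):
    """Return {key: (expected, actual)} for differing keys; actual is None if absent."""
    actual = parse_sysctl(text)
    return {
        key: (want, actual.get(key))
        for key, want in expected.items()
        if actual.get(key) != want
    }


if __name__ == "__main__":
    import subprocess

    # Reads the live kernel settings; requires the sysctl command on the SUT.
    output = subprocess.run(
        ["sysctl", "-a"], capture_output=True, text=True, check=False
    ).stdout
    print(sysctl_mismatches(output) or "all expected values are set")
```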

Results after the above fixes are as follows:

2022-03-07 15:19:07 Test: Checking for program update...

2022-03-07 15:19:10 Current installed version : 308

2022-03-07 15:19:10 Latest stable version : 307

2022-03-07 15:19:10 No Lynis update available.

| No. | Test | Result |

|---|---|---|

| 1 | Test: Checking PASS_MAX_DAYS option in /etc/login.defs | Result: max password age is 180 days |

| 2 | Performing test ID AUTH-9328 (Default umask values) | Result: umask is 027, which is fine |

| 3 | Performing test ID SSH-7440 (Check OpenSSH option: AllowUsers and AllowGroups) | Result: SSH is limited to a specific set of users, which is good |

| 5a | sysctl key fs.suid_dumpable contains equal expected and current value (0) | Result: sysctl key fs.suid_dumpable contains equal expected and current value (0) Hardening: assigned maximum number of hardening points for this item (1). |

| 5b | sysctl key kernel.dmesg_restrict contains equal expected and current value (1) | Result: sysctl key kernel.dmesg_restrict contains equal expected and current value (1) Hardening: assigned maximum number of hardening points for this item (1). |

| 5c | sysctl key net.ipv4.conf.default.accept_source_route contains equal expected and current value (0) | Result: sysctl key net.ipv4.conf.default.accept_source_route contains equal expected and current value (0) Hardening: assigned maximum number of hardening points for this item (1). |

| 6 | Test: Check if one or more compilers can be found on the system | Result: no compilers found |

The post-fix manual logs can be found at https://nexus.akraino.org/content/sites/logs/fujitsu/job/sdt-lynis/3/.

Nexus URL (initial run without fixes): https://nexus.akraino.org/content/sites/logs/fujitsu/job/sdt-bluval/1/

Nexus URL (with fixes): https://nexus.akraino.org/content/sites/logs/fujitsu/job/sdt-bluval/2/

There are 5 vulnerabilities.

Fix for KHV002

    $ kubectl replace -f - <<EOF
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      annotations:
        rbac.authorization.kubernetes.io/autoupdate: "false"
      labels:
        kubernetes.io/bootstrapping: rbac-defaults
      name: system:public-info-viewer
    rules:
    - nonResourceURLs:
      - /healthz
      - /livez
      - /readyz
      verbs:
      - get
    EOF

Fix for KHV005, KHV050, Access to pod's secrets

    $ kubectl replace -f - <<EOF
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: default
      namespace: default
    automountServiceAccountToken: false
    EOF

The above fixes are implemented in the Ansible playbook deploy/playbook/init_cluster.yml and configuration file deploy/playbook/k8s/fix.yml

Fix for CAP_NET_RAW Enabled:

Create a PodSecurityPolicy with requiredDropCapabilities: NET_RAW. The policy is shown below. The complete fix is implemented in the Ansible playbook deploy/playbook/init_cluster.yml and configuration files deploy/playbook/k8s/default-psp.yml and deploy/playbook/k8s/system-psp.yml, plus enabling PodSecurityPolicy checking in deploy/playbook/k8s/config.yml.

    apiVersion: policy/v1beta1

Results after fixes are shown below:

Note that although all Kube-Hunter vulnerabilities have been fixed, the results still show one test failure. The "Inside-a-Pod Scanning" test case reports failure, apparently because the log ends with "Kube Hunter couldn't find any clusters" instead of "No vulnerabilities were found." Because this test case detected and reported vulnerabilities earlier, and those vulnerabilities are no longer reported, we believe this failure is spurious and may be caused by this issue: https://github.com/aquasecurity/kube-hunter/issues/358
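To tell the two log endings apart when reviewing results, a small classifier can be applied to the saved log. This is a minimal sketch that relies only on the two messages quoted above; the function name and the returned labels are hypothetical, and real Kube-Hunter logs may contain other endings that fall into the `other` bucket.

```python
def classify_kube_hunter_log(log_text):
    """Classify a Kube-Hunter log by its last non-empty line.

    Returns "clean" when no vulnerabilities were found, "no-cluster-found"
    when the scan could not locate a cluster, and "other" otherwise.
    """
    lines = [line.strip() for line in log_text.splitlines() if line.strip()]
    last = lines[-1] if lines else ""
    if "No vulnerabilities were found" in last:
        return "clean"
    if "Kube Hunter couldn't find any clusters" in last:
        return "no-cluster-found"
    return "other"


if __name__ == "__main__":
    example = "Started hunting\nKube Hunter couldn't find any clusters\n"
    print(classify_kube_hunter_log(example))
```

A `no-cluster-found` result means the scan did not run against the cluster at all, so it should be reviewed manually rather than read as either a pass or a genuine vulnerability report.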

Single-pane view of the test score for the blueprint.

| Total Tests | Test Executed | Pass | Fail | In Progress |

|---|---|---|---|---|

| 26 | 26 | 24 | 2 | 0 |

*Vuls is counted as one test case.

*One Kube-Hunter failure is counted as a pass. See above.

The Vuls and Lynis test cases are failing. An exception request has been filed for the Vuls-detected vulnerabilities that cannot be fixed. The Lynis results have been confirmed to pass the Incubation criteria.

None at this time.

None at this time.