Introduction

Installing a new RC on a VM from the Build Server is a subset of the process of installing a new RC on a bare metal server. The major difference is that the Build Server does not configure the target RC server's BIOS or install the Linux operating system; it installs only the Network Cloud Regional Controller software.

This installation procedure creates a new Regional Controller on a pre-prepared VM. The VM which will become the RC is termed the 'Target RC' or just 'Target VM' in this guide.

Unlike an RC built by the Build Server on a bare metal server, this installation is performed directly on a pre-prepared Ubuntu 16.04 VM and installs only the Network Cloud specific and other software packages on the Target VM to create a new Regional Controller. Once the RC is built, it is used to deploy either Rover or Unicycle pods.

The installation procedure is executed directly on the Target VM and automatically installs the following on it:

- Network Cloud Regional Controller specific software, including:

  - PostgreSQL DB

  - Camunda Workflow and Decision Engine

  - Akraino Web Portal

  - LDAP configuration

- A number of supporting software components, including:

  - OpenStack Tempest tests

  - YAML builds

  - ONAP scripts

  - Sample VNFs

Preflight requirements

Networking

During the later stages of the installation, the Target Server's 'host' interface must have connectivity to the internet in order to download the necessary repos and packages.

Software

When the RC is installed on a VM, an Ubuntu 16.04 Linux operating system must be installed and updated before the RC can be built.
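The operating system requirement can be verified before starting. The following is a minimal sketch, assuming the VM provides the standard /etc/os-release file; the function name check_ubuntu_1604 is illustrative and is not part of the Akraino artifacts.

```shell
# Hypothetical preflight helper, not part of the Akraino tooling.
# Returns 0 if the given os-release file describes Ubuntu 16.04,
# 2 if the file cannot be read, and non-zero otherwise.
check_ubuntu_1604() {
    local os_release="${1:-/etc/os-release}"
    [ -r "$os_release" ] || return 2
    # Source the file in a subshell so ID and VERSION_ID do not leak
    # into the calling shell.
    (
        . "$os_release"
        [ "$ID" = "ubuntu" ] && [ "$VERSION_ID" = "16.04" ]
    )
}

if check_ubuntu_1604; then
    echo "OK: Ubuntu 16.04 detected"
else
    echo "WARNING: this VM does not appear to be running Ubuntu 16.04"
fi
```

The check only inspects the release identifier; it does not confirm that the system packages have been updated.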

Preflight checks

None

Preflight RC Region Specific Input Data

None

Deploying the RC

RC Specific Software Installation

If you haven't done so already, elevate yourself to root:

user@regional_controller_vm:/# sudo -i

Download the Akraino Regional Controller artifacts:

## Download the latest Regional_controller artifacts from LF Nexus ##
root@regional_controller_vm:/# mkdir -p /opt/akraino/region
root@regional_controller_vm:/# NEXUS_URL=https://nexus.akraino.org
root@regional_controller_vm:/# curl -L "$NEXUS_URL/service/local/artifact/maven/redirect?r=snapshots&g=org.akraino.regional_controller&a=regional_controller&v=0.0.2-SNAPSHOT&e=tgz" | tar -xozv -C /opt/akraino/region
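Piping curl straight into tar means a failed HTTP request is only noticed when tar fails to read the stream. As an alternative, the sketch below downloads to a temporary file first and extracts only if the request succeeded. The helper names nexus_artifact_url and fetch_regional_controller are hypothetical; the URL layout is taken from the command above.

```shell
# Hypothetical helper: builds the Nexus redirect URL from its parts.
#   $1 = Nexus base URL, $2 = artifact version
nexus_artifact_url() {
    printf '%s/service/local/artifact/maven/redirect?r=snapshots&g=org.akraino.regional_controller&a=regional_controller&v=%s&e=tgz\n' "$1" "$2"
}

# Hypothetical helper: downloads the archive to a temporary file and
# extracts it only if curl reports success (-f fails on HTTP errors).
#   $1 = artifact URL, $2 = destination directory
fetch_regional_controller() {
    local url="$1" dest="$2" tmp rc
    tmp="$(mktemp)" || return 1
    if curl -fL -o "$tmp" "$url"; then
        mkdir -p "$dest" && tar -xozf "$tmp" -C "$dest"
        rc=$?
    else
        rc=1
    fi
    rm -f "$tmp"
    return "$rc"
}

# Example (run as root on the Target VM):
# fetch_regional_controller "$(nexus_artifact_url https://nexus.akraino.org 0.0.2-SNAPSHOT)" /opt/akraino/region
```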

Change to the /opt/akraino/region directory and run the start_akraino_portal.sh script:

root@regional_controller_vm:/# cd /opt/akraino/region/
root@regional_controller_vm:/# ./start_akraino_portal.sh

This will take 10 to 20 minutes.

A successful installation will end with the following message:

...
Setting up tempest content/repositories
Setting up ONAP content/repositories
Setting up sample vnf content/repositories
Setting up airshipinabottle content/repositories
Setting up redfish tools content/repositories
SUCCESS: Portal can be accessed at http://10.51.34.230:8080/AECPortalMgmt/
SUCCESS: Portal install completed
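Because the run takes a while, it can be convenient to capture the output and check for the final success line afterwards. This is a minimal sketch; the helper name portal_install_succeeded and the log path are assumptions, and the matched string is the last line of the output shown above.

```shell
# Hypothetical helper: succeeds only if the given log file contains the
# final success line printed by the installer.
portal_install_succeeded() {
    grep -q 'SUCCESS: Portal install completed' "$1"
}

# Example usage (the log path is an assumption):
# ./start_akraino_portal.sh 2>&1 | tee /tmp/portal_install.log
# portal_install_succeeded /tmp/portal_install.log && echo "install OK"
```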

Note: The IP address shown (10.51.34.230) is an example 'host' address for an RC deployed in a validation lab.

The Regional Controller Node installation is now complete.

At this point there will be one new directory, in which the NC artifacts have been placed.

root@regional_controller_vm:/# ls /opt/akraino/
region

Please note: RSA keys must be generated on the newly commissioned RC. The public key must then be copied into the 'genesis_ssh_public_key' attribute in the site input YAML file used when subsequently deploying each Unicycle pod at any edge site controlled by the newly built RC. This is covered in the Unicycle installation instructions.
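The exact procedure is given in the Unicycle installation instructions. As a rough sketch only, assuming OpenSSH's ssh-keygen is installed on the RC, a key pair could be created as follows. The helper name generate_rc_key, the 4096-bit key size, the empty passphrase and the example key path are all assumptions, not values taken from the Akraino documentation.

```shell
# Hypothetical helper: creates an RSA key pair (no passphrase) at the
# given path if one does not already exist, then prints the public key.
generate_rc_key() {
    local key_path="$1"
    if [ ! -f "$key_path" ]; then
        mkdir -p "$(dirname "$key_path")" || return 1
        ssh-keygen -q -t rsa -b 4096 -N "" -f "$key_path" || return 1
    fi
    cat "${key_path}.pub"
}

# Example (the key path is an assumption):
# generate_rc_key /root/.ssh/id_rsa
```

The printed line, which begins with ssh-rsa, is the kind of value the 'genesis_ssh_public_key' attribute refers to.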

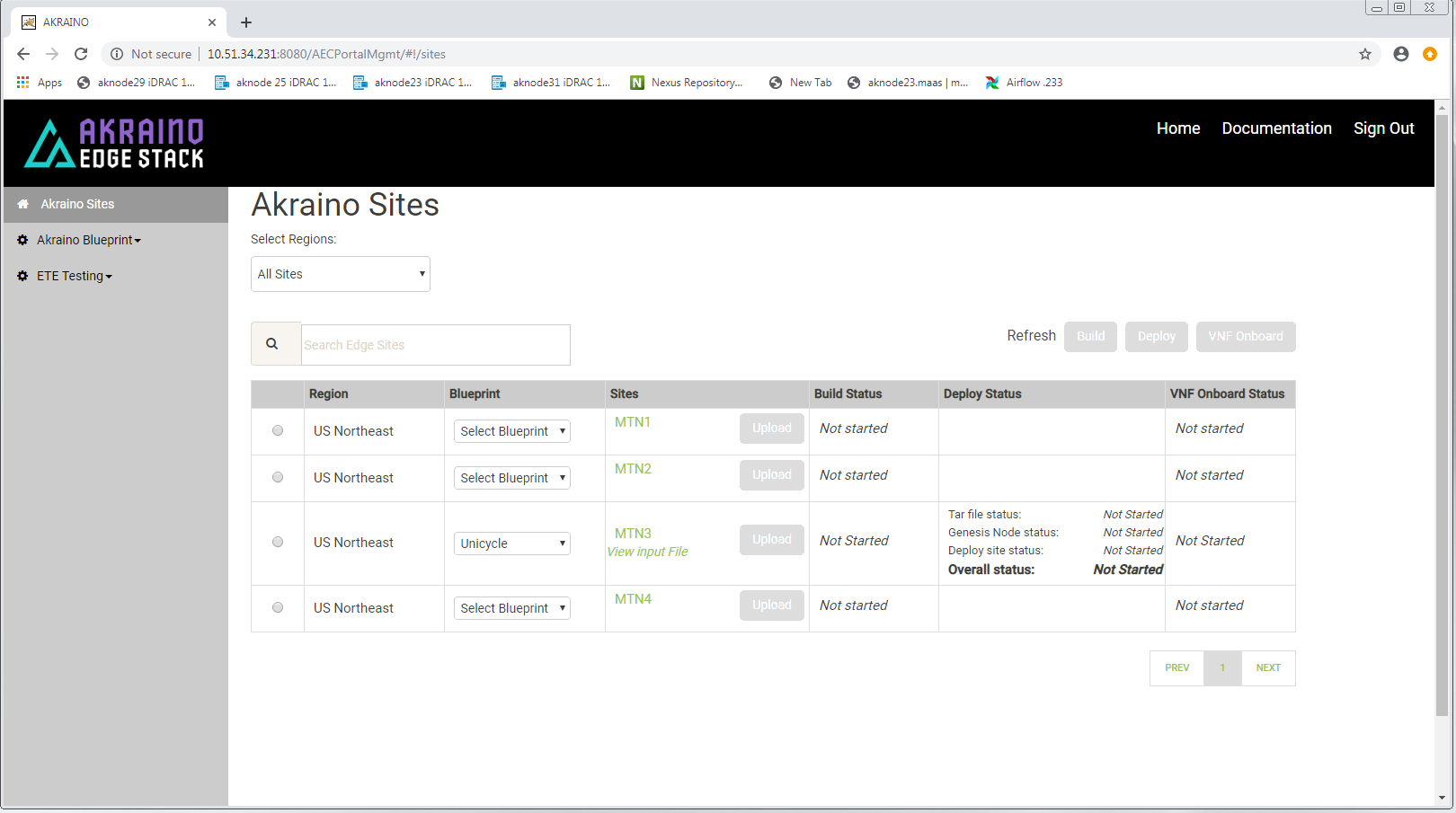

Accessing the new Regional Controller's Portal UI

During the installation a UI will have been installed on the newly deployed RC. This UI will be used to subsequently deploy all Rover and Unicycle pods to edge locations. The RC's portal can be opened in Chrome via the portal URL http://TARGET_SERVER_IP:8080/AECPortalMgmt/ where TARGET_SERVER_IP is the RC's 'host' IP address. Note: IE or Edge browsers may not currently work with this UI.
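Before opening a browser, it can help to confirm from a shell that the portal answers at all. The sketch below assumes curl is available; the helper names portal_url and portal_http_status are hypothetical, and the IP address in the example is the validation lab address used earlier in this guide.

```shell
# Hypothetical helper: builds the portal URL for a given 'host' IP address.
portal_url() {
    printf 'http://%s:8080/AECPortalMgmt/\n' "$1"
}

# Hypothetical helper: prints the HTTP status code returned by the portal.
# curl reports 000 when no connection could be made within 10 seconds.
portal_http_status() {
    curl -s -o /dev/null -m 10 -w '%{http_code}' "$(portal_url "$1")"
}

# Example:
# portal_http_status 10.51.34.230
```

Any status other than 000 indicates that a server is answering on port 8080; it does not by itself confirm that login will succeed.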

Use the following credentials:

- Username: akadmin

- Password: akraino

Upon successful login, the Akraino Portal home page will appear. Please note that the extra entries in the MTN3 site are present because this screenshot was taken after a Unicycle pod was deployed from this RC.

1 Comment

Shweta Sachdeva

Hi,

I tried installing the RC on a virtual machine. It installed successfully and I am able to see the login page. However, the credentials akadmin/akraino are not working; the portal reports 'Invalid'. Could you please advise on this?