Overall Architecture

- Fully automated deployment on different platforms: AWS, GCP, baremetal and virtualBaremetal (KVM)

- Deployment of a Kubernetes cluster properly configured and tuned for NFV/MEC workloads

- Enablement of real-time workloads

- Possibility of deploying apps on virtual machines and containers in parallel

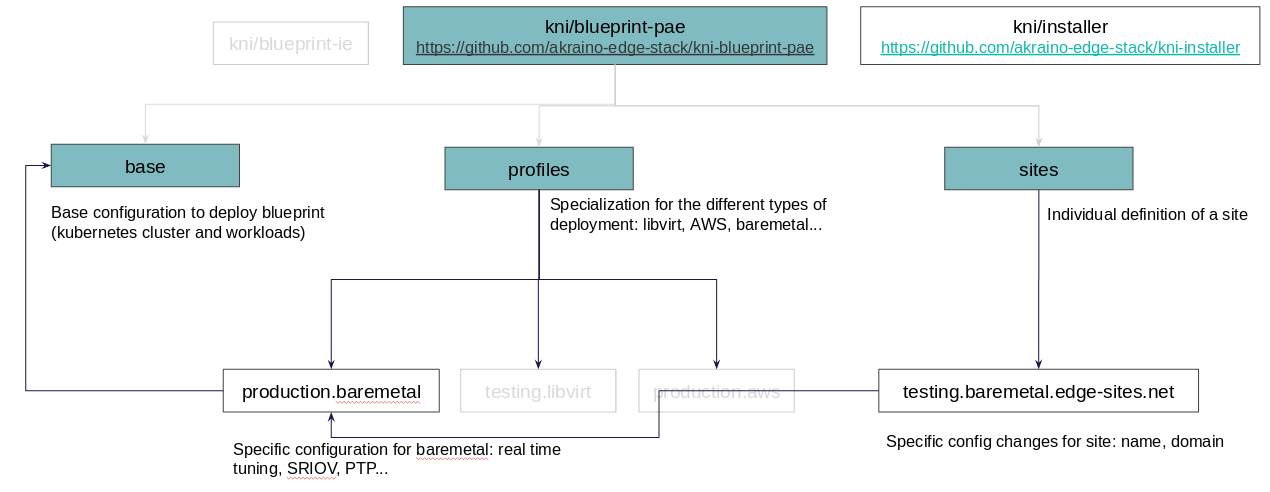

The blueprint is based on the site/profile/base pattern:

- base - contains a set of Kubernetes manifests that define the common settings of the Kubernetes cluster to be deployed, and the common workloads to be applied on top of it

- profile - specializes the cluster for a given profile (libvirt, AWS or baremetal). It contains the platform-specific configuration and the platform-specific workloads to be applied on top of the base

- site - the individual definition of a site, based on a chosen profile. It contains the site-specific configuration (name, domain, network and server settings, etc.)
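The layering described above can be pictured as successive overlays: a site refines a profile, which in turn refines the base. The following is only a conceptual sketch in Python, assuming a simple recursive dictionary merge; it is not the blueprint's actual tooling, and all keys and values (`cluster`, `worker_instance_type`, `example-site`, and so on) are hypothetical names chosen for illustration.

```python
from copy import deepcopy


def overlay(lower: dict, upper: dict) -> dict:
    """Return a new dict in which `upper` overrides `lower`, merging nested dicts."""
    merged = deepcopy(lower)
    for key, value in upper.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = overlay(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


def render_site(base: dict, profile: dict, site: dict) -> dict:
    """Apply the layers in order: base first, then profile, then site."""
    return overlay(overlay(base, profile), site)


# Hypothetical settings, for illustration only.
base = {"cluster": {"masters": 3, "workers": 3}, "workloads": ["common"]}
profile_aws = {"platform": "aws", "cluster": {"worker_instance_type": "m4.large"}}
site_example = {"name": "example-site", "domain": "example.com"}

rendered = render_site(base, profile_aws, site_example)
```

Here `rendered` keeps the node counts from the base, gains the platform settings from the profile, and carries the name and domain from the site, while the three input layers stay unmodified and can be reused for other sites.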

Platform Architecture

This blueprint is expected to run on multiple environments (libvirt, AWS and baremetal).

Deployments to AWS

| Nodes | Instance type |
|---|---|
| 1x bootstrap (temporary) | EC2: m4.xlarge, EBS: 120GB GP2 |
| 3x masters | EC2: m4.xlarge, EBS: 120GB GP2 |
| 3x workers | EC2: m4.large, EBS: 120GB GP2 |

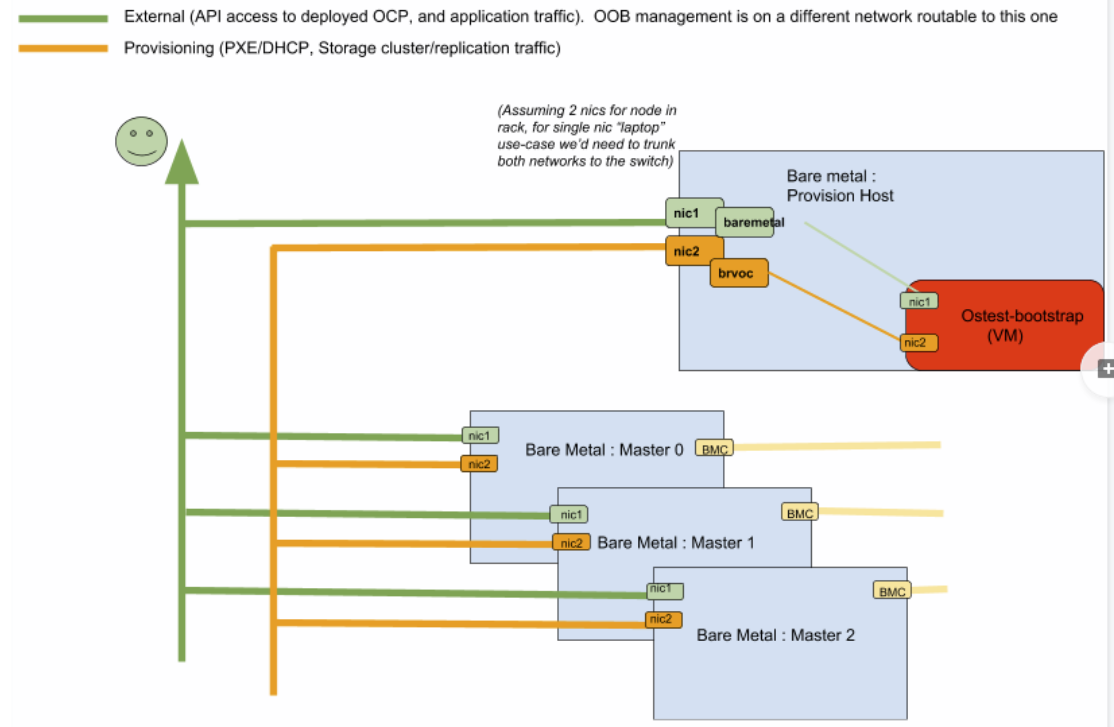

Deployments to Bare Metal

| Nodes | Requirements |
|---|---|
| 1x provisioning host (temporary) | 12 cores, 16GB RAM, 200GB disk free, 3 NICs (1 internet connectivity, 1 provisioning+storage, 1 cluster) |
| 3x masters | 12 cores, 16GB RAM, 200GB disk free, 2 NICs (1 provisioning+storage, 1 cluster) |
| 3x workers | 12 cores, min. 16GB RAM, 200GB disk free, 2 SR-IOV-capable NICs (1 provisioning+storage, 1 cluster) |
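As a quick way to compare candidate hosts against the bare metal table, the check can be sketched as below. This is a minimal example that treats the table values as minimums (an assumption; only the worker RAM is explicitly marked "min."). The `NodeSpec` class, the `shortfalls` helper and both sample hosts are hypothetical, and SR-IOV capability of the worker NICs is not checked.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class NodeSpec:
    cores: int
    ram_gb: int
    disk_free_gb: int
    nics: int


# Values copied from the bare metal requirements table, treated as minimums.
REQUIREMENTS = {
    "provisioning": NodeSpec(cores=12, ram_gb=16, disk_free_gb=200, nics=3),
    "master": NodeSpec(cores=12, ram_gb=16, disk_free_gb=200, nics=2),
    "worker": NodeSpec(cores=12, ram_gb=16, disk_free_gb=200, nics=2),
}


def shortfalls(role: str, host: NodeSpec) -> List[str]:
    """List every resource in which `host` falls below the requirement for `role`."""
    required = REQUIREMENTS[role]
    problems = []
    for field in ("cores", "ram_gb", "disk_free_gb", "nics"):
        have, need = getattr(host, field), getattr(required, field)
        if have < need:
            problems.append(f"{field}: have {have}, need {need}")
    return problems


# Hypothetical hosts, for illustration only.
large_host = NodeSpec(cores=24, ram_gb=128, disk_free_gb=480, nics=4)
small_host = NodeSpec(cores=8, ram_gb=16, disk_free_gb=200, nics=2)

print(shortfalls("master", large_host))
print(shortfalls("provisioning", small_host))
```

An empty list means the host meets every numeric requirement for that role; otherwise each entry names the resource that falls short.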

The blueprint validation lab uses 7 SuperMicro SuperServer 1028R-WTR (Black) with the following specs:

| Units | Type | Description |
|---|---|---|
| 2 | CPU | BDW-EP 12C E5-2650V4 2.2G 30M 9.6GT QPI |
| 8 | Mem | 16GB DDR4-2400 2RX8 ECC RDIMM |
| 1 | SSD | Samsung PM863, 480GB, SATA 6Gb/s, VNAND, 2.5" SSD - MZ7LM480HCHP-00005 |
| 4 | HDD | Seagate 2.5" 2TB SATA 6Gb/s 7.2K RPM 128M, 512N (Avenger) |
| 2 | NIC | Standard LP 40GbE with 2 QSFP ports, Intel XL710 |

Networking for the machines has to be set up as follows:

Deployments to vBaremetal (KVM)

| Nodes | Requirements |
|---|---|
| 1x bootstrap (temporary) | 2 vCPUs, 2GB RAM, 2GB (sparse) |
| 3x masters | 4 vCPUs, 8GB RAM, 2GB (sparse) |
| 3x workers | 2 vCPUs, 4GB RAM, 2GB (sparse) |

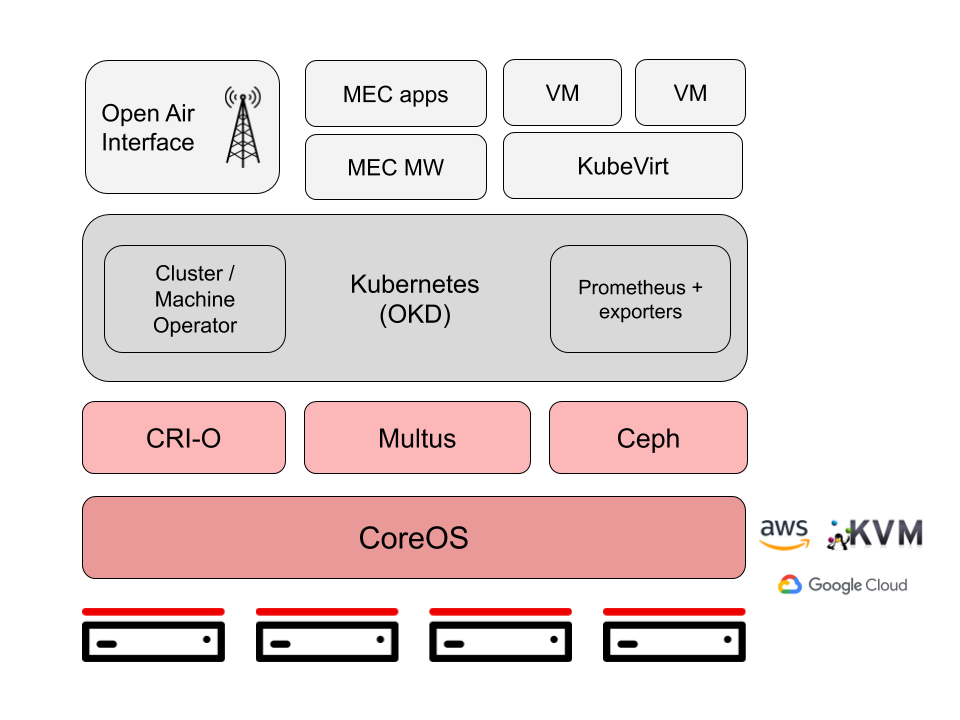
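To estimate how much capacity the KVM host needs, the per-node figures from the vBaremetal table can simply be summed. The sketch below does that arithmetic; it assumes no vCPU or memory overcommit and ignores the hypervisor's own overhead, and the `KVM_NODES` structure is a hypothetical representation of the table.

```python
# (role, node count, vCPUs per node, RAM in GB per node), from the vBaremetal table.
KVM_NODES = [
    ("bootstrap", 1, 2, 2),
    ("master", 3, 4, 8),
    ("worker", 3, 2, 4),
]


def totals(nodes):
    """Return (total vCPUs, total RAM in GB) for the given node groups."""
    vcpus = sum(count * cpu for _, count, cpu, _ in nodes)
    ram_gb = sum(count * ram for _, count, _, ram in nodes)
    return vcpus, ram_gb


# Peak usage during installation, while the temporary bootstrap node exists.
peak_vcpus, peak_ram_gb = totals(KVM_NODES)

# Steady state after the bootstrap node has been removed.
steady_vcpus, steady_ram_gb = totals([n for n in KVM_NODES if n[0] != "bootstrap"])

print(peak_vcpus, peak_ram_gb)      # 20 38
print(steady_vcpus, steady_ram_gb)  # 18 36
```

Under these assumptions the guests need 20 vCPUs and 38GB of RAM while the bootstrap node is running, and 18 vCPUs and 36GB of RAM afterwards.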

Software Platform Architecture

The blueprint can be deployed on AWS, baremetal, Google Cloud and KVM (libvirt).

Release 2 components:

Release 2 adds Ceph support for storage and CentOS-RT support to enable real-time workloads.

The other components have also been bumped to the latest upstream versions. The components and versions used in Release 2 are listed below:

- CentOS-RT: Linux testing-worker-0 3.10.0-1062.4.3.el7.x86_64 #1 SMP Wed Nov 13 23:58:53 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux

- CoreOS: Linux testing-worker-1 4.18.0-147.el8.x86_64 #1 SMP Thu Sep 26 15:52:44 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux

- CRI-O: 1.14.11-0.23.dev.rhaos4.2.gitc41de67.el8

- Kubernetes (OKD) version: openshift-install v4.2.4, built from commit 425e4ff0037487e32571258640b39f56d5ee5572, release image quay.io/openshift-release-dev/ocp-release@sha256:cebce35c054f1fb066a4dc0a518064945087ac1f3637fe23d2ee2b0c433d6ba8

- Multus, Cluster/Machine operator, Prometheus: versions provided in the OpenShift 4.2.4 release

- Ceph: v14.2.3-20190904

- Kubevirt: v1alpha3

Release 3 components:

- CentOS-RT: Linux 3.10.0-1062.4.3.el7.x86_64 #1 SMP Wed Nov 13 23:58:53 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux

- CoreOS: Red Hat Enterprise Linux CoreOS release 4.3

- CRI-O: 1.16.3-22.dev.rhaos4.3.git11c04e3.el8

- Kubernetes (OKD) version: openshift-install v4.3.3, built from commit c7325a3c6045c7f4c8f1ac98d037ffca919be05a, release image quay.io/openshift-release-dev/ocp-release@sha256:9b8708b67dd9b7720cb7ab3ed6d12c394f689cc8927df0e727c76809ab383f44

- Multus, Cluster/Machine operator, Prometheus: versions provided in the OpenShift 4.3.3 release

- Ceph: 14.2.8 (2d095e947a02261ce61424021bb43bd3022d35cb) nautilus (stable)

- Kubevirt: 0.27.2

Release 4 components:

- CoreOS for all nodes (RT workers too): Red Hat Enterprise Linux CoreOS release 4.6

- CRI-O: xxxxxxx

- Kubernetes (OKD) version: openshift-install v4.6.6, built from commit db0f93089a64c5fd459d226fc224a2584e8cfb7e, release image quay.io/openshift-release-dev/ocp-release@sha256:c7e8f18e8116356701bd23ae3a23fb9892dd5ea66c8300662ef30563d7104f39

- Multus, Cluster/Machine operator, Prometheus: versions provided in the OpenShift 4.6.6 release

- Ceph: 14.2.8 (2d095e947a02261ce61424021bb43bd3022d35cb) nautilus (stable)

- Kubevirt: 0.27.2

APIs

This blueprint does not define any APIs of its own. It relies on a Kubernetes cluster, so all the APIs used are the standard Kubernetes ones.

Hardware and Software Management

Licensing

Apache license