...

Integrated Edge Cloud (IEC) is an Akraino-approved blueprint family and part of the Akraino Edge Stack. It aims to develop a fully integrated edge infrastructure solution, and the project is focused entirely on edge computing. This open source software stack provides critical infrastructure to enable high performance, reduce latency, improve availability, lower operational overhead, provide scalability, address security needs, and improve fault management. The IEC project will address multiple edge use cases and industries, not just the telco industry. IEC intends to develop solutions for, and provide support to, carrier, provider, and IoT networks.

Use Case

The purpose of this release is to automate the provisioning of an ultra-low-latency, lightweight micro-MEC (μMEC) environment on the AWS cloud and to manage multiple μMECs centrally from a single dashboard. μMECs run on low-footprint hardware and can serve mission-critical workloads. The release takes an opinionated approach, spinning up an environment with pre-built configurations that are ready to use. Compared with a fully configurable MEC environment, it is cost-effective and can be set up quickly.

...

Blueprint System Requirements

| Item | Capacity |
|---|---|
| Number of nodes | 3 |
| Node size | TBD |
| Disks in Storidge HA clustering mode (status: Not Yet Supported) | 3 disks per node, 100 GB each |
| VPC | Pre-existing VPC |
| Subnet | Public (for now). Will switch to a private subnet with a gateway configuration in future releases. |

Automation

Terraform Automation

...

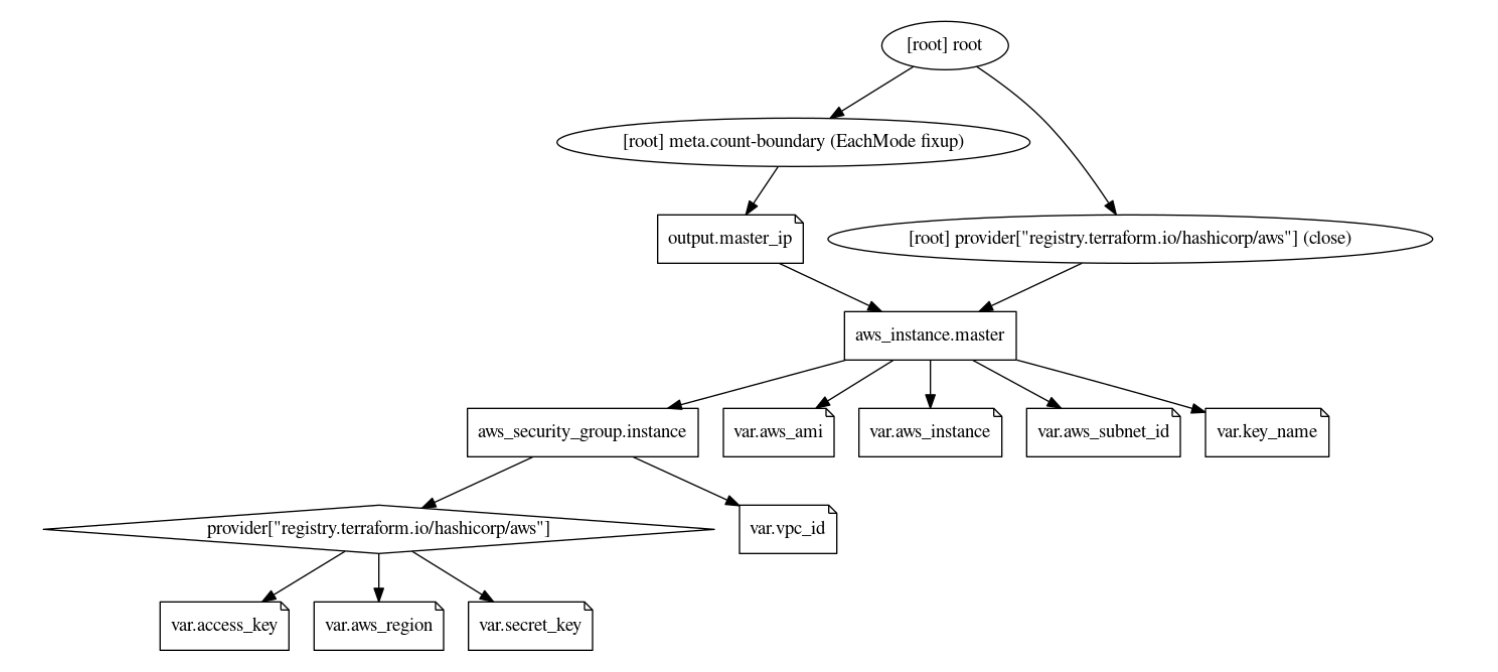

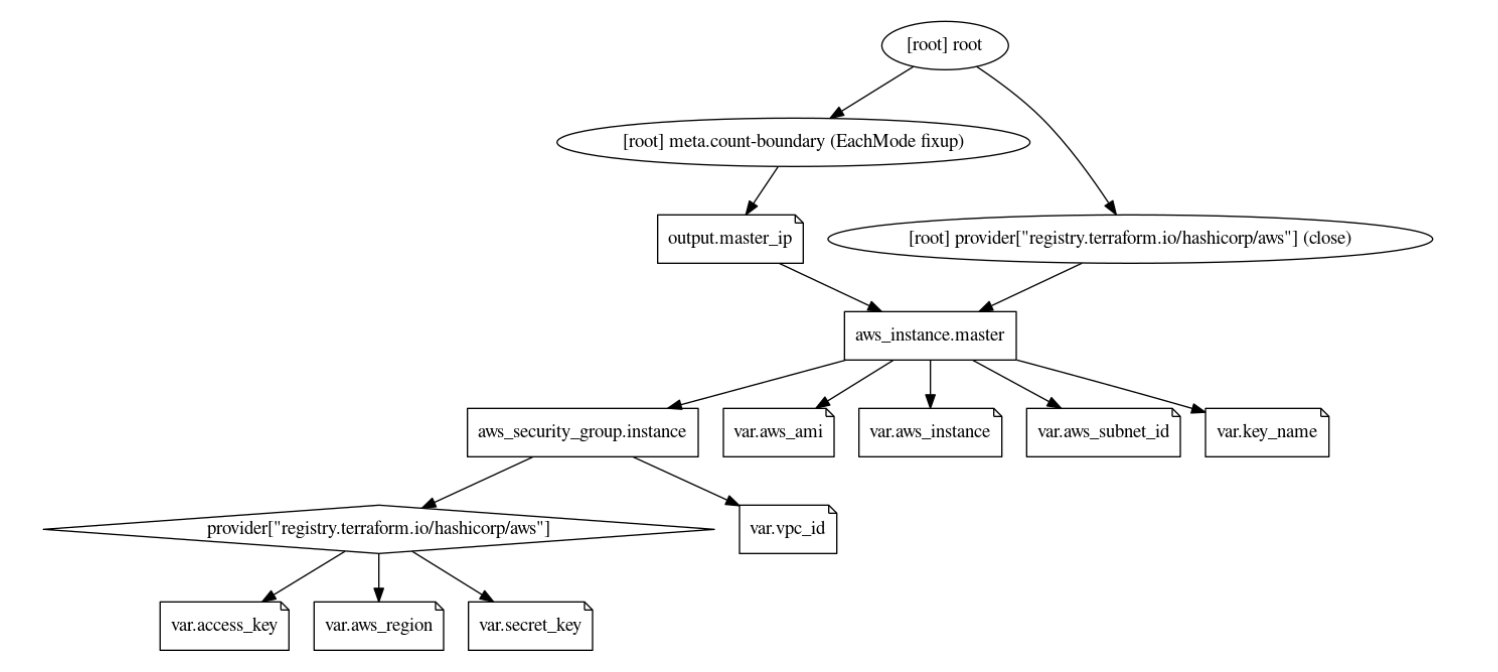


- The user provides the VPC ID, region, instance type, AMI, subnet ID, and the IAM access key and secret key as inputs in the variable.tf file. These values can be set when the template is applied.

- The variable ‘count’ sets the number of worker nodes to provision. For example, a count of 2 provisions two worker nodes.

- A PEM key file is also required to SSH into the EC2 instances remotely. It must be in the same directory as the main.tf and variable.tf files.

- The security group is provisioned automatically from the VPC and subnet ID provided.

- Currently, no load balancer is provisioned. Workloads deployed in the cluster can be accessed through a Kubernetes NodePort.
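
The inputs above can be checked and assembled by a small pre-flight script before the template is applied. The Python sketch below is a minimal illustration under assumptions, not part of the blueprint: the variable names (`vpc_id`, `access_key`, `worker_count`, and so on) and the PEM file name are hypothetical and may not match the actual variable.tf. `worker_count` stands in for the template's ‘count’ variable because `count` is a reserved name in recent Terraform versions. The sketch checks that main.tf, variable.tf and the PEM file share a directory, builds a `terraform apply` command, and forms the NodePort URL of a deployed workload.

```python
from pathlib import Path
from typing import Dict, List, Tuple, Union

# Hypothetical input names; the blueprint's variable.tf may use different ones.
INPUT_VARS = ("vpc_id", "region", "instance_type", "ami", "subnet_id",
              "access_key", "secret_key", "worker_count")
# Credentials are passed through the environment instead of the command line.
SECRET_VARS = {"access_key", "secret_key"}

# Default Kubernetes NodePort range (configurable on the API server).
NODEPORT_MIN, NODEPORT_MAX = 30000, 32767


def check_template_dir(template_dir: Path, pem_name: str) -> None:
    """Raise if main.tf, variable.tf or the PEM key is missing from the directory."""
    missing = [name for name in ("main.tf", "variable.tf", pem_name)
               if not (template_dir / name).is_file()]
    if missing:
        raise FileNotFoundError(f"missing in {template_dir}: {', '.join(missing)}")


def build_apply_command(
    values: Dict[str, Union[str, int]]
) -> Tuple[List[str], Dict[str, str]]:
    """Return the `terraform apply` argv plus extra environment variables."""
    missing = [k for k in INPUT_VARS if k not in values]
    if missing:
        raise ValueError(f"missing inputs: {', '.join(missing)}")
    if int(values["worker_count"]) < 0:
        raise ValueError("worker_count must be zero or more")
    cmd = ["terraform", "apply"]
    env: Dict[str, str] = {}
    for key in INPUT_VARS:
        if key in SECRET_VARS:
            # Terraform reads TF_VAR_<name> environment variables as input values.
            env[f"TF_VAR_{key}"] = str(values[key])
        else:
            cmd += ["-var", f"{key}={values[key]}"]
    return cmd, env


def nodeport_url(node_ip: str, node_port: int) -> str:
    """URL for a workload exposed through a Kubernetes NodePort service."""
    if not NODEPORT_MIN <= node_port <= NODEPORT_MAX:
        raise ValueError(f"port {node_port} is outside the default NodePort range")
    return f"http://{node_ip}:{node_port}"
```

A caller could run the result with `subprocess.run(cmd, cwd=template_dir, env={**os.environ, **env}, check=True)`. Routing the IAM keys through `TF_VAR_` environment variables keeps them out of shell history and process listings. For the NodePort URL to be reachable, the automatically provisioned security group would also need to allow inbound traffic on that port.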

...

- Provision the master node. The template passes EC2 user_data to the master node, which uses the snap package manager to install microk8s.

- Once microk8s is installed on the master node, the template runs a remote SSH command on that node to generate a join token with ‘microk8s.add-node’. The remote SSH command uses the PEM file in the local directory. The token generated in this step is used to join the remaining nodes to the cluster.

- Copy the generated token from the remote machine to the local machine using a Terraform data source.

- Provision the worker nodes and install microk8s on each of them.

- Use the local data source to read the join token, then add each worker node to the master node by running ‘microk8s.join &lt;token&gt;’.
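
The join flow above can be sketched in Python as two helpers: one that extracts the join target from the text printed by `microk8s.add-node`, and one that builds the SSH command that runs `microk8s.join` on each worker. This is an illustration under assumptions, not the blueprint's Terraform code. The regular expression assumes the add-node output contains a line of the form `microk8s.join <address>/<token>`; because newer microk8s releases print `microk8s join` with a space, both forms are accepted. The SSH user `ubuntu` and the PEM path are hypothetical.

```python
import re
from typing import List

# Assumed shape of the add-node output: "microk8s.join <ip>:<port>/<token>"
# or, in newer releases, "microk8s join <ip>:<port>/<token>".
JOIN_RE = re.compile(r"microk8s[ .]join\s+(\S+)")


def extract_join_target(add_node_output: str) -> str:
    """Return the first '<address>/<token>' argument printed by add-node."""
    match = JOIN_RE.search(add_node_output)
    if match is None:
        raise ValueError("no microk8s join command found in add-node output")
    return match.group(1)


def build_join_commands(target: str, worker_ips: List[str], pem_path: str,
                        user: str = "ubuntu") -> List[List[str]]:
    """Build one SSH command per worker that joins it to the master node."""
    return [
        ["ssh", "-i", pem_path, f"{user}@{ip}", "sudo", "microk8s.join", target]
        for ip in worker_ips
    ]
```

In this sketch a caller would capture the master's add-node output over SSH, pass it to `extract_join_target`, and then execute each command from `build_join_commands` with `subprocess.run(cmd, check=True)`. The blueprint itself performs these steps through Terraform rather than a Python script.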

Tasks and progress

| S.No | Task | Progress |
|---|---|---|
| 1 | Automated provisioning of multi-node microk8s | |
| 2 | Install within a pre-existing VPC | |
| 3 | Dynamic template using variables | |
| 4 | Restricted security groups | |
| 5 | Test for different regions | |
| 6 | Install and configure Storidge | |
| 7 | Install and configure EdgeX Foundry | |
| 8 | Bring up microk8s in HA mode | |
| 9 | Configure auto-scaling | |