
@MIGU: add the application installation section

@huawei: add the control plane section

Introduction

How to use this document

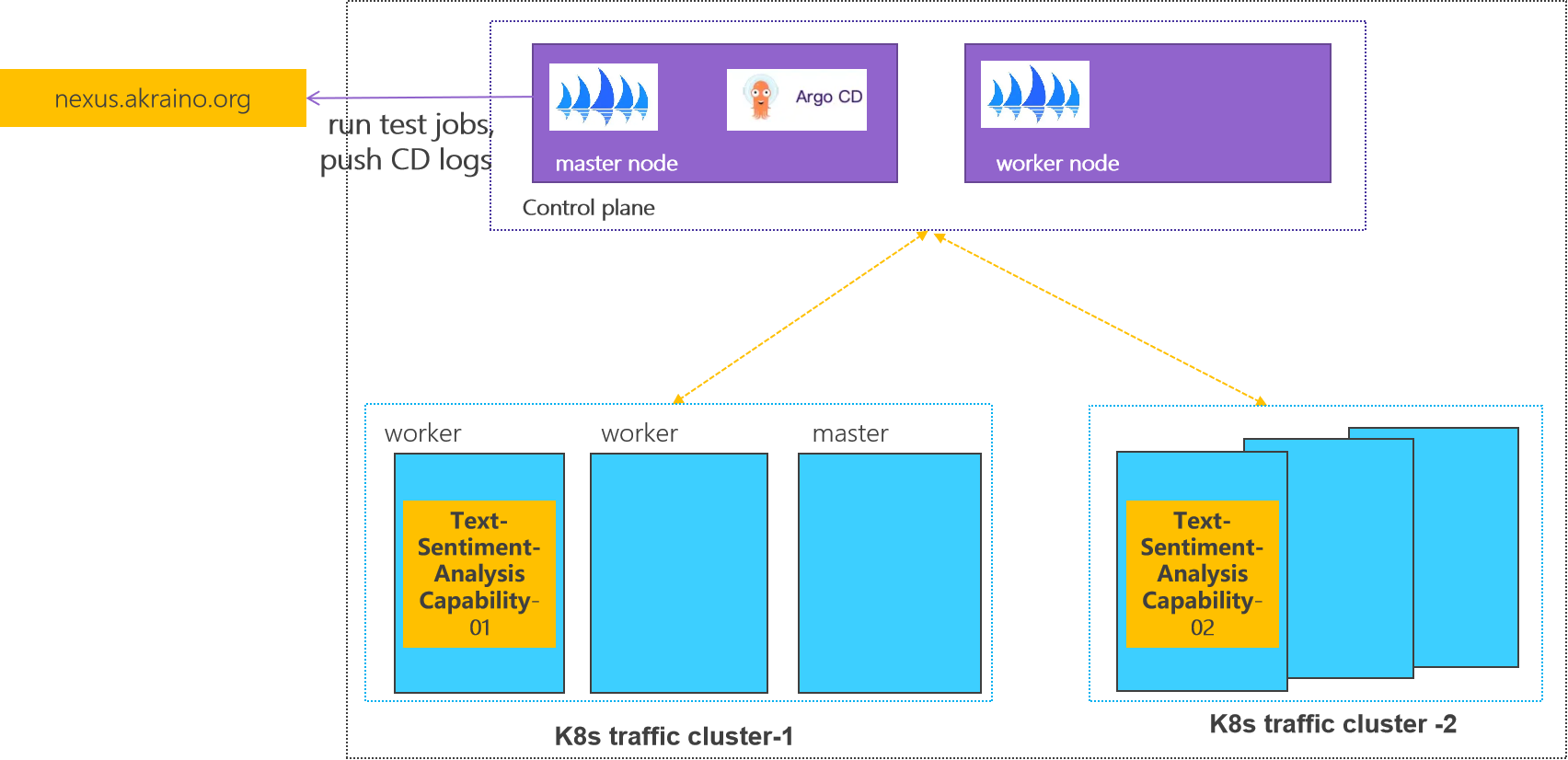

Deployment Architecture

This document describes the steps required to deploy and test a sample environment for the CFN (Computing Force Network) Ubiquitous Computing Force Scheduling Blueprint.

Deployment Architecture

Control plane: one k8s cluster is deployed in a private lab.

Traffic plane: two k8s clusters are deployed in a private lab.

Pre-Installation Requirements

...

kubeadm-1.23.7

kubectl-1.23.7

N/A

- Database Prerequisites

schema scripts: N/A

...

0 Environment description

At least two CentOS machines are required, one as the master node and the other as the worker node. The installed k8s version is 1.23.7.

Each bash command is preceded by a comment such as #master that indicates which type of machine the command runs on. If there is no comment, run the command on both types of machines.

This document includes screenshots of each step's execution. Compare them with your own output during installation.

1 Basic environment preparation

Both master node and worker node need to execute.

This section prepares the basic environment so that the subsequent operations run normally.

...

Confirm that the operating system of the current machine is CentOS 7.

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

cat /etc/redhat-release |

Execute screenshot
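When several machines are prepared, the release check can be scripted. The following is a minimal sketch, assuming the release string has the usual `CentOS Linux release 7.x` form. The function name `is_centos7` is hypothetical and not part of the original guide.

```shell
# Hypothetical helper: succeeds when the given release string denotes CentOS 7.
is_centos7() {
  case "$1" in
    "CentOS Linux release 7."*) return 0 ;;
    *) return 1 ;;
  esac
}

# Usage: check the local machine only if the release file exists.
if [ -r /etc/redhat-release ]; then
  if is_centos7 "$(cat /etc/redhat-release)"; then
    echo "CentOS 7 detected"
  else
    echo "Not CentOS 7: $(cat /etc/redhat-release)"
  fi
fi
```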

1.2 Set hostname

If the name is long, use a combination of letters and dashes, such as "aa-bb-cc". Here the hostnames are set directly to master and worker1.

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

# master

hostnamectl set-hostname master

hostnamectl

# worker

hostnamectl set-hostname worker1

hostnamectl |
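The naming advice above can be checked before calling `hostnamectl`. The sketch below is only an illustration: `valid_hostname` is a hypothetical helper, and the rule it applies (letters, digits and dashes, no leading or trailing dash) is an assumption based on the recommendation in this step.

```shell
# Hypothetical helper: accepts names made of letters, digits and dashes
# that neither start nor end with a dash (e.g. "aa-bb-cc", "worker1").
valid_hostname() {
  case "$1" in
    ""|-*|*-) return 1 ;;
    *[!A-Za-z0-9-]*) return 1 ;;
    *) return 0 ;;
  esac
}

# Usage: validate the two names used in this guide.
for name in master worker1; do
  if valid_hostname "$name"; then
    echo "ok: $name"
  else
    echo "invalid: $name"
  fi
done
```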

...

The new hostname takes effect after a reboot.

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

reboot |

1.3 Set address mapping

Set address mapping, and test the network.

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

cat <<EOF>> /etc/hosts

${YOUR IP} master

${YOUR IP} worker1

EOF

ping master

ping worker1 |
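Running the append above twice leaves duplicate lines in /etc/hosts. A minimal sketch of an idempotent variant follows. The helper `add_host_entry` is hypothetical, and the 192.0.2.x addresses are documentation placeholders standing in for `${YOUR IP}`. The demo writes to a temporary file rather than /etc/hosts.

```shell
# Hypothetical helper: append "<ip> <name>" to a hosts file
# only if no line already maps that name.
add_host_entry() {
  hosts_file=$1
  ip=$2
  name=$3
  if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$hosts_file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
  fi
}

# Demo on a temporary file; point it at /etc/hosts on a real node.
demo=$(mktemp)
add_host_entry "$demo" 192.0.2.10 master
add_host_entry "$demo" 192.0.2.11 worker1
add_host_entry "$demo" 192.0.2.10 master   # second call is a no-op
cat "$demo"
rm -f "$demo"
```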

...

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

systemctl stop firewalld

systemctl disable firewalld

setenforce 0

sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

swapoff -a

sed -i 's/.*swap.*/#&/' /etc/fstab |
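The `sed` expression above prefixes every line containing "swap" with `#`, including lines that are already commented, so repeated runs stack up `#` characters. The sketch below shows one possible variant that skips commented lines. The helper name `comment_swap_entries` is hypothetical, and the demo works on a temporary copy rather than the real /etc/fstab.

```shell
# Hypothetical helper: comment out swap entries in an fstab-style file,
# leaving lines that are already commented untouched.
comment_swap_entries() {
  fstab=$1
  tmp=$(mktemp)
  sed '/^[[:space:]]*#/!s/.*swap.*/#&/' "$fstab" > "$tmp" && cat "$tmp" > "$fstab"
  rm -f "$tmp"
}

# Demo on a temporary file with sample entries.
demo=$(mktemp)
printf '%s\n' \
  '/dev/mapper/centos-root / xfs defaults 0 0' \
  '/dev/mapper/centos-swap swap swap defaults 0 0' > "$demo"
comment_swap_entries "$demo"
comment_swap_entries "$demo"   # running it again changes nothing
cat "$demo"
rm -f "$demo"
```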

...

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

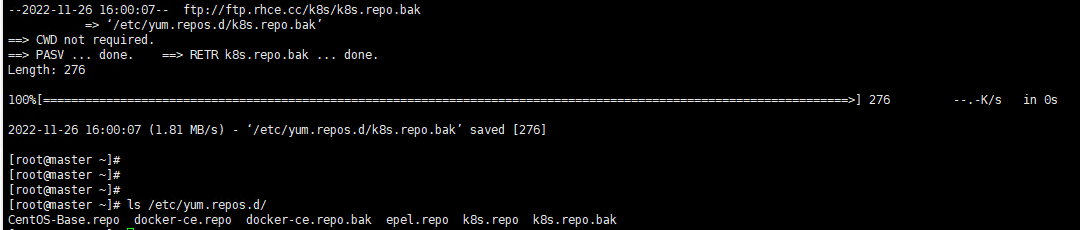

rm -rf /etc/yum.repos.d/* ;wget ftp://ftp.rhce.cc/k8s/* -P /etc/yum.repos.d/

ls /etc/yum.repos.d/ |

Execute screenshot

...

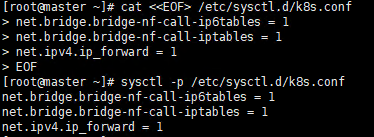

1.6 Set iptables

...

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

cat <<EOF> /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

sysctl -p /etc/sysctl.d/k8s.conf |

Execute screenshot
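To confirm that the file written in this step contains all three settings, it can be parsed with a small script. This is a sketch only: `conf_value` and `check_k8s_sysctl` are hypothetical helper names. Note also that on some systems the `net.bridge.*` keys only exist once the `br_netfilter` kernel module is loaded, which this guide does not cover.

```shell
# Hypothetical helper: print the value assigned to key $2 in sysctl-style file $1.
conf_value() {
  awk -F= -v key="$2" '
    { k = $1; gsub(/[[:space:]]/, "", k) }
    k == key { v = $2; gsub(/[[:space:]]/, "", v); print v }
  ' "$1"
}

# Hypothetical helper: report any of the three expected keys not set to 1.
check_k8s_sysctl() {
  missing=0
  for key in net.bridge.bridge-nf-call-ip6tables \
             net.bridge.bridge-nf-call-iptables \
             net.ipv4.ip_forward; do
    if [ "$(conf_value "$1" "$key")" != "1" ]; then
      echo "not set to 1: $key"
      missing=1
    fi
  done
  return "$missing"
}

# Usage: only check the real file when it exists.
if [ -r /etc/sysctl.d/k8s.conf ]; then
  check_k8s_sysctl /etc/sysctl.d/k8s.conf && echo "k8s sysctl settings present"
fi
```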

1.7 Make sure the time zone and time are correct

...

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

timedatectl set-timezone Asia/Shanghai

systemctl restart rsyslog |

Execute screenshot

2 Install docker

Both master node and worker node need to execute.

This section installs docker-ce, configures the cgroup driver of docker as systemd, and confirms the driver.

2.1

...

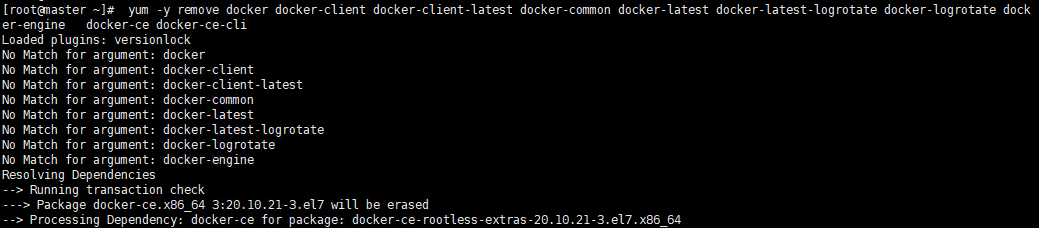

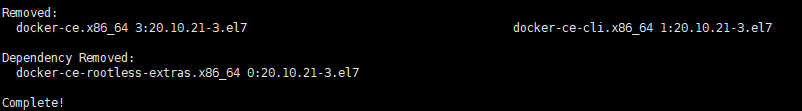

Uninstall old docker

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

yum -y remove docker docker-client docker-client-latest docker-common docker-latest docker-latest-logrotate docker-logrotate docker-engine docker-ce docker-ce-cli |

Execute screenshot

2.2 Install docker

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

yum -y install docker-ce |

Execute screenshot

2.3 Enable docker at boot and confirm docker status

...

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

systemctl enable docker

systemctl start docker

systemctl status docker |

Execute screenshot

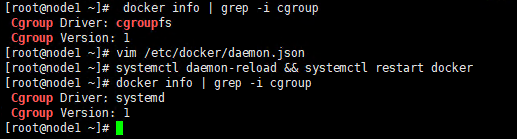

2.4 Configure the driver of docker's cgroup

...

Check the current configuration. If the driver is already systemd, as in the figure below, skip the rest of this step and go directly to section 3.

Execute screenshot

If it is cgroupfs, add the following statement

...

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

systemctl daemon-reload && systemctl restart docker

docker info | grep -i cgroup |
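The statement to add is not shown in this copy of the page. As a hedged illustration only, a common way to select the systemd driver is the `native.cgroupdriver=systemd` exec option in Docker's `daemon.json`; this may differ from what the original page used. Both helper names below are hypothetical, and the writer assumes no other `daemon.json` settings need to be preserved.

```shell
# Hypothetical helper: extract the driver name from `docker info` output on stdin.
cgroup_driver() {
  awk -F': *' '/Cgroup Driver/ { print $2 }'
}

# Hypothetical helper: write a minimal daemon.json selecting the systemd driver.
# Assumption: the target file holds no other settings that must be kept.
write_systemd_daemon_json() {
  cat > "$1" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Usage on a node (commented out because it changes system configuration):
#   [ "$(docker info 2>/dev/null | cgroup_driver)" = "systemd" ] || \
#     write_systemd_daemon_json /etc/docker/daemon.json
```

After writing the file, the restart and `docker info` check in this step confirm which driver is active.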

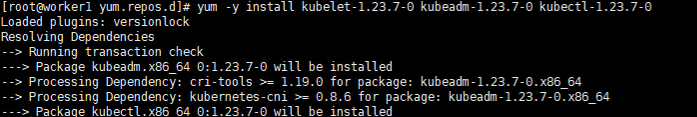

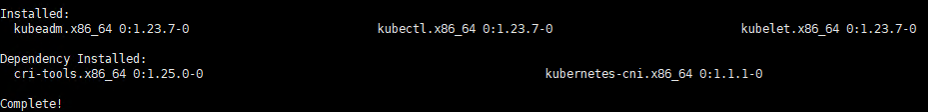

3 Install k8s basic components

Both master node and worker node need to execute.

This section installs version 1.23.7 of the kubeadm, kubectl, and kubelet components.

3.1 Check kubeadm kubectl kubelet

If an installed version does not match, uninstall it with yum remove ${name}.

...

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

yum list installed | grep kube |

Execute screenshot

3.2 Install kubelet kubeadm kubectl version 1.23.7

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

yum -y install kubelet-1.23.7 kubeadm-1.23.7 kubectl-1.23.7 |

Execute screenshot

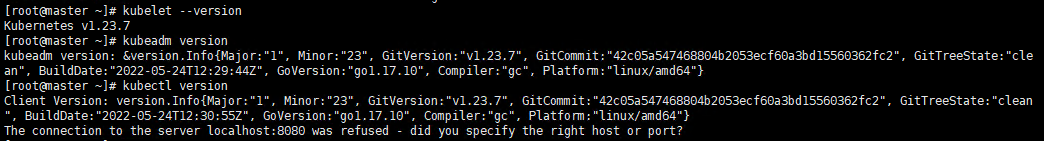

3.3 Verify installation

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

kubelet --version

kubeadm version

kubectl version |

Execute screenshot

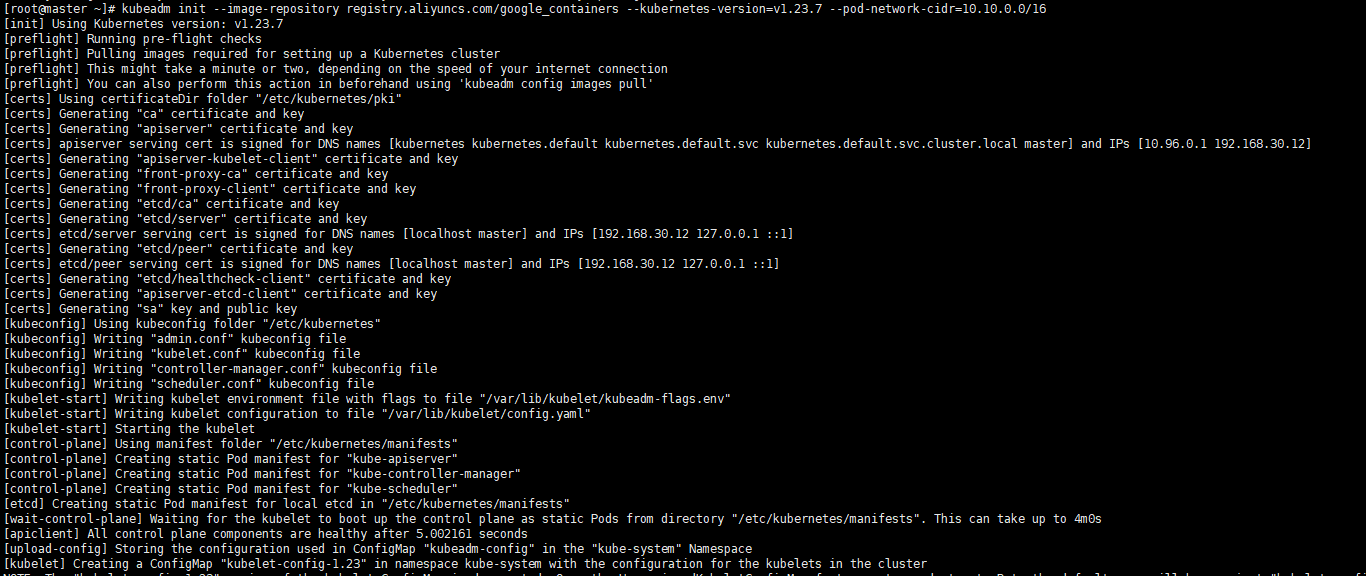
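The version check can also be automated rather than read off the screen. The sketch below assumes the tools print the version as a separate word of the form `v1.23.7` (for example `Kubernetes v1.23.7` from `kubelet --version`). The helper `version_matches` is hypothetical.

```shell
# Hypothetical helper: succeeds when the output string $2 contains
# the word "v<expected version>", e.g. version_matches 1.23.7 "Kubernetes v1.23.7".
version_matches() {
  expected="v$1"
  for word in $2; do
    if [ "$word" = "$expected" ]; then
      return 0
    fi
  done
  return 1
}

# Usage: run the checks only where the tools are installed.
if command -v kubelet >/dev/null 2>&1; then
  version_matches 1.23.7 "$(kubelet --version)" || echo "kubelet is not 1.23.7"
fi
if command -v kubeadm >/dev/null 2>&1; then
  version_matches 1.23.7 "$(kubeadm version -o short)" || echo "kubeadm is not 1.23.7"
fi
```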

4 Initialize the master

Execute only on the master node.

This section pulls the version 1.23.7 images, initializes the master node, and configures the cilium network plugin for the master node.

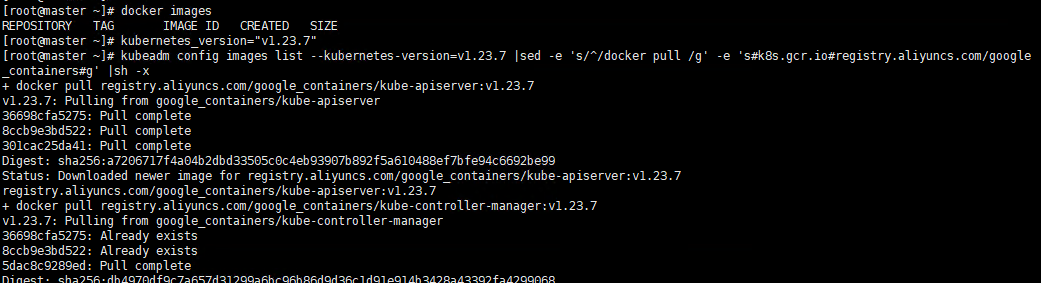

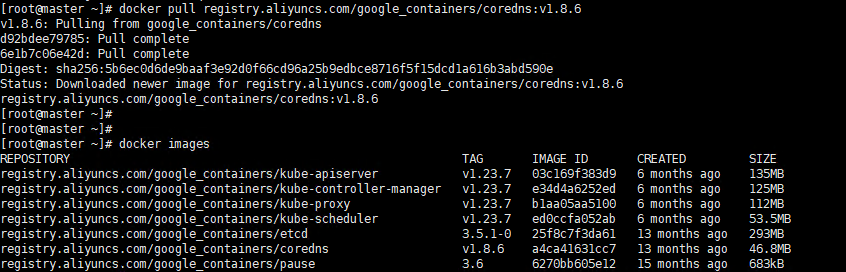

4.1 Pull the k8s image

...

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

# master

kubeadm config images list --kubernetes-version=v1.23.7 |sed -e 's/^/docker pull /g' -e 's#k8s.gcr.io#registry.aliyuncs.com/google_containers#g' |sh -x

docker pull registry.aliyuncs.com/google_containers/coredns:v1.8.6

docker images |

Execute screenshot

Make sure that all 7 images above have been pulled.
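To see what the `sed` pipeline in this step generates before piping it to `sh`, the rewrite can be run on sample input. The sketch below wraps the same two `sed` expressions in a hypothetical function `to_pull_commands`. The two image names are illustrative samples, not the full list printed by kubeadm.

```shell
# Hypothetical helper: turn image names on stdin into `docker pull` commands
# that use the Aliyun mirror, using the same sed expressions as the guide.
to_pull_commands() {
  sed -e 's/^/docker pull /g' \
      -e 's#k8s.gcr.io#registry.aliyuncs.com/google_containers#g'
}

# Dry run on two sample image names.
printf '%s\n' \
  'k8s.gcr.io/kube-apiserver:v1.23.7' \
  'k8s.gcr.io/coredns/coredns:v1.8.6' | to_pull_commands
# prints:
#   docker pull registry.aliyuncs.com/google_containers/kube-apiserver:v1.23.7
#   docker pull registry.aliyuncs.com/google_containers/coredns/coredns:v1.8.6
```

The second output line keeps the nested `coredns/coredns` path. This is probably why the guide pulls `coredns:v1.8.6` from the mirror with a separate explicit command, although the original page does not say so.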

...

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

# master

kubeadm init --image-repository registry.aliyuncs.com/google_containers --kubernetes-version=v1.23.7 --pod-network-cidr=10.10.0.0/16 |

Execute screenshot

The output reports that initialization succeeded and ends with the command for joining worker nodes. It also lists the following commands, which must be run before kubectl can be used normally.

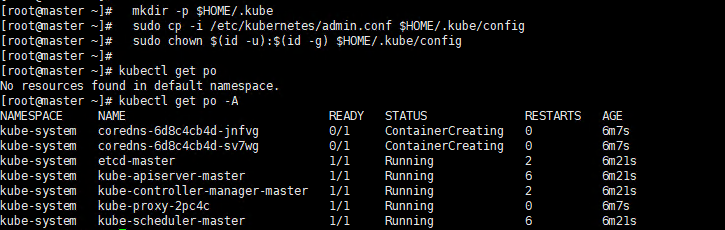

Let kubectl take effect

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

# master

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

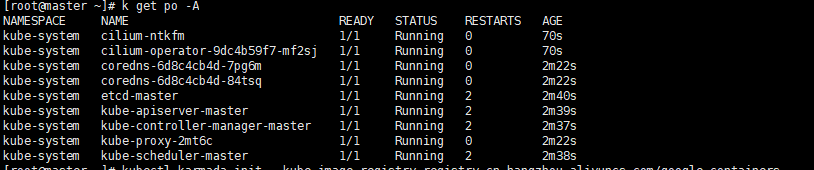

kubectl get po -A |

Execute screenshot

The coredns pods are not ready yet, so the network plugin needs to be configured.

Note that if an error occurs and you need to re-initialize, first execute the following commands so that kubeadm can run cleanly again.

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

# master, reset kubeadm if an error occurs

kubeadm reset -f

rm -rf ~/.kube/

rm -rf /etc/kubernetes/

rm -rf /var/lib/etcd

rm -rf /var/etcd |

...

Here cilium is selected as the network plugin.

Confirm that the current default kernel version is above 4.9.

Check the current kernel version

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

# master

uname -sr |

If the current version does not meet this requirement, update the kernel.
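The kernel comparison can be scripted instead of read by eye. The sketch below is a minimal illustration that compares only the major and minor numbers and treats 4.9 itself as acceptable, which is an assumption about what "above 4.9" means here. The helper `kernel_at_least` is hypothetical and expects the output of `uname -r`.

```shell
# Hypothetical helper: succeeds when release string $3 (as printed by `uname -r`)
# is at least version $1.$2, comparing major and minor numbers only.
kernel_at_least() {
  want_major=$1
  want_minor=$2
  release=$3
  major=${release%%.*}
  rest=${release#*.}
  minor=${rest%%.*}
  minor=${minor%%[!0-9]*}   # drop suffixes such as "-amd64"
  [ "$major" -gt "$want_major" ] ||
    { [ "$major" -eq "$want_major" ] && [ "$minor" -ge "$want_minor" ]; }
}

# Usage: check the running kernel.
if kernel_at_least 4 9 "$(uname -r)"; then
  echo "kernel $(uname -r) is new enough"
else
  echo "kernel $(uname -r) is too old, update it first"
fi
```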

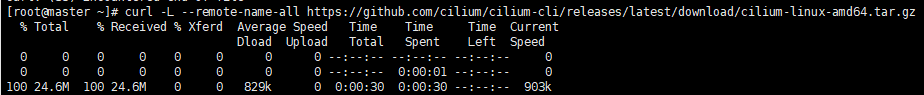

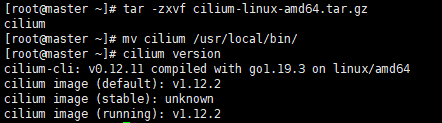

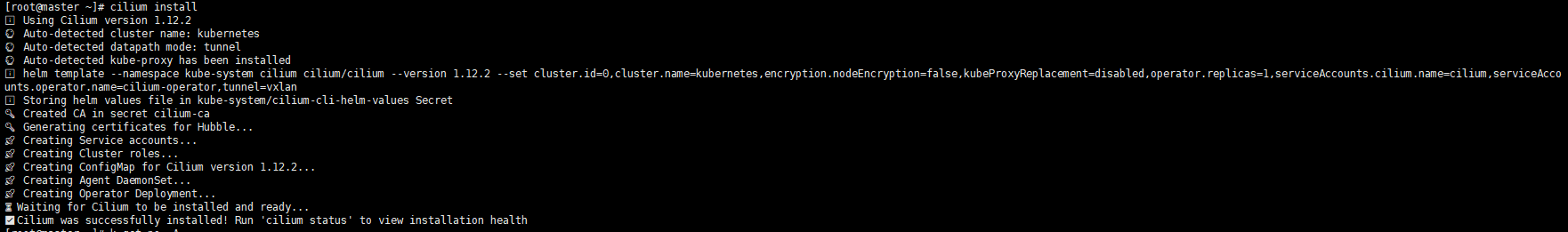

Cilium install

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

# master

curl -L --remote-name-all https://github.com/cilium/cilium-cli/releases/latest/download/cilium-linux-amd64.tar.gz

tar -zxvf cilium-linux-amd64.tar.gz

mv cilium /usr/local/bin/

cilium version

cilium install |

Execute screenshot

kubectl get po -A |

Execute screenshot

All the pods are now ready.

If an error occurs, you can use cilium uninstall to reset.

5 Initialize workers

This section adds worker nodes to the cluster.

...

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

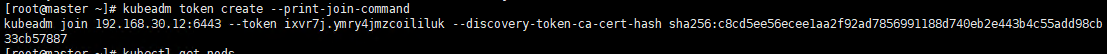

# master

kubeadm token create --print-join-command |

Execute screenshot

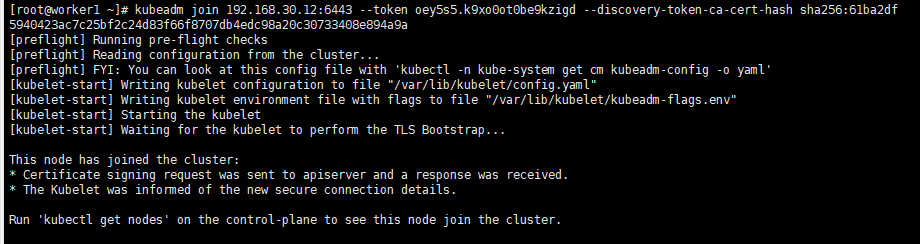

5.2 Join the master node

...

Once you have the join command, copy it and execute it on the worker node. The screenshot below shows the expected output.

Execute screenshot

Note that if an error occurs and you need to re-initialize, first execute the following commands so that kubeadm can run cleanly again.

...

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

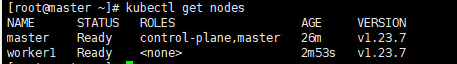

# master

kubectl get nodes |

Execute screenshot
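To wait for the worker to finish joining, the node list can be checked from a script. The sketch below counts nodes whose STATUS column is exactly `Ready`, assuming the usual `kubectl get nodes --no-headers` layout with the name in the first column and the status in the second. The helper `count_ready_nodes` is hypothetical.

```shell
# Hypothetical helper: count nodes whose STATUS column is exactly "Ready".
# Expects `kubectl get nodes --no-headers` output on stdin.
count_ready_nodes() {
  awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Usage on the master (commented out because it needs a running cluster):
#   kubectl get nodes --no-headers | count_ready_nodes
```

With one master and one worker, as in this guide, the expected count is 2 once both nodes are ready.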

6 Install karmada

This section installs karmada on the control plane cluster.

6.1 Install the Karmada kubectl plugin

...

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

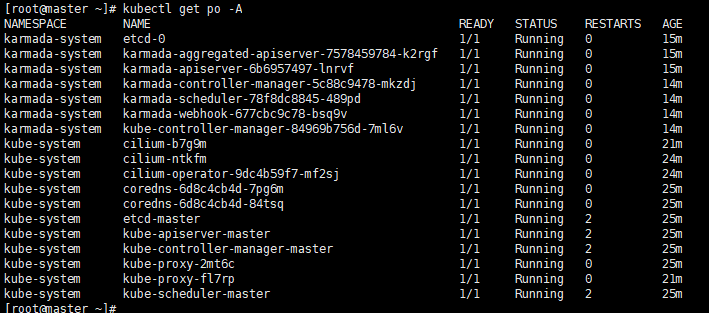

kubectl get po -A |

Execute screenshot

Reference

7 Propagate a deployment by Karmada

Before propagating a deployment, make sure the worker cluster is working properly and obtain its latest running config.

In the following steps, we propagate a deployment with Karmada, using the installation of nginx as an example.

7.1 Join a worker/member cluster to karmada control plane

Here we add the worker cluster in push mode.

Note that /root/.kube/config is the Kubernetes host config and /etc/karmada/karmada-apiserver.config is the karmada-apiserver config.

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

kubectl karmada --kubeconfig /etc/karmada/karmada-apiserver.config join ${YOUR MEMBER NAME} --cluster-kubeconfig=${YOUR MEMBER CONFIG PATH} --cluster-context=${YOUR CLUSTER CONTEXT} |

Here is an example command for reference: kubectl karmada --kubeconfig /etc/karmada/karmada-apiserver.config join member1 --cluster-kubeconfig=/root/.kube/member1-config --cluster-context=kubernetes-admin@kubernetes

- --kubeconfig specifies Karmada's kubeconfig file.

- --cluster-kubeconfig specifies the member's config. Generally, it can be obtained from the worker cluster in "/root/.kube/config".

- --cluster-context is the value of current-context from --cluster-kubeconfig.

To unjoin a member cluster, change join to unjoin: kubectl karmada --kubeconfig /etc/karmada/karmada-apiserver.config unjoin member2 --cluster-kubeconfig=/root/.kube/192.168.30.2_config --cluster-context=kubernetes-admin@kubernetes
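When several member clusters are joined, assembling the command from its three variable parts avoids copy-and-paste mistakes. The sketch below only prints the command so it can be reviewed before it is run. The helper `karmada_join_cmd` is hypothetical, and the karmada-apiserver config path is the one used in this guide.

```shell
# Hypothetical helper: print the join command for one member cluster.
#   $1 = member name, $2 = member kubeconfig path, $3 = cluster context
karmada_join_cmd() {
  printf 'kubectl karmada --kubeconfig /etc/karmada/karmada-apiserver.config join %s --cluster-kubeconfig=%s --cluster-context=%s\n' \
    "$1" "$2" "$3"
}

# Usage: print the command for member1 as in the example.
karmada_join_cmd member1 /root/.kube/member1-config kubernetes-admin@kubernetes

# The context of a member kubeconfig can be read with:
#   kubectl config current-context --kubeconfig /root/.kube/member1-config
```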

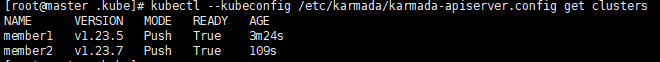

Check the members of karmada

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

kubectl --kubeconfig /etc/karmada/karmada-apiserver.config get clusters |
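The cluster list can be filtered for members that have not become ready. The sketch below locates the `READY` column from the header line rather than assuming a fixed position. It still assumes that the output has such a column and that ready clusters show `True`, which should be checked against your Karmada version. The helper `not_ready_clusters` is hypothetical.

```shell
# Hypothetical helper: print names of clusters whose READY column is not "True".
# Expects `kubectl get clusters` output, including the header line, on stdin.
not_ready_clusters() {
  awk '
    NR == 1 { for (i = 1; i <= NF; i++) if ($i == "READY") col = i; next }
    col && $col != "True" { print $1 }
  '
}

# Usage (commented out because it needs a running Karmada control plane):
#   kubectl --kubeconfig /etc/karmada/karmada-apiserver.config get clusters | not_ready_clusters
```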

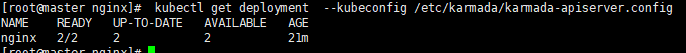

7.2 Create nginx deployment in Karmada

deployment.yaml is obtained from https://github.com/karmada-io/karmada/tree/master/samples/nginx

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

kubectl create -f /root/sample/nginx/deployment.yaml --kubeconfig /etc/karmada/karmada-apiserver.config

kubectl get deployment --kubeconfig /etc/karmada/karmada-apiserver.config |

7.3 Create PropagationPolicy that will propagate nginx to member cluster

propagationpolicy.yaml is obtained from https://github.com/karmada-io/karmada/tree/master/samples/nginx

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

kubectl create -f /root/sample/nginx/propagationpolicy.yaml --kubeconfig /etc/karmada/karmada-apiserver.config |
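For orientation, a PropagationPolicy selects resources and names the clusters that receive them. The sketch below writes a minimal policy to a file. It is an illustration, not a copy of the upstream sample, which may contain additional scheduling fields. The policy name `nginx-propagation`, the Deployment name `nginx`, and the member names `member1` and `member2` are assumptions, and `write_sample_policy` is a hypothetical helper.

```shell
# Hypothetical helper: write a minimal PropagationPolicy to the given path.
# Assumptions: the Deployment is named "nginx" and the members are
# registered as "member1" and "member2".
write_sample_policy() {
  cat > "$1" <<'EOF'
apiVersion: policy.karmada.io/v1alpha1
kind: PropagationPolicy
metadata:
  name: nginx-propagation
spec:
  resourceSelectors:
    - apiVersion: apps/v1
      kind: Deployment
      name: nginx
  placement:
    clusterAffinity:
      clusterNames:
        - member1
        - member2
EOF
}

# Usage: write the policy to a temporary file and show it.
policy=$(mktemp)
write_sample_policy "$policy"
cat "$policy"
rm -f "$policy"
```

Such a file would be applied with the same `kubectl create -f ... --kubeconfig /etc/karmada/karmada-apiserver.config` command shown in this step.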

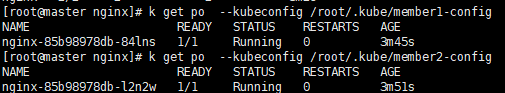

7.4 Check the deployment status from Karmada

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

kubectl get po --kubeconfig /root/.kube/member1-config

kubectl get po --kubeconfig /root/.kube/member2-config |

Reference

https://lazytoki.cn/index.php/archives/4/

...

https://zhuanlan.zhihu.com/p/368879345

https://docs.docker.com/engine/install/centos/

...

https://docs.cilium.io/en/stable/gettingstarted/k8s-install-kubeadm/

https://karmada.io/docs/get-started/nginx-example

Installation worker cluster

...

This section installs docker, configures the cgroup driver of docker as systemd, and confirms the driver.

2.1 uninstall old docker

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

yum -y remove docker docker-client docker-client-latest docker-common docker-latest docker-latest-logrotate docker-logrotate docker-engine docker-ce docker-ce-cli |

2.2 install docker

Execute command

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

yum -y install docker-ce-20.10.11 |

...

Uninstall Guide

Troubleshooting

1. Network problem: when the worker cluster uses the default communication mode of calico, access between nodes is blocked. After many attempts, calico in vxlan mode and flannel are both feasible at present.

2. Disaster recovery scheduling (test scenario 2) requires the descheduler component to be installed on the karmada control plane.

Maintenance

Blue Print Package Maintenance

- Software maintenance: N/A

- Hardware maintenance: N/A

...