...

- Generic: Infrastructure orchestration shall be as generic as possible. Even though this work is being done on behalf of one BP (MICN), infrastructure orchestration shall be common across all BPs in the ICN family. It shall also be possible to use this component in BPs outside the ICN family.

- Leverage open source projects:

- Leverage Cluster-API for infra-global-controller. Identify gaps, provide fixes, and provide a UI/CLI for a good user experience.

- Leverage Ironic and metal3 for infra-local-controller to do bare-metal provisioning. Identify any gaps to make it work with Cluster-API.

- Leverage KuD in infra-local-controller to do Kubernetes installation. Identify any gaps and fix them.

- Figure out ways to use the bootstrap machine as a workload machine as well (not in scope for Akraino R2).

- Flexible and Extensible:

- Adding a new package in the future shall be a simple addition.

- Interaction with the workload orchestrator shall not be limited to K8s. It shall be possible to talk to any workload orchestrator.

- Data Model driven:

- Follow CRD models as much as possible.

- Security:

- Infra-global and infra-local controllers may have privileged access to secrets, keys, etc. These shall be protected by placing them in a HW RoT, or at a minimum by ensuring that they are never stored in the clear on HDDs/SSDs.

- Redundancy: Infra-global controller shall be redundant, especially if it is used to manage multiple sites.

- Performance:

- First-time installation or patching across multiple servers in a site shall complete in minutes: under 10 minutes for a 10-server site. (This may require running jobs in parallel, i.e. multi-threading in infra-local-controller.)

- Patching across sites shall complete in under 10 minutes for 100 sites.
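The parallelism mentioned in the performance requirement could look roughly like the following. This is only a minimal sketch: `patch_server`, `patch_site`, and the host names are hypothetical placeholders, not part of infra-local-controller, and the per-server job is stubbed out.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def patch_server(host: str) -> str:
    """Hypothetical stand-in for the real per-server install/patch job."""
    return f"{host}: patched"


def patch_site(hosts, max_workers=10):
    """Run the per-server job for every host in parallel and collect results."""
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Map each submitted future back to the host it belongs to.
        futures = {pool.submit(patch_server, host): host for host in hosts}
        for future in as_completed(futures):
            host = futures[future]
            try:
                results[host] = future.result()
            except Exception as exc:  # one failed server must not abort the site
                results[host] = f"failed: {exc}"
    return results


if __name__ == "__main__":
    site = [f"server-{i}" for i in range(10)]  # assumed 10-server site
    for host, status in sorted(patch_site(site).items()):
        print(host, status)
```

With one worker per server, the total time for a site is bounded by the slowest server rather than the sum of all servers, which is what makes a fixed per-site time budget plausible.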

Architecture:

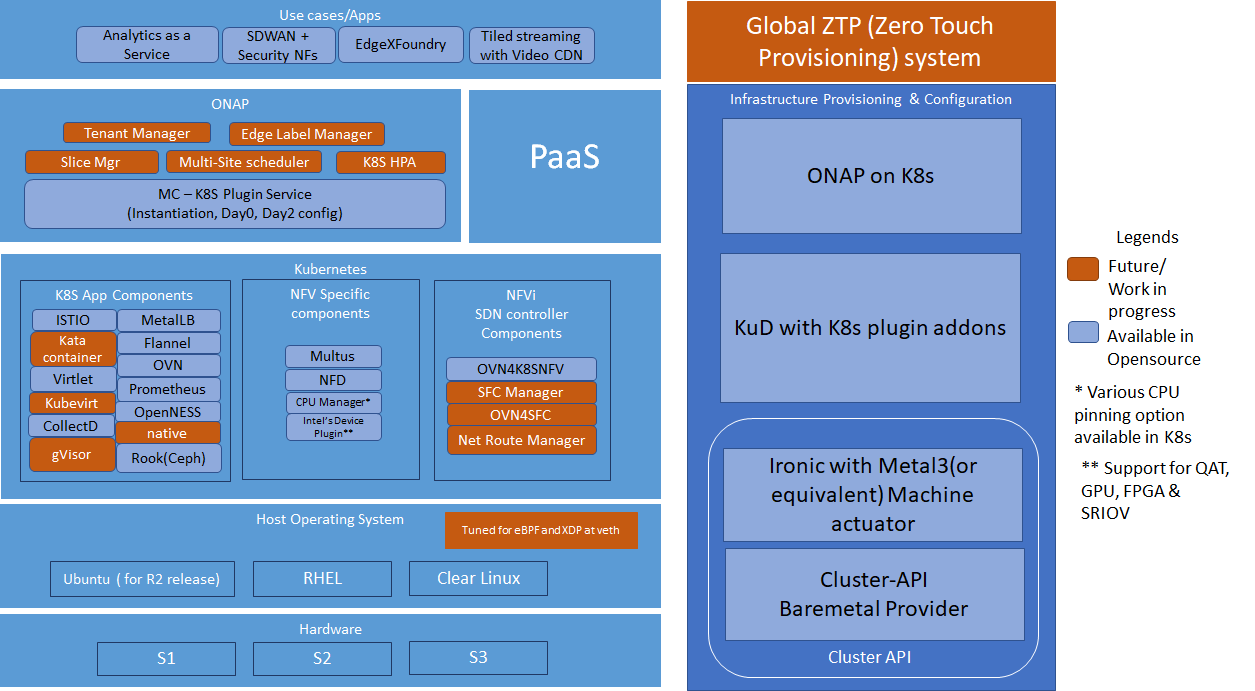

Simplified ICN layout (WIP)

Blocks and Modules

...

Global ZTP:

The Global ZTP system is used for infrastructure provisioning and configuration in the ICN family. It is subdivided into 3 deployments: Cluster-API, KuD, and ONAP on K8s.

Cluster-API & Baremetal Operator

One of the major challenges for a cloud admin managing multiple clusters in different edge locations is coordinating the control-plane configuration of each cluster remotely and managing patches, updates, and upgrades across multiple machines. Cluster-API provides declarative APIs to represent clusters and the machines inside a cluster. It abstracts the common logic found across cluster providers such as GKE, AWS, and vSphere, for example grouping machines for an upgrade and autoscaling.

In the ICN family stack, the Cluster-API bare-metal provider is the Metal3 Baremetal Operator. It acts as a machine actuator that uses Ironic to provide a K8s API for managing the physical servers that run Kubernetes clusters on bare-metal hosts. Cluster-API manages the Kubernetes control plane through the Cluster CRD, and Kubernetes nodes (host machines) through Machine, MachineSet, and MachineDeployment CRDs. It also has an autoscaler mechanism that checks the MachineSet CRD, which is analogous to a K8s ReplicaSet, and the MachineDeployment CRD, which is analogous to a K8s Deployment. MachineDeployment CRDs are used to update or upgrade software drivers in

The Cluster-API provider with the Baremetal Operator is used to provision physical servers and to initialize the Kubernetes cluster with the user's configuration.
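The ReplicaSet analogy for MachineSet can be illustrated with a toy reconcile step. This is a sketch of the general idea only, not Cluster-API controller code; the `MachineSet` class, its fields, and the naming scheme below are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class MachineSet:
    """Toy model: a desired replica count plus the machines that exist now."""
    name: str
    replicas: int
    machines: list = field(default_factory=list)


def reconcile(ms: MachineSet):
    """Return the create/delete actions needed to reach the desired count."""
    actions = []
    diff = ms.replicas - len(ms.machines)
    if diff > 0:
        # Scale up: pick new names that do not clash with existing machines.
        existing = set(ms.machines)
        index = 0
        while diff > 0:
            candidate = f"{ms.name}-{index}"
            if candidate not in existing:
                actions.append(("create", candidate))
                diff -= 1
            index += 1
    elif diff < 0:
        # Scale down: drop the surplus machines at the end of the list.
        for machine in ms.machines[ms.replicas:]:
            actions.append(("delete", machine))
    return actions


if __name__ == "__main__":
    print(reconcile(MachineSet("edge", 3, ["edge-0"])))
    print(reconcile(MachineSet("edge", 1, ["edge-0", "edge-1"])))
```

The point of the declarative model is that the user only edits the desired count; the controller derives the individual machine operations, in the same way a ReplicaSet derives pod operations.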

KuD

Kubernetes deployer (KuD) in ONAP can be reused to deploy the K8s App components (as shown in fig. II), NFV Specific components, and the NFVi SDN controller in the edge cluster. In the R2 release, KuD will be used to deploy K8s addons such as Prometheus, Rook, Virtlet, OVN, NFD, and Intel device plugins in the edge location (as shown in figure I). In the R3 release, KuD will evolve into the "ICN Operator" to install all K8s addons.

ONAP on K8s

One of the highly available Kubernetes clusters provisioned and configured by Cluster-API will be used to deploy ONAP on K8s. The ICN family uses ONAP Operations Manager (OOM) to deploy ONAP. OOM provides a set of Helm charts for installing ONAP on a K8s cluster. The ICN family will create the OOM installation and automate the ONAP installation once a Kubernetes cluster has been configured by Cluster-API.

ONAP Block and Modules:

ONAP will be the Service Orchestration Engine in the ICN family. It is responsible for VNF life cycle management, tenant management, and tenant resource quota allocation, and for managing the Resource Orchestration Engine (ROE) to schedule VNF workloads with multi-site scheduler awareness and Hardware Platform Awareness (HPA).

Kubernetes Block and Modules:

Kubernetes will be the Resource Orchestration Engine in the ICN family, managing network, storage, and compute resources for VNF applications. The ICN family will use multiple container runtimes, such as Virtlet, Kata Containers, KubeVirt, and gVisor. Each release supports different container runtimes, chosen according to the use cases in focus.

The Kubernetes module is divided into 3 groups: K8s App components, NFV Specific components, and NFVi SDN controller components. All of these components will be installed using KuD addons.

K8s App components: This block contains the K8s storage plugins, the container runtime, OVN for networking, the service proxy, and Prometheus for monitoring, and is responsible for application management.

NFV Specific components: This block is responsible for K8s compute management, supporting both software and hardware acceleration (including network acceleration) with CPU pinning and device plugins such as QAT, FPGA, SRIOV, and GPU.

NFVi SDN controller components: This block is responsible for managing the SDN controller and for providing additional features such as Service Function Chaining (SFC) and a network route manager.

Apps/ Use cases:

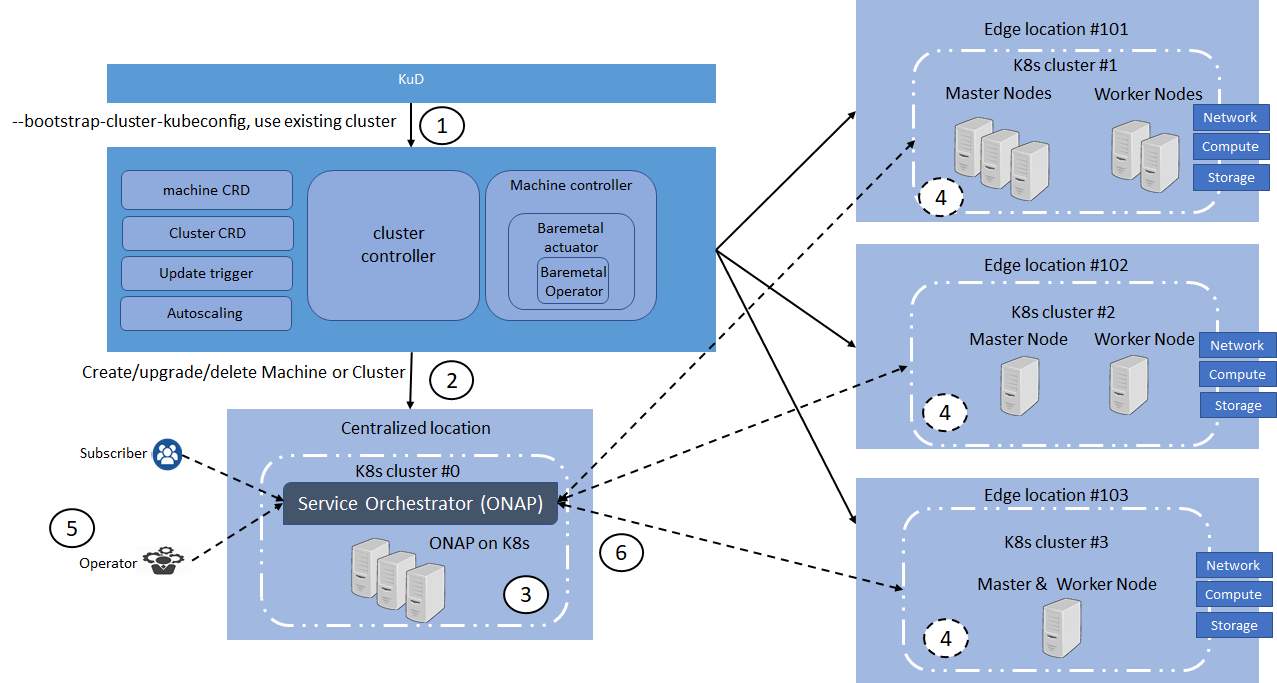

ICN Infrastructure layout:

- Use the clusterctl command to create the cluster with the cluster-api-provider-baremetal provider

- The Machine CRD and Cluster CRD are configured to instantiate 4 clusters, #0 to #3

- The automation script for OOM deployment is triggered to deploy ONAP on cluster #0

- The KuD addons script is triggered in all edge locations to deploy the K8s App components, NFV Specific components, and the NFVi SDN controller

- A subscriber or operator requests deployment of a workload through Service Orchestration

- ONAP should place the workload in an edge location based on multi-site scheduling and K8s HPA
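The last step, placing a workload according to hardware capabilities, can be sketched as a simple feature match. This is an illustration under stated assumptions, not ONAP's scheduler: the `place_workload` function, the site names, and the feature labels are all hypothetical.

```python
def place_workload(required, sites):
    """Return the sites whose advertised features cover every requirement.

    required: set of feature labels the workload needs.
    sites: dict mapping site name to the set of features it advertises.
    """
    return [name for name, features in sites.items() if required <= features]


if __name__ == "__main__":
    # Hypothetical edge clusters and the hardware features they expose.
    edge_sites = {
        "cluster-1": {"sriov", "qat"},
        "cluster-2": {"sriov"},
        "cluster-3": {"sriov", "qat", "fpga"},
    }
    print(place_workload({"sriov", "qat"}, edge_sites))
```

A real scheduler would also weigh capacity, tenant quota, and location, but the filtering step shown here is the part that depends on the hardware features each edge cluster exposes.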

Software components

| Components | Link / Version | Akraino Release target |
|---|---|---|
| Cluster-API | | R2 |
| Cluster-API-Provider-bare metal | | R2 |
| Provision stack - Metal3 | | R2 |
| Host Operating system | Ubuntu 18.04 | R2 |
| QuickAssist Technology (QAT) drivers | Intel® C627 Chipset - https://ark.intel.com/content/www/us/en/ark/products/97343/intel-c627-chipset.html | R3 |
| NIC drivers | | R3 |
| ONAP | Latest release 3.0.1-ONAP - https://github.com/onap/integration/ | R2 |
| Workloads | OpenWRT SDWAN - https://openwrt.org/ | R3 |
| KUD | | R2 |
| Kubespray | | R2 |
| K8s | | R2 |
| Docker | https://github.com/docker - 18.09 | R2 |
| Virtlet | | R2 |
| SDN - OVN | 0.3.0 | R2 |
| OpenvSwitch | | R2 |
| Ansible | https://github.com/ansible/ansible - 2.7.10 | R2 |
| Helm | https://github.com/helm/helm - 2.9.1 | R2 |
| Istio | https://github.com/istio/istio - 1.0.3 | R2 |
| Kata container | | R3 |
| Kubevirt | https://github.com/kubevirt/kubevirt/ - v0.18.0 | R3 |
| Collectd | | R2 |
| Rook/Ceph | | R3 |
| MetalLB | | R3 |
| Kube - Prometheus | | R3 |
| OpenNESS | Will be updated soon | R3 |
| Multi-tenancy | | R2 |
| Knative | | R3 |
| Device Plugins | https://github.com/intel/intel-device-plugins-for-kubernetes - | R2 |
| Node Feature Discovery | | R2 |
| CNI | https://github.com/coreos/flannel/ - release tag v0.11.0; https://github.com/containernetworking/cni - release tag v0.7.0; https://github.com/containernetworking/plugins - release tag v0.8.1; https://github.com/containernetworking/cni#3rd-party-plugins - Multus v3.3tp, SRIOV CNI v2.0 (with SRIOV Network Device plugin) | R2 |
| Conformance Test for K8s | | R2 |

Gaps (WIP)

| Release | Block | Components | Identified Gaps | Initial thought |
|---|---|---|---|---|
| R2 | ZTP | Cluster-API | Cluster upgrade is not yet supported | |
| | | | No node repair mechanism | |
| R3 | | | | |

Solution

Overview

Flows & Sequence Diagrams

...