Pre-Installation Requirements for REC Cluster

Hardware Requirements:

- Minimum of 3 nodes.

- Total Physical Compute Cores: 60 (120 vCPUs)

- Total Physical Compute Memory: 192GB minimum per node

- Total SSD-based OS Storage: 2.8 TB (6 x 480GB SSDs)

- Total Application-based Raw Storage: 5.7 TB (6 x 960GB SSDs)

- Networking Per Server: Apps - 2 x 25GbE (per server) and DCIM - 2 x 10GbE + 1 x 1GbE (shared)
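The storage and vCPU totals above follow from the per-drive and per-core figures. The short check below is only a sketch: it assumes decimal units (1 TB = 1000 GB) and two hardware threads per physical core, and the variable names are illustrative.

```python
# Sanity-check the hardware totals quoted in the list above.
# Assumptions: decimal units (1 TB = 1000 GB), 2 hardware threads per core.
os_ssd_count, os_ssd_gb = 6, 480
app_ssd_count, app_ssd_gb = 6, 960
physical_cores, threads_per_core = 60, 2

os_storage_tb = os_ssd_count * os_ssd_gb / 1000      # listed as 2.8 TB
app_storage_tb = app_ssd_count * app_ssd_gb / 1000   # listed as 5.7 TB
vcpus = physical_cores * threads_per_core            # listed as 120 vCPUs

print(f"OS storage:  {os_storage_tb:.2f} TB")
print(f"App storage: {app_storage_tb:.2f} TB")
print(f"vCPUs:       {vcpus}")
```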

BIOS Requirements:

- BIOS set to Legacy (Not UEFI)

- CPU Configuration/Turbo Mode Disabled

- Virtualization Enabled

- IPMI Enabled

- Boot Order set with Hard Disk listed first.

Network Requirements:

The REC cluster requires the following segmented (VLAN), routed networks accessible by all nodes in the cluster:

- External (OAM) Network

- OOB (iLO/iDRAC) network(s)

- Storage/Ceph network(s)

- Internal network for Kubernetes connectivity

- NTP and DNS accessibility

The REC installer will configure NTP and DNS using the parameters entered in the user_config.yaml. However, the network

must be configured for the REC cluster to be able to access the NTP and DNS servers prior to the install.
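One way to confirm NTP and DNS reachability before the install is a small probe run from a host on the same networks. The sketch below is not part of the REC tooling. It assumes the NTP server answers a plain SNTP request on UDP port 123 and that the DNS server accepts TCP connections on port 53; the function names are illustrative.

```python
import socket
import struct

NTP_PORT = 123
DNS_PORT = 53


def build_sntp_request() -> bytes:
    """Return a 48-byte SNTP client request (LI=0, version=3, mode=3)."""
    return struct.pack("!B47x", 0x1B)


def ntp_reachable(server: str, timeout: float = 3.0) -> bool:
    """Send one SNTP request over UDP and report whether a reply arrives."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        try:
            sock.sendto(build_sntp_request(), (server, NTP_PORT))
            reply, _ = sock.recvfrom(512)
        except OSError:  # includes timeouts and unreachable networks
            return False
    return len(reply) >= 48


def dns_reachable(server: str, timeout: float = 3.0) -> bool:
    """Report whether a TCP connection to the DNS port can be opened."""
    try:
        with socket.create_connection((server, DNS_PORT), timeout=timeout):
            return True
    except OSError:
        return False
```

For example, `ntp_reachable("216.239.35.4")` and `dns_reachable("8.8.8.8")` probe two of the servers used in the sample configuration. A `False` result only shows that no reply was received within the timeout; it does not identify the cause.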

User_config.yaml

The user_config.yaml file contains the details of your REC cluster, such as the required network CIDRs, usernames, passwords, and DNS and NTP server IP addresses. The REC configuration is flexible, but there are dependencies. For example, using DPDK requires a network profile of type ovs-dpdk, a performance profile with CPU pinning and hugepages, and a link to that performance profile on the compute node.

Note: The version number listed in user_config.yaml must be 2.0.0.
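The version rule and the DPDK dependency described above can be checked before deployment. The following is a minimal sketch, not the installer's own validation. It assumes the file has already been loaded into a dictionary (for example with PyYAML's `yaml.safe_load`). The keys `cpu_pinning` and `hugepages` are hypothetical placeholders, because the exact performance profile key names are not given here.

```python
REQUIRED_VERSION = "2.0.0"

# Hypothetical key names: adjust to the real performance profile schema.
PINNING_KEY = "cpu_pinning"
HUGEPAGES_KEY = "hugepages"


def uses_ovs_dpdk(network_profile: dict) -> bool:
    """True if any provider network interface in the profile is ovs-dpdk."""
    interfaces = network_profile.get("provider_network_interfaces", {})
    return any(i.get("type") == "ovs-dpdk" for i in interfaces.values())


def check_config(config: dict) -> list:
    """Return a list of problems; an empty list means none were detected."""
    problems = []
    if str(config.get("version")) != REQUIRED_VERSION:
        problems.append(f"version must be {REQUIRED_VERSION}")

    net_profiles = config.get("network_profiles", {})
    perf_profiles = config.get("performance_profiles", {})
    for host, spec in config.get("hosts", {}).items():
        host_nets = [net_profiles.get(n, {}) for n in spec.get("network_profiles", [])]
        if not any(uses_ovs_dpdk(p) for p in host_nets):
            continue
        # The host uses DPDK, so it must link a suitable performance profile.
        linked = [perf_profiles.get(p, {}) for p in spec.get("performance_profiles", [])]
        if not any(PINNING_KEY in p and HUGEPAGES_KEY in p for p in linked):
            problems.append(
                f"{host}: ovs-dpdk needs a linked performance profile "
                "with CPU pinning and hugepages"
            )
    return problems
```

Returning a list of messages instead of raising on the first problem lets all issues be reported in one pass.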

---
version: 2.0.0
name: rec-sample
description: REC Deployment on Nokia OpenEdge Server
time:
    ntp_servers: [216.239.35.4, 216.239.35.5]
    zone: America/New_York
users:
    admin_user_name: cloudadmin
    admin_user_password: "$9$bl0ck=959000$V07qrQ4tKMbDTWTj$wl9cTTqThWTEWm33THH29SZeIGU66K2FHffF$1wIvh9CACKJ/HvZFGdbedw79ag2.2AqtDRoTTTCWK8Eq0kQn/"
    initial_user_name: myadmin
    initial_user_password: FY625czv5R
    admin_password: ycjPSE4mA
networking:
    dns: [ 8.8.8.8, 8.8.4.4 ]
    mtu: 9000
    infra_external:
        network_domains:
            rack-1:
                cidr: 192.168.10.0/24
                vlan: 141
                gateway: 192.168.10.1
                ip_range_start: 192.168.10.210
                ip_range_end: 192.168.10.213
    infra_storage_cluster:
        network_domains:
            rack-1:
                cidr: 192.169.10.0/24
                ip_range_start: 192.169.10.211
                ip_range_end: 192.169.10.213
                vlan: 142
    infra_internal:
        network_domains:
            rack-1:
                cidr: 192.167.10.0/24
                ip_range_start: 192.167.10.211
                ip_range_end: 192.167.10.250
                vlan: 144
    provider_networks:
        providerInternal:
            vlan_ranges: "2002:2003"
        providerExternal:
            vlan_ranges: "2004:2005"
        providerSriov:
            vlan_ranges: "2006:2008"
caas:
    docker_size_quota: 2G
    helm_operation_timeout: 900
    docker0_cidr: 172.17.0.1/16
    instantiation_timeout: 60
    helm_parameters: { "registry_url": "registry.kube-system.svc.nokia.net" }

    encrypted_ca: ["U2FsdGVkX1+iaWyYk3W01IFpfVdughR5aDKo2NpcBw2UStYnepHlr5IJD3euo1lS\n7agR5K2My8zYdWFTYYqZncVfYZt7Tc8zB2yzATEIHEV8PQuZRHqPdR+/OrwqjwA6\ni/R+4Ec3Kko6eS0VWvwDfdhhK/nwczNNVFOtWXCwz/w7AnI9egiXnlHOq2P/tsO6\np3e9J6ly5ZLp9WbDk2ddAXChnJyC6PlF7ou/UpFOvTEXRgWrWZV6SUAgdxg5Evam\ndmmwqjRubAcxSo7Y8djHtspsB2HqYs90BCBtINHrEj5WnRDNMR/kWryw1+S7zL1G\nwrpDykBRbq/5jRQjqO/Ct98yNDdGSWZ+kqMDfLriH4pQoOzMcicT4KRplQNX2q9O\nT/7CXKmmB3uBxM7a9k2LS22Ljszyd2vxth4jA+SLNOB5IT8FmfDY3PvNnvKaDGQ4\nuWPASyjpPjms3LwsKeu+T8RcKcJJPoZMNZGLm/5jVqm3RXbMvtI0oEaHWsVaSuwX\nnMgGQHNHop+LK+5a0InYn4ZJo9sbvrHp9Vz4Vo+AzqTVXwA4NEHfqMvpphG+aRCb\ncPJggJqnF6s5CAPDRvwXzqjjVQy2P1/AhJugW7HZw3dtux4xe3RZ+AMS2YW+fSi1\nIxAGlsLL28KJMc5ACxX5cuSB/nO19afpf6zyOPIk0ZVh8+bxmB4YBRzGLTSnFNr3\ndauT9/gCU85ThE93rIfPW6PRyp9juEBLjgTpqDQPn5APoJIIW1ZQWr6tvSlT04Hc\nw0HZ7EcAC7EmmaQYTyL6iifHiZHop9g2clXA0MU9USQggMOKxFrxEyF4iWdsCCXP\nfTA3bgzvlvqfk9p2Cu9DOmRHGLby2YSj+oghsFDCfhfM1v2Ip2YGPdJM6y7kNX19\nkBpV4Rfcw0NCg2hhXbHZ7LtejlQ1ht8HnmY5/AnJ/HRdnPb+fcdgS9ZFcGsAH2ze\nSe7hb+MNp80JsuX4A+jOjBacjwL+KbX5RDJp//5dEmqJDkbfMctL1KukBaDrbpci\np/TeVmLhwlQogeVuF/Y5vCokq6M5+f28jFJ+R+P2oBY3fAvBhmd+ZmGbUWXxmMF+\nV3mpFkYqXWS+mtVh8Fs0nhrCkqRLTmBj5UNhsMcZ4vGfiu+dPMQi62wa6GoGVjus\nIj/Upal9RYwthSykUKcWu0KEB929/e4Sz0Y6s3Pzy1+xdmKDPtaBUH9UT3LjMVvY\nordeL0UjKYqWcvpb7Vfma3UD0tz6n/CyHNDVhA/FioadEy6iJvL316Kf3to69cN+\nvKWav/IeazxdhBSbatPKN3qwESkzr3el2yrdZL4qehflRMp0rFuzZfRB69UFPbgq\nkTQlJHb0OaJTt6er/XfjtMZoctW7xtYf58CqMJ06QxK5kLKc5Yib73cVyzhmmIz4\nEtUs10QCA5AihHgVES8ZrgZKWDhR+pmFPG3eVitJoUeDNEe9vVEEX8TiWu+H1OHG\n8UyCKFyyPCj5OwVbwGSgQg=="]
    encrypted_ca_key: ["U2FsdGVkX1+WlNST+WkysFUHYAPfViWe01tCCQsXPsWsUskB4oNNC78bXdEv33+3\ncDlubc9F0ZiHxkng70LKCFV5KQneHfg6c3lPaM4zwaJ34UCf80riIoYVozxqnK/S\nTAs0i0rJmzRz4hkTre4xV0I2ZucW3gquP4/s1yUK3IJF84SDfEi26uPsBOrUpU9Q\nIBxY2rldK+yZUZUFehQb82dvin0CSiXDY63cYLJMYEwWBfJEeY+RGMuZuuGp3qgy\nyVfByZ5/kwF9qa6+ToYw2zXiokGFfBqiAFnXU7Q6Wcu2qndMQoiy3jFU2DjEQi6N\nVgZHzrPUUUrmQGALyA5blVvNHVQyq4rmMmsTEI02xclz8m7Yzd/HEFo/C5z5x+My\n2SOIBIRCy6bTSpzU7iixl5U6r5/XfrfQoJ+OwRq1/P2QmJ2swqzcLOUpDlquDeuP\nd46ceWMO8nlimRps4cX5nQRI1SLaypH1rRiQpnIP7q+jrHEco6wStc458rzX1WxW\nhPMjnnlVhH4sJNqh5c5/1BvzSBdnx0qIBcFA6fR8XfL//DmRFsAfRaxVVWadpusc\nXfh4LNNqR9HmoNH6yfBpd66yBYsjFbWip0WKMwdhNBqN1a94OFvRS4+iUfskjC2w\n4w4YjPluRBxI5t9eT4wX8D328ikgP4ZQrPdUZoDpLThhRZ62pTOknOeVj+C7799O\nEbopqGg+6BIXZHakmzB6I/fyjthoLBbxpyqNvKlGGamMNI3d7wq1vwTHch5QLO+w\n5fuRqoIRUtGscSQXp8EOb4kiaxhXXJLkVJw7auOdqxqxQbIf+dt2ViwdyFNjdHz8\ngPFcAom0GO+T7xHMF1H6xqUXkB4QzTK934pMVoIwu5MezBlz8bxj5+EeF7Ptkdnj\nq4rwihGY7aEhPrXVoq19tsbMYwDGZQvbTKtWDOxrD6ruTDTwZxVZcEOAX5KCF0Oq\nqRcrCBcLNERm4FSAgUK90v71TNQoMpVea3/01Ec8GbHJfozvrmAVqBpbF0ajlM1/\nZvGrnmVrJEk/PelCEu+Ni9zrn7DxGZqJ7lbcDU7Nq/18KNvOQah4Ryh9aDKVSD4r\nvgZKzIHPRgKoHTxTZ2uP1LBgK2Ux1RjhlAcZFAmWYxg/qluxnHKCimZ04rIjI0if\nN0wSI7uh8TsyidZv+iKpG+JqW5oe7R8xLlU3ceFllkghAGVRn/UyirGXYPzxXbfB\naphYFBuj6FbtdisM7euX2A9F2OUM2reditR/z6q1Ety1xX9aNudQJ1YcL6yr7pGI\nIX3NANlp2Ra9Fr95ne9aEnwdMmGsQ5DjxHczEc3EcDEbFuH6C/XDzYqtOGyFe/pI\nZgPSiys157GB/GzSfOsErvA+EVWKmU8PiLl461s/OV25m0thG5+03yXKRsymX371\nXAg+hHqe2x5PRjwuUDmruEM/P3LHQeMb4YdhI3DfFyUExtJ/Q/38GgB1XNAuDu0R\n3EyV01Umm6IrYDQWpngjGGmiimOdpLFHkQbxDNiRr8QX5eshAbVlI19DINCiRl/u\njh4TqRZMl6YI4oQZDYqCrBrqZLljm/DBhgvr2jnq9ed3dIKlHbrkw3sjBuwINZjw\naduL3U+WTUvUCY/VtlxJZdU1kVLwSnkDh+8HK/eZ7AuHWjQjD9JzArCo5CCMMFJL\noY0IKxzhhP+4BmaMabwcuooxMjWR3fu3T0sgcTEZtG61wcSUDW0gw6c5QAxmq7It\nqzP2b1eNPp05oMJ6ALIe+8MQMM94HigbSiLB3/rFS8KkhZcdJliBc+Ig6TBFx9QW\nS0Jh4WgJn0B5laiI7DRp0E9bUUnLLEFTdA9P9T1DcIwngPuv6IYNQdzYluaX6cvy\nNhCH+XdbaFkA9KOsp69uZWqzweoejAo24Cj71J9H4yMzBDWi7/fL4YQqjS6zC9JY\ny3zhk8VGi9SYtMB1bPdmxBlCyLElZ6qf/cyjsWN89oTTITCYbSuIrB4piJH35t17\nd7eFZ7QXMampJzCQyAcKsxTDVdeKhHjVxsnSWuvmlR31Hmrxw3yQQH2pbGLcHBWJ\ngz+/xpgxh5x0dGzqOKqgfGOtBOSpzHFMuuoXToYbcAIwMVRcTPnVR7B1kOm2OiLG\nhuOxX29DypSM9HjsmoeffJaUoZ2wvBK4QZNpe5Jb80An/aO+8/oKmtaZgJqectsM\nfrVSLZtdPnH62lPy1i5CnoFI6JkX7oficJw8YQqswRp2z5HL9cSEAiR3MOr/Yco+\njJu5IidT3u5+hUlIdZtEtA=="]

storage:
    backends:
        lvm:
            enabled: false
        ceph:
            osd_pool_default_size: 2
            enabled: true
network_profiles:
    controller_network:
        linux_bonding_options: "mode=lacp"
        ovs_bonding_options: "mode=lacp"
        bonding_interfaces:
            bond0: [enp94s0f0,enp94s0f1]
            bond1: [enp135s0f0,enp135s0f1]
        interface_net_mapping:
            bond0: [infra_internal, infra_external, infra_storage_cluster]
        provider_network_interfaces:
            bond1:
                type: ovs
                provider_networks: [ providerInternal, providerExternal ]
    compute_network:
        linux_bonding_options: "mode=lacp"
        ovs_bonding_options: "mode=lacp"
        bonding_interfaces:
            bond0: [ens94s0f0,ens94s0f1]
            bond1: [enp135s0f0,enp135s0f1]
        interface_net_mapping:
            bond0: [ infra_internal ]
        provider_network_interfaces:
            bond1:
                type: ovs
                provider_networks: [ providerInternal, providerExternal ]
performance_profiles:
    caas_cpu_profile:
        caas_cpu_pools:
            exclusive_pool_percentage: 34
            shared_pool_percentage: 66
storage_profiles:
    caas_worker_docker_profile:
        lvm_instance_storage_partitions: ["1"]
        mount_dir: /var/lib/docker
        mount_options: noatime,nodiratime,logbufs=8,pquota
        backend: bare_lvm
        lv_name: docker
    ceph_backend_profile:
        backend: ceph
        nr_of_ceph_osd_disks: 2
        ceph_pg_openstack_caas_share_ratio: "0:1"
hosts:
    controller-1:
        service_profiles: [ caas_master, storage ]
        network_profiles: [ controller_network ]
        storage_profiles: [ ceph_backend_profile ]
        performance_profiles: [ caas_cpu_profile ]
        network_domain: rack-1
        hwmgmt:
            address: 192.166.10.211
            user: root
            password: c5zgUQ6f
    controller-2:
        service_profiles: [ caas_master, storage ]
        network_profiles: [ controller_network ]
        storage_profiles: [ ceph_backend_profile ]
        performance_profiles: [ caas_cpu_profile ]
        network_domain: rack-1
        hwmgmt:
            address: 192.166.10.212
            user: root
            password: c5zgUQ6f
    controller-3:
        service_profiles: [ caas_master, storage ]
        network_profiles: [ controller_network ]
        storage_profiles: [ ceph_backend_profile ]
        performance_profiles: [ caas_cpu_profile ]
        network_domain: rack-1
        hwmgmt:
            address: 192.166.10.213
            user: root
            password: c5zgUQ6f
host_os:
    grub2_password: GRUB2_PASSWORD=grub.pbkdf2.sha512.10000.CC6F56BFCFB90C49E6E16DC7234BF4DE4159982B6D121DC8EC6BF0918C7A50E8604CA40689A8B26EA01BF2A76D33F7E6C614E6289ABBAA6944ECB2B6DEB2F3CF.4B929016A827C36142CC126EB47E86F5F98E92C8C2C924AD0C98436E4699DF7536894F69BB904FDB5E609B9A5D67E28A7D79E8521C0B0AE6C031589FA0452A21
...
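Each network domain in the sample pairs a CIDR with an IP range, and a range that falls outside its CIDR is an easy mistake to make. The sketch below uses Python's standard `ipaddress` module to check one domain. It is an illustration only, the function names are hypothetical, and it is not the validation the REC installer performs.

```python
import ipaddress


def check_ip_range(name: str, domain: dict) -> list:
    """Check that ip_range_start and ip_range_end lie inside the CIDR."""
    problems = []
    network = ipaddress.ip_network(domain["cidr"])
    start = ipaddress.ip_address(domain["ip_range_start"])
    end = ipaddress.ip_address(domain["ip_range_end"])
    for label, addr in (("ip_range_start", start), ("ip_range_end", end)):
        if addr not in network:
            problems.append(f"{name}: {label} {addr} is outside {network}")
    if start > end:
        problems.append(f"{name}: ip_range_start is after ip_range_end")
    return problems


def range_size(domain: dict) -> int:
    """Number of addresses from ip_range_start to ip_range_end, inclusive."""
    start = ipaddress.ip_address(domain["ip_range_start"])
    end = ipaddress.ip_address(domain["ip_range_end"])
    return int(end) - int(start) + 1


# Values copied from the infra_external domain in the sample above.
infra_external = {
    "cidr": "192.168.10.0/24",
    "ip_range_start": "192.168.10.210",
    "ip_range_end": "192.168.10.213",
}
print(check_ip_range("infra_external", infra_external))  # []
print(range_size(infra_external))                        # 4
```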

YAML Requirements

- YAML files must be created or edited with a Linux editor or, on Windows, with Notepad++.

- YAML does not allow tab characters for indentation. Use spaces to indent each line to the required level.

Note: You have a better chance of creating a working YAML file by editing an existing file rather than starting from scratch.
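Tabs are hard to spot in most editors. A small helper such as the following can flag them before the file is uploaded. This is a sketch, and the function name is illustrative.

```python
def find_tab_lines(text: str) -> list:
    """Return 1-based numbers of lines whose leading whitespace contains a tab."""
    bad = []
    for number, line in enumerate(text.splitlines(), start=1):
        indent = line[: len(line) - len(line.lstrip())]
        if "\t" in indent:
            bad.append(number)
    return bad


sample = "time:\n    zone: America/New_York\n\tntp_servers: []\n"
print(find_tab_lines(sample))  # [3]
```

To check a real file, pass its contents, for example `find_tab_lines(open("user_config.yaml").read())`.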

Installing REC

Accessing REC.ISO

HP Servers

Log in to the iLO for controller-1 to perform the installation.

Go to Remote Console & Media

Scroll to HTML 5 Console

- URL

http://XXX.XXX.XXX.XX:XXXX/REC_RC1/rec.iso -> Virtual Media URL

- NFS

<IP to connect for NFS file system>/<file path>/rec.iso

Check “Boot on Next Reset” -> Insert Media

Reset System

Dell Servers

Go to Configuration/Virtual Media

Scroll down to Remote File Share and enter the URL for the ISO into the Image File Path field.

URL:

http://204.127.189.10:8090/dell_support/rec.iso

or NFS

<IP to connect for NFS file system>/<file path>/rec.iso

Select Connect.

Open Virtual Console, and go to Boot

Set Boot Action to Virtual CD/DVD/ISO

Then Power/Reset System

Nokia OpenEdge Servers

<NOKIA INSTRUCTIONS NEEDED FOR NFS/URL>

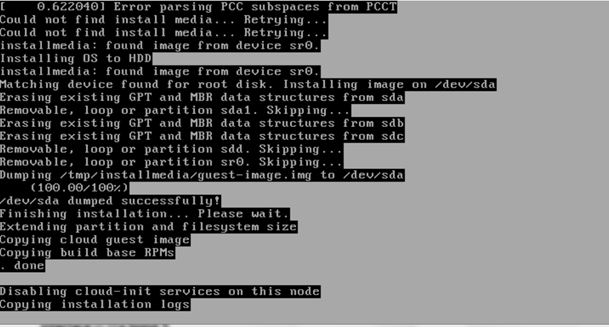

After rebooting, the installation will bring up the Akraino Edge Stack screen.

The first step is to clean all the drives discovered before installing the ISO image.

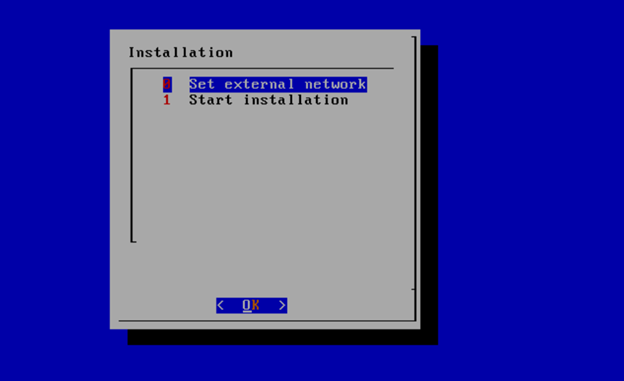

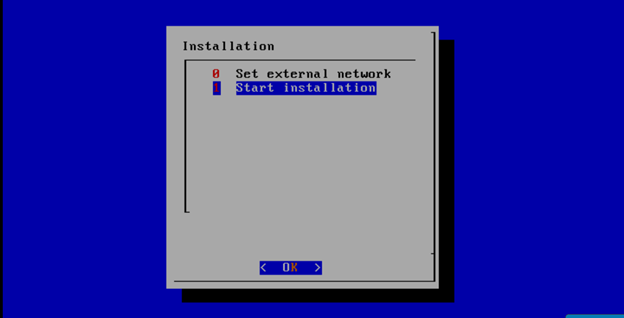

In the Installation window, select “0 Set external network” and press OK.

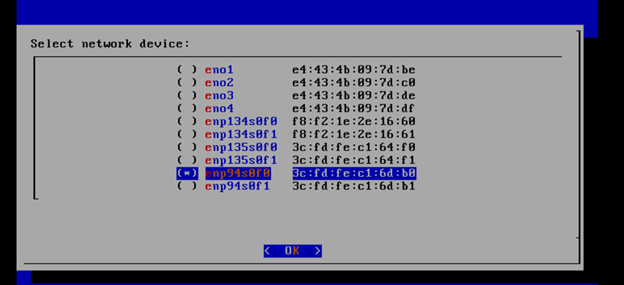

Arrow down to the network interface to be used for the external network and press the spacebar to select it.

If using bonded NICs, select the first interface in the bond.

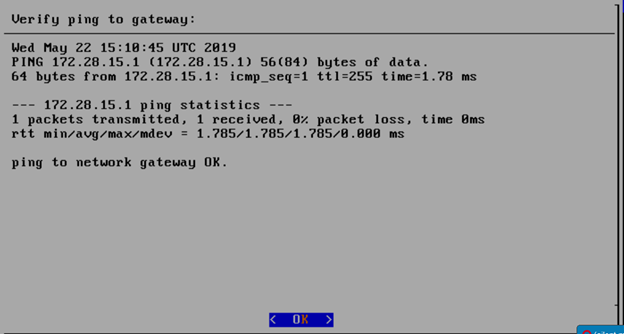

Enter the external IP address with CIDR for controller-1: 172.28.15.211/24

Enter the gateway IP address for the external IP address just entered: 172.28.15.1

Enter the VLAN number: 141
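The three values entered above have to agree with each other: the gateway must sit in the same subnet as the address, and the VLAN number must be a valid 802.1Q ID (1 to 4094). The sketch below checks this before typing the values into the installer. It is a sketch only, and the function name is hypothetical.

```python
import ipaddress


def check_external_settings(address_cidr: str, gateway: str, vlan: int) -> list:
    """Return a list of problems with the external network settings."""
    problems = []
    iface = ipaddress.ip_interface(address_cidr)
    gw = ipaddress.ip_address(gateway)
    if gw not in iface.network:
        problems.append(f"gateway {gw} is not in {iface.network}")
    if gw == iface.ip:
        problems.append("gateway and host address are identical")
    if not 1 <= vlan <= 4094:
        problems.append(f"VLAN {vlan} is outside the valid range 1-4094")
    return problems


print(check_external_settings("172.28.15.211/24", "172.28.15.1", 141))  # []
```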

The installation will check the link and connectivity of the IP addresses entered.

If the connectivity test passes, the Installation window returns.

Select “1 Start installation” and press OK.

After selecting Start Installation, you will arrive at a CentOS login screen.

Uploading user_config.yaml

From your RC or jump server, use scp (or sftp) to copy your user_config.yaml to the /etc/userconfig directory on controller-1.

Initial credentials: root/root.

scp user_config.yaml root@<controller-1 ip address>:/etc/userconfig/

Start Deployment

Log in to controller-1 (root/root) and go to /opt/start-menu

Run ./start_menu_service.sh

Monitoring Deployment Progress/Status

You may monitor the CaaS deployment by tailing the logs in /srv/deployment/log/.

There are two log files:

bootstrap.log

cm.log

tail -f /srv/deployment/log/cm.log

tail -f /srv/deployment/log/bootstrap.log

Note: When the deployment to all the nodes has completed, “controller-1” will reboot automatically.

Verifying Deployment

A post installation verification is required to ensure that all nodes and services were properly deployed.

Establish an SSH connection to the controller’s VIP address and log in with administrative rights.

Example Needed

1. Verify deployment success

Enter the following command:

tail /srv/deployment/log/bootstrap.log

You should see: Installation complete, Installation Succeeded.
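The same check can be scripted, for instance when verifying several clusters. The sketch below assumes the success message contains the text "Installation Succeeded", as quoted above. The exact wording in a given release may differ, so treat the marker as an assumption.

```python
from pathlib import Path

BOOTSTRAP_LOG = Path("/srv/deployment/log/bootstrap.log")
SUCCESS_MARKER = "Installation Succeeded"  # assumed wording, taken from the text above


def deployment_succeeded(log_text: str, tail_lines: int = 10) -> bool:
    """Return True if the success marker appears in the last few log lines."""
    tail = log_text.splitlines()[-tail_lines:]
    return any(SUCCESS_MARKER in line for line in tail)
```

On the controller this could be called as `deployment_succeeded(BOOTSTRAP_LOG.read_text())`. The default of 10 lines mirrors what `tail` prints without options.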

2. Confirm active state of required services

Enter the following commands:

systemctl status --no-pager docker.service

systemctl status --no-pager kubelet.service

Example

systemctl status --no-pager docker.service

* docker.service - Docker Application Container Engine

Loaded: loaded (/usr/lib/systemd/system/docker.service; enabled; vendor preset: enabled)

Active: active (running)
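When checking many nodes, the "Active:" line can be extracted programmatically instead of being read by eye. The following sketch parses text in the format shown in the example. The function name is illustrative, and the sample text is the abbreviated output from above.

```python
import re


def service_is_running(status_output: str) -> bool:
    """True if the text contains an 'Active: active (running)' line."""
    pattern = r"^\s*Active:\s+active \(running\)"
    return re.search(pattern, status_output, re.MULTILINE) is not None


sample = """\
* docker.service - Docker Application Container Engine
   Loaded: loaded (/usr/lib/systemd/system/docker.service; enabled; vendor preset: enabled)
   Active: active (running)
"""
print(service_is_running(sample))  # True
```

The text to parse can be captured with `subprocess.run(["systemctl", "status", "--no-pager", "docker.service"], capture_output=True, text=True).stdout`.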

3. Verify node functionality

Enter the following commands:

kubectl get no --no-headers | grep -vw Ready

Output: The command output shows nothing.

kubectl get no --no-headers | wc -l

Output: The command output shows the number of CaaS nodes.
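The node check can also be done by comparing the STATUS column exactly, which avoids treating "NotReady" as a match for "Ready". The sketch below parses the output of `kubectl get no --no-headers`. The sample output is hypothetical, including node ages and versions.

```python
def not_ready_nodes(kubectl_output: str) -> list:
    """Return names of nodes whose STATUS column is not exactly 'Ready'."""
    names = []
    for line in kubectl_output.splitlines():
        fields = line.split()
        if len(fields) >= 2 and fields[1] != "Ready":
            names.append(fields[0])
    return names


# Hypothetical output of `kubectl get no --no-headers`.
sample = """\
controller-1   Ready      master   5d   v1.16.0
controller-2   NotReady   master   5d   v1.16.0
controller-3   Ready      master   5d   v1.16.0
"""
print(not_ready_nodes(sample))  # ['controller-2']
```

Because the comparison is exact, a cordoned node reported as `Ready,SchedulingDisabled` is also listed; whether that should count as a failure depends on the site.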

4. Verify Components

Enter the following command:

kubectl get po --no-headers --namespace=kube-system --field-selector status.phase!=Running

Output: The command output shows nothing.

5. Confirm Package Manager Status (Helm)

- Docker registry is running, and images can be downloaded:

image=$(docker images -f 'reference=*/rec/hypercube' --format="{{.Repository}}:{{.Tag}}"); docker rmi $image; docker pull $image

Output: Status: Downloaded newer image for …

- Chart repository is up and running:

curl -sS -XGET --cacert /etc/chart-repo/ssl/ca.pem --cert /etc/chart-repo/ssl/chart-repo?.pem --key /etc/chart-repo/ssl/chart-repo?-key.pem https://chart-repo.kubesystem.svc.rec.io:8088/charts/index.yaml

Output: output is a yaml file.

- Helm is able to run a sample application:

helm list

Output: rec-infra.

Deployment Failures

Deployments sometimes fail, usually due to misconfiguration or incorrectly entered addresses.

To re-launch a failed deployment

There are two options for redeploying.

- /opt/nokia/cmframework/scripts/bootstrap.sh /opt/nokia/installer-ui/user_config/user_config.yaml (the user_config.yaml file is loaded from installer GUI)

- openvt -s -w /opt/start-menu/start_menu.sh &

Note: In some cases, modifications to the user_config.yaml may be necessary to resolve a failure.

If re-deployment is not possible, then the deployment will need to be started from booting to the REC.iso,