This is a draft and work in progress

The Blueprint Validation Framework offers a set of tools that can be used to test Akraino deployments on different layers (hardware, OS, k8s, OpenStack, etc.).

The tests are grouped by layer of the stack, such as hardware, operating system, cloud infrastructure and security. Since the project is constantly evolving, the full list of available tests is kept in the project's repo, where each test is located under its respective layer. Each layer has its own container image built by the validation project. The full list of images provided can be found in the project's DockerHub repo.

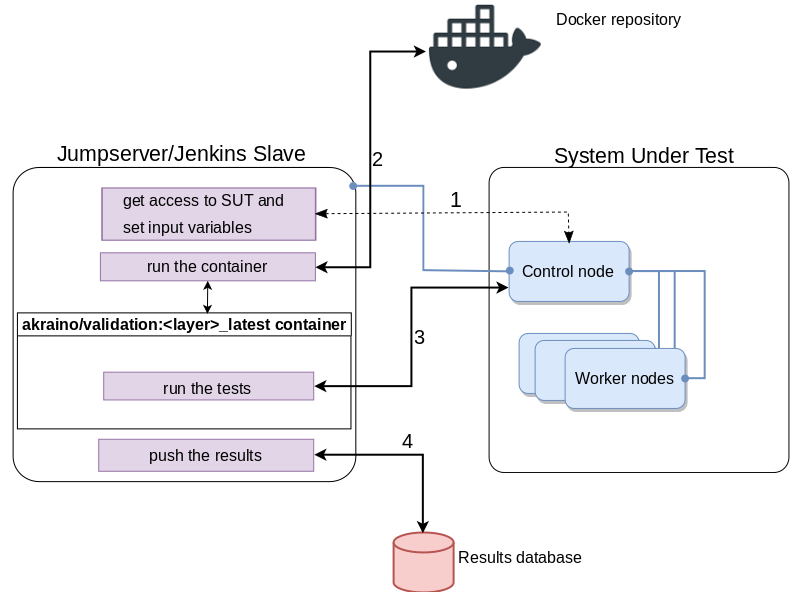

Topology

General Requirements

The Jumpserver needs to have Docker installed; it can also act as a Jenkins slave. All the other tools needed to run the tests are available inside the containers.

Some of the tests install their testing tools directly on the system under test (SUT), so the SUT needs to have access to the Internet.
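A quick pre-flight check on the Jumpserver can catch a missing Docker installation before any test is started. The sketch below is only an illustration and not part of the validation project: the function name `missing_tools` is hypothetical, and it checks nothing more than whether the executables can be found on the PATH.

```python
import shutil


def missing_tools(tools=("docker",)):
    """Return the names from `tools` that are not found on the PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


if __name__ == "__main__":
    missing = missing_tools()
    if missing:
        print("Missing on this Jumpserver:", ", ".join(missing))
    else:
        print("All required tools found.")
```

Finding the executable does not prove that the Docker daemon is running or that the current user may use it, so this only rules out the most basic setup problem.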

Using blucon to run the tests

The containers are run from a Jumpserver with access to the SUT. Some tests use ssh to connect to the cluster, while others use different tools (e.g. the k8s layer uses the kubectl client). The input needed from the user is specified in this guide. Each layer has an individual container from which its tests run.

The tests run using the bluval tool. The tool takes as parameters the layer and the blueprint for which to run the tests. A base blueprint is given as an example here.

The blucon script can be used to both start the container and run the tests. The steps to do that are:

Clone the validation repo

ubuntu@ubuntu:~$ git clone http://gerrit.akraino.org/r/validation

Customize the blueprint - Optional step

In case your blueprint yaml file is not already in the validation repo inside the bluval folder, or you want to run a subset of the tests, you can create your own bluval yaml file and mount it later in the container. Below is an example of a demo blueprint that will call just the k8s conformance tests.

    ubuntu@ubuntu:~$ cat validation/bluval/bluval-demo.yaml
    blueprint:
        name: demo
        layers:
            - k8s
        k8s: &k8s
            - name: conformance
              what: conformance
              optional: "False"

Fill in volumes.yaml file

Fill in the file validation/bluval/volumes.yaml with the data that applies to your setup. Volumes that don't have a local value set will be ignored. In the example below, only the following volumes are set:

- Location of the kube config files needed for access to the cluster

- Location of the customized blueprint file

- Location where the results are stored

    ubuntu@ubuntu:~$ cat validation/bluval/volumes.yaml |tail -n +23
    volumes:
        # location of the ssh key to access the cluster
        ssh_key_file:
            local: ''
            target: '/root/.ssh'
        # location of the k8s access files (config file, certificates, keys)
        kube_config_dir:
            local: '/home/ubuntu/kube'
            target: '/root/.kube/'
        # location of the customized variables.yaml
        custom_variables_file:
            local: ''
            target: '/opt/akraino/validation/tests/variables.yaml'
        # location of the bluval-<blueprint>.yaml file
        blueprint_dir:
            local: '/home/ubuntu/validation/bluval'
            target: '/opt/akraino/validation/bluval'
        # location on where to store the results on the local jumpserver
        results_dir:
            local: '/home/ubuntu/results'
            target: '/opt/akraino/results'

Do not modify the target part of each volume. These are fixed paths that the tools inside the container expect to exist as is, and they are created automatically inside the container when it starts.
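To make the "volumes without a local value are ignored" rule concrete, the sketch below turns the example values above into docker `-v local:target` arguments. It is an illustration under stated assumptions and not the way blucon is known to be implemented: the function name `mount_args` is hypothetical, and the volumes are written as a Python dict rather than parsed from the YAML file.

```python
def mount_args(volumes):
    """Build docker `-v local:target` arguments for volumes with a local path."""
    args = []
    for name, paths in volumes.items():
        local = paths.get("local", "")
        if not local:
            continue  # no local value set, so the volume is ignored
        args += ["-v", f"{local}:{paths['target']}"]
    return args


# Values copied from the volumes.yaml example above.
volumes = {
    "ssh_key_file": {"local": "", "target": "/root/.ssh"},
    "kube_config_dir": {"local": "/home/ubuntu/kube", "target": "/root/.kube/"},
    "custom_variables_file": {
        "local": "",
        "target": "/opt/akraino/validation/tests/variables.yaml",
    },
    "blueprint_dir": {
        "local": "/home/ubuntu/validation/bluval",
        "target": "/opt/akraino/validation/bluval",
    },
    "results_dir": {"local": "/home/ubuntu/results", "target": "/opt/akraino/results"},
}

for arg in mount_args(volumes):
    print(arg)
```

With the example values, only the kube config, blueprint and results directories produce a mount; the ssh key and the custom variables file are skipped because their local value is empty.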

Depending on which tool is used for connecting to the cluster (ssh, kubectl, etc.), the corresponding volume needs to be mounted inside the container. To see which volumes are mounted for each layer, check the second section of the same volumes.yaml file.

    ubuntu@ubuntu:~$ cat validation/bluval/volumes.yaml |tail -n +45
    # parameters that will be passed to the container at each layer
    layers:
        # volumes mounted at all layers; volumes specific for a different layer are below
        common:
            - custom_variables_file
            - blueprint_dir
            - results_dir
        hardware:
            - ssh_key_file
        os:
            - ssh_key_file
        networking:
            - ssh_key_file
        k8s:
            - ssh_key_file
            - kube_config_dir
        k8s_networking:
            - ssh_key_file
            - kube_config_dir

Run the tests

ubuntu@ubuntu:~$ python3 validation/bluval/blucon.py <blueprint_name> [-l <layer>]
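Before running a layer, it can help to check which volumes that layer expects. According to the layers section of volumes.yaml, these are the common volumes plus the ones listed for the layer itself. The sketch below illustrates that lookup; the function name `volumes_for_layer` is hypothetical, the mapping is copied by hand from the example file, and this is not presented as the way blucon resolves volumes internally.

```python
def volumes_for_layer(layers, layer):
    """Return the volume names for `layer`: the common ones plus its own."""
    names = list(layers.get("common", []))
    for name in layers.get(layer, []):
        if name not in names:
            names.append(name)
    return names


# Copied from the layers section of the volumes.yaml example.
layers = {
    "common": ["custom_variables_file", "blueprint_dir", "results_dir"],
    "hardware": ["ssh_key_file"],
    "os": ["ssh_key_file"],
    "networking": ["ssh_key_file"],
    "k8s": ["ssh_key_file", "kube_config_dir"],
    "k8s_networking": ["ssh_key_file", "kube_config_dir"],
}

print(volumes_for_layer(layers, "k8s"))
```

For the k8s layer this lists the three common volumes followed by `ssh_key_file` and `kube_config_dir`, which are the entries whose local value should be filled in before running that layer.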

Getting the results

The results are stored inside the container in the /opt/akraino/results folder. If a volume was mounted to this folder (e.g. /home/ubuntu/results), the results can also be viewed from the host where the tests were run. To view them, open the report.html file generated by the Robot Framework in a browser.

    ubuntu@ubuntu:~$ ls results/k8s/conformance/
    201909110859_sonobuoy_376a4ddc-4498-49fc-af2e-999242c4c245.tar.gz  Conformance.Conformance.log  log.html  output.xml  report.html
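When no browser is at hand, the output.xml file next to report.html can be summarized from the command line. The sketch below counts test results by reading the `status` attribute of the `<status>` element directly under each `<test>` element. The function name `summarize` is hypothetical, and the embedded sample is a made-up, heavily trimmed stand-in for a real output.xml.

```python
import xml.etree.ElementTree as ET


def summarize(xml_text):
    """Count test statuses in Robot Framework output.xml content."""
    root = ET.fromstring(xml_text)
    counts = {}
    for test in root.iter("test"):
        status = test.find("status")  # direct child only, not keyword statuses
        if status is None:
            continue
        result = status.get("status")
        counts[result] = counts.get(result, 0) + 1
    return counts


# Made-up, heavily trimmed sample; a real output.xml contains much more.
SAMPLE = """<robot>
  <suite name="Conformance">
    <test name="Example passing test">
      <kw name="Some keyword"><status status="PASS"/></kw>
      <status status="PASS"/>
    </test>
    <test name="Example failing test">
      <status status="FAIL"/>
    </test>
  </suite>
</robot>"""

print(summarize(SAMPLE))
```

For a real run, the file content can be passed in the same way, for example by reading results/k8s/conformance/output.xml from the mounted results folder.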

Troubleshooting

In case of failures, the sonobuoy archive can be used to inspect all the logs. For live troubleshooting, the container for a specific layer can be started with the command below:

docker run -ti -v <mount-volume-to-access-the-cluster> akraino/validation:<layer>-latest /bin/sh

Inside the container, tests can be invoked directly using the bluval.py script located at /opt/akraino/validation/bluval/.

The tests themselves are located at /opt/akraino/validation/tests/ and can be modified locally to print more output.
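The troubleshooting command shown earlier can be composed from a layer name and a list of mounts, which avoids typing mistakes when switching between layers. This is a minimal sketch: the function name `debug_command` is hypothetical, the image name follows the `akraino/validation:<layer>-latest` pattern used above, and the mount value in the example is taken from the volumes.yaml example.

```python
import shlex


def debug_command(layer, mounts):
    """Compose the interactive `docker run` command for one layer's container."""
    cmd = ["docker", "run", "-ti"]
    for mount in mounts:
        cmd += ["-v", mount]
    cmd += [f"akraino/validation:{layer}-latest", "/bin/sh"]
    return cmd


cmd = debug_command("k8s", ["/home/ubuntu/kube:/root/.kube/"])
print(shlex.join(cmd))
```

The sketch only prints the command. The list form can be handed to `subprocess.run` from an interactive terminal, or the printed line can be pasted into the shell on the Jumpserver.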

The OS layer

TBD

The Hardware layer

TBD

The Networking layer

TBD

The Kubernetes layer

| Test suites | Access type |
|---|---|
| k8s conformance | kubectl tool. Before running the image, copy the folder ~/.kube from your Kubernetes master node to a local folder (e.g. ~/k8s_access). |
| ha | ssh key. Before running the image, copy the ssh key used to access your cluster to a local folder (e.g. ~/.ssh). |

The OpenStack layer

TBD