Introduction:

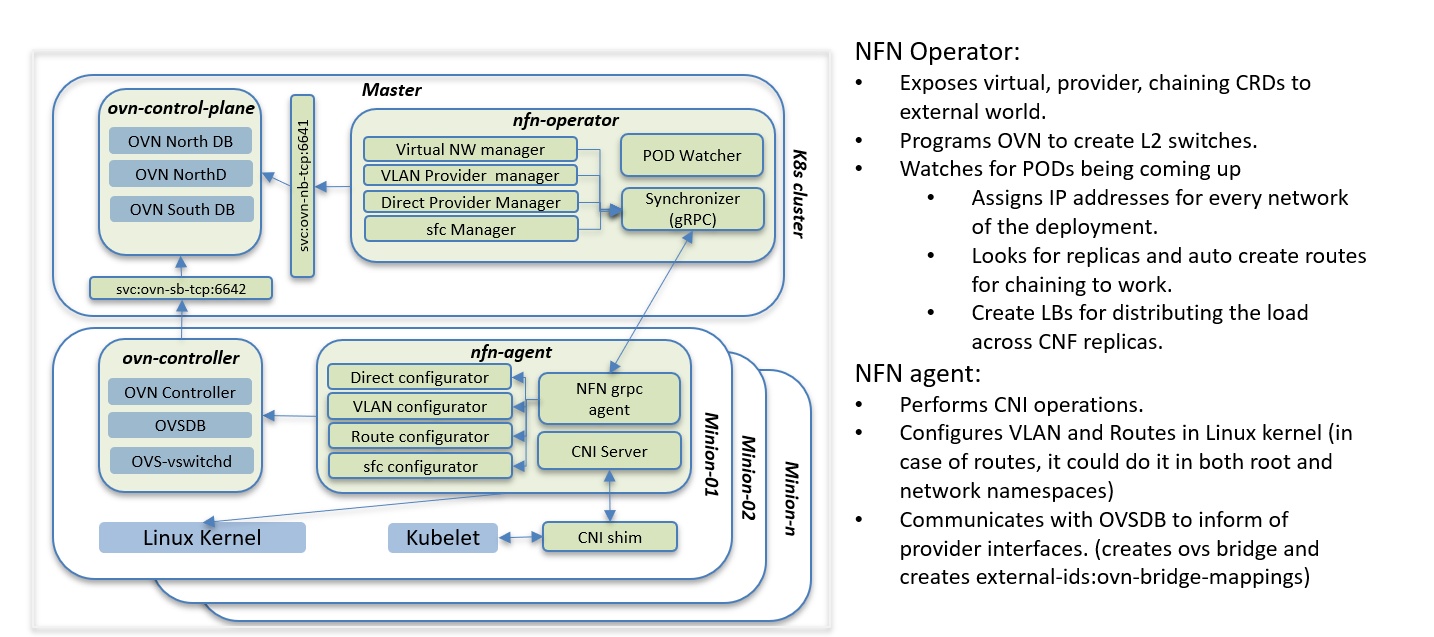

OVN4NFV is a network controller built on the Kubernetes controller framework that provides OpenFlow control based on OVN. It addresses OVN-based creation of multiple networks, supports multiple network interfaces, and supports both virtual networks and provider networks.

Problem statement:

Application transformation is one of the major objectives of edge computing in the cloud-native evolution. Preparing a PNF (Physical Network Function) or a VNF (Virtual Network Function) for deployment at the edge is challenging because the NFs (Network Functions) are decomposed into smaller microservices, and these microservices are deployed across multiple edge locations. Controlling the network traffic in these scenarios, both control plane and data plane, is required to achieve low latency and multi-cluster networking.

Solution:

Adding multi-cluster networking is a challenging requirement for edge networking in the cloud-native world. Kubernetes delegates all networking features to the CNI (Container Network Interface), and right now there are 16+ CNI types offering networking features that range from localhost to BGP networking. A single network controller for the multiple clusters within an edge, and also across geo-distributed edge locations, is required to create virtual networks and provider networks across the edges and to apply the same tuning parameters to the network resources in the edges.

Because edge locations can be as small as 16 GB of RAM, we do not have the option of running multiple network controllers in an edge. We need a single network controller that can handle all of the network requirements of the edge.

- The kubelet invokes the CNI as a process for every pod creation and deletion. More complexity in the CNI binary increases pod creation and deletion time. We therefore need a thin CNI shim layer, with the complexity moved into the network controller. Running a CNI controller as a process alongside the kubelet would be the ideal solution, but this requires a serious change in Kubernetes networking.

- CNIs are becoming complex because they are forced to implement many features within themselves.

- A proxy CNI that calls other network controllers or CNIs to create multiple network interfaces is forced to perform multiple read and write operations to store the pre- and post-state of network creation. It also has to invoke multiple CNIs to achieve even a simple networking configuration such as bonding or QoS, or to apply an SFC (Service Function Chaining) mechanism.

- The CNI framework also does not address on-the-fly changes in networking, such as changing routes, recovering from network failovers, or applying SFCs to meet network demands on the fly.

OVN4NFV is designed to address all of these challenges. We designed a very thin CNI shim layer that exists to conform to the CNI framework, and all of the networking complexity, such as multiple networks and the handling of infinite and finite network resources, is moved into a single network controller.
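The split between a thin shim and a single controller can be sketched roughly as follows. This is a minimal illustration, not the OVN4NFV implementation: the `NetworkController` class, its `handle` method, and the request dictionary are hypothetical names, and the controller is called in-process here, whereas a real shim would reach it over some transport. Only the `CNI_*` environment variable names come from the CNI specification.

```python
class NetworkController:
    """Hypothetical stand-in for the single network controller.

    All state and networking logic live here, not in the shim.
    """

    def __init__(self):
        # Maps (container id, interface name) to attachment details.
        self.attachments = {}

    def handle(self, request):
        key = (request["container_id"], request["ifname"])
        if request["command"] == "ADD":
            self.attachments[key] = {"netns": request["netns"]}
            return {"status": "attached"}
        if request["command"] == "DEL":
            # Deleting an unknown attachment is treated as a no-op.
            self.attachments.pop(key, None)
            return {"status": "detached"}
        raise ValueError(f"unsupported CNI command: {request['command']}")


def cni_shim(env, controller):
    """Thin shim: translate the CNI environment into a request and forward it."""
    request = {
        "command": env["CNI_COMMAND"],
        "container_id": env["CNI_CONTAINERID"],
        "netns": env.get("CNI_NETNS", ""),
        "ifname": env.get("CNI_IFNAME", "eth0"),
    }
    return controller.handle(request)
```

Because the shim only builds a request and forwards it, the work done per pod creation or deletion inside the CNI process stays small, which is the property the design asks for.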

Features:

Infinite Network Resources:

Virtual Networks:

OVN4NFV uses the NFN operator to define virtual network CRs. Each CR creates an OVN network for virtual networking as defined in the CR.

    apiVersion: k8s.plugin.opnfv.org/v1alpha1
    kind: Network
    metadata:
      name: ovn-priv-net
    spec:
      cniType: ovn4nfv
      ipv4Subnets:
      - subnet: 172.16.33.0/24
        name: subnet1
        gateway: 172.16.33.1/24
        excludeIps: 172.16.33.2 172.16.33.5..172.16.33.10

This CR defines the OVN network and provides the gateway and the excluded IPs, which are reserved for any internal static IP address assignment.
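To make the effect of the gateway and `excludeIps` fields concrete, here is a minimal sketch of how such a string could be expanded. It assumes that entries are separated by whitespace and that `a..b` denotes an inclusive range; both are inferred from the example value in the CR, not from a specification. The function names are illustrative.

```python
import ipaddress


def parse_exclude_ips(spec):
    """Expand an excludeIps string into a set of IPv4 addresses.

    Assumption: tokens are whitespace-separated and "a..b" means
    every address from a through b, inclusive.
    """
    excluded = set()
    for token in spec.split():
        if ".." in token:
            start_text, end_text = token.split("..", 1)
            start = ipaddress.IPv4Address(start_text)
            end = ipaddress.IPv4Address(end_text)
            if end < start:
                raise ValueError(f"range end is before range start: {token}")
            for value in range(int(start), int(end) + 1):
                excluded.add(ipaddress.IPv4Address(value))
        else:
            excluded.add(ipaddress.IPv4Address(token))
    return excluded


def free_addresses(subnet, gateway, exclude_ips):
    """List host addresses in the subnet that are neither gateway nor excluded."""
    network = ipaddress.ip_network(subnet)
    gateway_ip = ipaddress.ip_interface(gateway).ip
    reserved = parse_exclude_ips(exclude_ips) | {gateway_ip}
    return [host for host in network.hosts() if host not in reserved]


free = free_addresses(
    "172.16.33.0/24",
    "172.16.33.1/24",
    "172.16.33.2 172.16.33.5..172.16.33.10",
)
print(free[0])  # 172.16.33.3
```

With the values from the CR, the gateway and seven excluded addresses are held back, so the first address left for dynamic assignment is 172.16.33.3.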

Provider Networks:

Provider networks support both VLAN and direct provider networking.

    apiVersion: k8s.plugin.opnfv.org/v1alpha1
    kind: ProviderNetwork
    metadata:
      name: pnetwork
    spec:
      cniType: ovn4nfv
      ipv4Subnets:
      - subnet: 172.16.33.0/24
        name: subnet1
        gateway: 172.16.33.1/24
        excludeIps: 172.16.33.2 172.16.33.5..172.16.33.10
      providerNetType: VLAN
      vlan:
        vlanId: "100"
        providerInterfaceName: eth0
        logicalInterfaceName: eth0.100
        vlanNodeSelector: specific
        nodeLabelList:
        - kubernetes.io/hostname=ubuntu18

The major difference between the VLAN provider network and the direct provider network is that the VLAN information is provided in the VLAN CR and is left out of the direct provider network CR.

    apiVersion: k8s.plugin.opnfv.org/v1alpha1
    kind: ProviderNetwork
    metadata:
      name: directpnetwork
    spec:
      cniType: ovn4nfv
      ipv4Subnets:
      - subnet: 172.16.34.0/24
        name: subnet2
        gateway: 172.16.34.1/24
        excludeIps: 172.16.34.2 172.16.34.5..172.16.34.10
      providerNetType: DIRECT
      direct:
        providerInterfaceName: eth1
        directNodeSelector: specific
        nodeLabelList:
        - kubernetes.io/hostname=ubuntu18
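The VLAN versus direct distinction described above could be checked with a small validation routine like the one below. This is only a sketch: which fields are treated as mandatory is an assumption read off the two example CRs, and `validate_provider_network` is a hypothetical helper, not part of the NFN operator.

```python
# Assumption: the section name and required fields per providerNetType are
# inferred from the example CRs in this document.
REQUIRED_FIELDS = {
    "VLAN": ("vlan", ("vlanId", "providerInterfaceName", "logicalInterfaceName")),
    "DIRECT": ("direct", ("providerInterfaceName",)),
}


def validate_provider_network(spec):
    """Return a list of problems found in a ProviderNetwork spec (empty if none)."""
    net_type = spec.get("providerNetType")
    if net_type not in REQUIRED_FIELDS:
        return [f"unknown providerNetType: {net_type!r}"]

    errors = []
    section_name, fields = REQUIRED_FIELDS[net_type]
    section = spec.get(section_name)
    if not isinstance(section, dict):
        errors.append(f"{net_type} network needs a '{section_name}' section")
    else:
        for field in fields:
            if field not in section:
                errors.append(f"'{section_name}.{field}' is missing")

    # A direct network must not carry VLAN details, and vice versa.
    for other_type, (other_section, _) in REQUIRED_FIELDS.items():
        if other_type != net_type and other_section in spec:
            errors.append(f"'{other_section}' section is not valid for {net_type}")
    return errors


direct_spec = {
    "providerNetType": "DIRECT",
    "direct": {"providerInterfaceName": "eth1"},
}
print(validate_provider_network(direct_spec))  # []
```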

Service Function Chaining:

    apiVersion: k8s.plugin.opnfv.org/v1alpha1
    kind: NetworkChaining
    metadata:
      name: chain1
      namespace: vFW
    spec:
      type: Routing
      routingSpec:
        leftNetwork:
        - networkName: ovn-provider1
          gatewayIP: 10.1.5.1
          subnet: 10.1.5.0/24
        rightNetwork:
        - networkName: ovn-provider1
          gatewayIP: 10.1.10.1
          subnet: default
        networkChain: app=slb, ovn-net1, app=ngfw, ovn-net2, app=sdwancnf
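One way to read the `networkChain` value is as an alternating sequence of pod label selectors and the networks that connect them. The sketch below parses the string under that assumption; the interpretation and the helper names `parse_network_chain` and `chain_hops` are illustrative, not taken from the OVN4NFV code.

```python
def parse_network_chain(chain):
    """Split a networkChain string into tagged elements.

    Assumption: elements alternate between "key=value" label selectors
    (network functions) and network names, starting and ending with a function.
    """
    elements = [item.strip() for item in chain.split(",")]
    if len(elements) % 2 == 0:
        raise ValueError("chain must start and end with a function selector")

    parsed = []
    for index, element in enumerate(elements):
        if index % 2 == 0:
            key, separator, value = element.partition("=")
            if not separator:
                raise ValueError(f"expected key=value selector, got {element!r}")
            parsed.append(("function", {key: value}))
        else:
            parsed.append(("network", element))
    return parsed


def chain_hops(parsed):
    """Return (source selector, network, destination selector) for each hop."""
    return [
        (parsed[i][1], parsed[i + 1][1], parsed[i + 2][1])
        for i in range(0, len(parsed) - 2, 2)
    ]


parsed = parse_network_chain("app=slb, ovn-net1, app=ngfw, ovn-net2, app=sdwancnf")
for source, network, destination in chain_hops(parsed):
    print(source, "->", network, "->", destination)
```

For the chain in the CR this yields two hops: `app=slb` to `app=ngfw` over `ovn-net1`, and `app=ngfw` to `app=sdwancnf` over `ovn-net2`.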

Finite Network Resources:

SRIOV Overlay Networks:

Required features in SRIOV Overlay networking:

- Currently, OVN4NFV creates veth pair interfaces for all interfaces by default.

- SRIOV overlay networks introduce a feature to include the interface type in the OVN networking, to provide the device plugin socket name, and to target only the nodes that carry SRIOV hardware-enabled labels.
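Targeting only SRIOV-capable nodes comes down to matching `nodeLabelList` entries against node labels. The sketch below assumes each entry has the form `key=value` and that a node must satisfy every entry; the all-entries-must-match rule is an assumption, and the node names and labels in the usage example are made up for illustration.

```python
def node_matches(node_labels, node_label_list):
    """Return True if the node carries every key=value label in the list.

    Assumption: all entries must match (logical AND).
    """
    for entry in node_label_list:
        key, separator, value = entry.partition("=")
        if not separator or node_labels.get(key) != value:
            return False
    return True


def select_nodes(nodes, node_label_list):
    """Return the names of the nodes whose labels satisfy the label list."""
    return [name for name, labels in nodes.items() if node_matches(labels, node_label_list)]


# Hypothetical cluster: only "edge-1" has SRIOV-capable hardware.
nodes = {
    "edge-1": {
        "feature.node.kubernetes.io/network-sriov.capable": "true",
        "feature.node.kubernetes.io/custom-xl710.present": "true",
    },
    "edge-2": {"kubernetes.io/hostname": "edge-2"},
}
wanted = [
    "feature.node.kubernetes.io/network-sriov.capable=true",
    "feature.node.kubernetes.io/custom-xl710.present=true",
]
print(select_nodes(nodes, wanted))  # ['edge-1']
```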

SRIOV type virtual network:

    apiVersion: k8s.plugin.opnfv.org/v1alpha1
    kind: Network
    metadata:
      name: ovn-sriov-net
    spec:
      cniType: ovn4nfv
      ipv4Subnets:
      - subnet: 172.16.33.0/24
        name: subnet1
        gateway: 172.16.33.1/24
        excludeIps: 172.16.33.2 172.16.33.5..172.16.33.10
      NodeSelector: specific
      nodeLabelList:
      - feature.node.kubernetes.io/network-sriov.capable=true
      - feature.node.kubernetes.io/custom-xl710.present=true

SRIOV type provider network:

    apiVersion: k8s.plugin.opnfv.org/v1alpha1
    kind: ProviderNetwork
    metadata:
      name: ovn-sriov-vlan-pnetwork
    spec:
      cniType: ovn4nfv
      interface:
      - Type: sriov
        deviceName: intel.com/intel_sriov_700
      ipv4Subnets:
      - subnet: 172.16.33.0/24
        name: subnet1
        gateway: 172.16.33.1/24
        excludeIps: 172.16.33.2 172.16.33.5..172.16.33.10
      providerNetType: VLAN
      vlan:
        vlanId: "100"
        providerInterfaceName: eth0
        logicalInterfaceName: eth0.100
        vlanNodeSelector: specific
        nodeLabelList:
        - feature.node.kubernetes.io/network-sriov.capable=true
        - feature.node.kubernetes.io/custom-xl710.present=true

SRIOV type direct network:

    apiVersion: k8s.plugin.opnfv.org/v1alpha1
    kind: ProviderNetwork
    metadata:
      name: ovn-sriov-direct-pnetwork
    spec:
      cniType: ovn4nfv
      interface:
      - Type: sriov
        deviceName: intel.com/intel_sriov_700
      ipv4Subnets:
      - subnet: 172.16.34.0/24
        name: subnet2
        gateway: 172.16.34.1/24
        excludeIps: 172.16.34.2 172.16.34.5..172.16.34.10
      providerNetType: DIRECT
      direct:
        providerInterfaceName: enp
        directNodeSelector: specific
        nodeLabelList:
        - feature.node.kubernetes.io/network-sriov.capable=true
        - feature.node.kubernetes.io/custom-xl710.present=true

Parameter definition:

interface - Defines the type of SRIOV interface to be created.

deviceName - Defines the device plugin to be targeted to get the pod resource information from the kubelet API. For more information, refer to https://github.com/kubernetes/kubernetes/blob/master/test/e2e_node/util.go

    apiVersion: v1
    kind: Pod
    metadata:
      name: pod-case-01
      annotations:
        k8s.plugin.opnfv.org/nfn-network: '{ "type": "ovn4nfv", "interface": [{ "name": "ovn-sriov-virtual-net", "interface": "net0", "Type": "sriov", "Attribute": [{ "type": "bonding", "mode": "roundrobin" }, { "type": "tuning", "bandwidth": "2GB" }] }] }'
    spec:
      containers:
      - name: test-pod
        image: docker.io/centos/tools:latest
        command:
        - /sbin/init
        resources:
          requests:
            intel.com/intel_sriov_700: '1'
          limits:
            intel.com/intel_sriov_700: '1'

- The admission controller should be part of the NFN operator and should insert the requests and limits into the pod spec by reading the OVN4NFV network CR.

- This design adds SRIOV directly into the OVN overlay for both primary and secondary networking. The development should also address SNAT for all the interfaces.
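The admission step mentioned above could look roughly like the following. This is a sketch under several assumptions: the annotation value is valid JSON, the mapping from network name to `deviceName` has already been read from the network CRs, and the resources are added to the first container only. `inject_sriov_resources` and `device_by_network` are hypothetical names, not NFN operator APIs.

```python
import copy
import json

ANNOTATION = "k8s.plugin.opnfv.org/nfn-network"


def inject_sriov_resources(pod, device_by_network):
    """Return a copy of the pod with SRIOV requests and limits filled in.

    device_by_network maps a network name to the deviceName taken from
    its CR (assumed to be looked up beforehand).
    """
    mutated = copy.deepcopy(pod)
    annotation = mutated.get("metadata", {}).get("annotations", {}).get(ANNOTATION)
    containers = mutated.get("spec", {}).get("containers", [])
    if not annotation or not containers:
        return mutated

    # Count how many interfaces the pod asks for on each SRIOV device.
    counts = {}
    for interface in json.loads(annotation).get("interface", []):
        device = device_by_network.get(interface.get("name"))
        if device:
            counts[device] = counts.get(device, 0) + 1

    if counts:
        # Assumption: the first container receives the device resources.
        resources = containers[0].setdefault("resources", {})
        for section in ("requests", "limits"):
            bucket = resources.setdefault(section, {})
            for device, count in counts.items():
                bucket[device] = str(count)
    return mutated
```

A pod that names one SRIOV network in its annotation and has no `resources` section would come back with `intel.com/intel_sriov_700: '1'` under both `requests` and `limits`, matching the pod spec shown earlier, while the input pod object is left untouched.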